Remote judging is the new baseline

Remote judging isn't just a backup plan anymore. Even as physical events return, the efficiency of digital evaluation is hard to ignore. But you can't just move a physical clipboard to a Zoom call and expect it to work. We have to rethink how we verify work and manage panels when everyone is in a different time zone.

The benefits are clear. Virtual judging expands the pool of qualified judges beyond geographic limits, giving you access to specialized expertise that might otherwise be unavailable. Savings on travel and venue rental can be substantial. The main hurdles are ensuring technical accessibility for all participants and maintaining the fairness and integrity of the process. These aren’t insurmountable, but they require deliberate planning.

This guide aims to provide a practical, evidence-based approach to setting up virtual competition judging for events in 2026. It’s not about avoiding the complexities of remote evaluation; it’s about understanding them and building a system that delivers a fair, reliable, and valuable experience for both judges and competitors. A successful virtual competition isn’t a pale imitation of an in-person event, but a distinct experience with its own strengths.

Pick a platform that actually fits

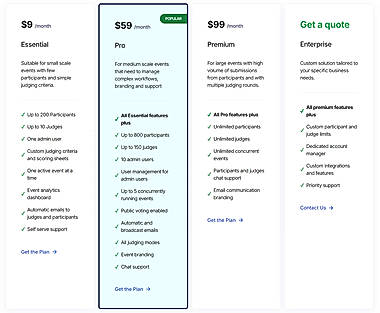

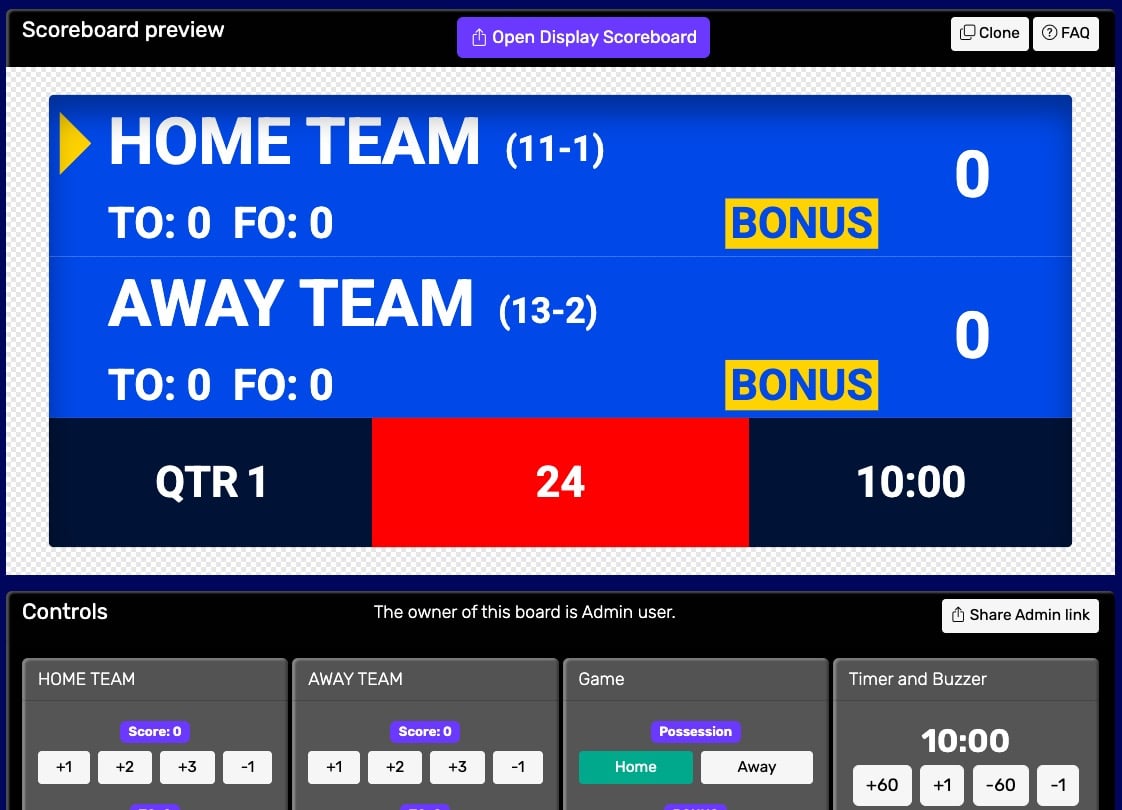

Selecting the right platform is arguably the most important decision you’ll make. While video conferencing tools like Zoom or Microsoft Teams can facilitate communication, they lack the specialized features needed for robust competition judging. Think carefully about the specific needs of your competition. An art contest requires different functionality than a business pitch competition or a scientific abstract review.

Key features to prioritize include blind judging capabilities – concealing competitor identities from judges to minimize bias – and customizable scoring rubrics. Real-time collaboration features allow judges to discuss entries and resolve discrepancies. Secure entry submission and storage are essential to protect intellectual property and prevent unauthorized access. Look for platforms that offer robust data security measures and comply with relevant privacy regulations.

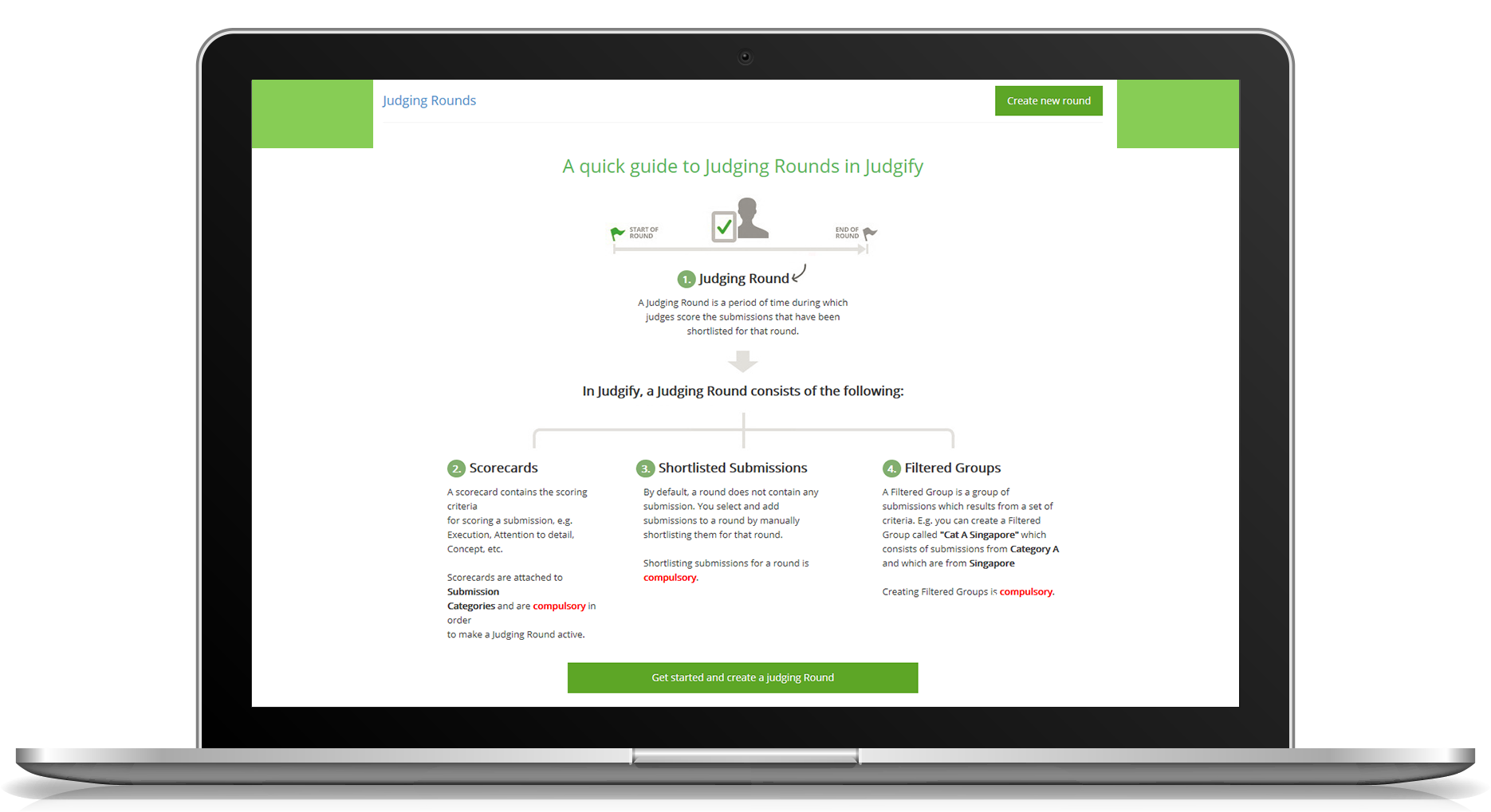

RocketJudge focuses on streamlining events, offering features for judge management and automated scoring. Evalato provides a suite of tools for awards management, including online judging software. Judgify offers solutions for contest planning, submission management, and advanced scoring. Don't choose a platform on name recognition alone. Evaluate how well it supports your specific judging workflow and criteria, and don’t assume a feature exists just because a vendor advertises it: request a demo and test it thoroughly.

- Blind Judging: Hide names and photos from judges.

- Customizable Rubrics: Tailor scoring criteria to your competition.

- Secure Submission: Protect intellectual property.

- Real-time Collaboration: Facilitate judge discussion.

Virtual Competition Judging Platform Comparison - 2026

| Platform Type | Blind Judging | Rubric & Scoring | Security & Access Control | Collaboration Features | Overall Complexity |
|---|---|---|---|---|---|
| General Event Platforms (e.g., Zoom, Teams) | Basic - relies on judge discipline | Limited - requires manual implementation | Standard meeting security features | Good for live discussion, limited structured feedback | Lower - familiar interface, but judging features are add-ons |
| Dedicated Judging Platforms (e.g., RocketJudge, Evalato) | Strong - built-in features for anonymity | Excellent - designed for detailed rubrics and automated scoring | Robust - granular permission controls and data protection | High - designed for judge communication and review workflows | Medium - learning curve for platform-specific features |
| DIY Solutions (e.g., Google Forms + Spreadsheets) | Manual - requires significant setup and monitoring | Moderate - rubrics can be created, scoring is manual | Basic - relies on standard account security | Limited - requires separate communication channels | Higher - significant manual effort for setup, data management, and analysis |
| Hybrid Approach (Combination of platforms) | Variable - depends on the tools combined | Moderate to Excellent - can leverage strengths of different tools | Variable - depends on the tools combined | Moderate - requires careful integration | Medium to Higher - requires technical expertise for integration |
| Open Source Solutions | Potentially High - depends on implementation | Highly Customizable - requires development effort | Variable - depends on security measures implemented | Variable - depends on features developed | Higher - requires technical expertise and maintenance |

This comparison is qualitative and describes categories of tools, not specific product versions. Confirm current product details in the official docs before making implementation choices.

Judging Workflow: From Submission to Results

A well-defined judging workflow is crucial for fairness and efficiency. Start with a clear entry submission process, ensuring all participants understand the requirements and deadlines. Once submissions are received, assign judges based on their expertise and potential conflicts of interest. Automated assignment features, if available in your chosen platform, can streamline this process.
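As a rough illustration of what automated assignment involves, the sketch below pairs each entry with the least-loaded judges who have matching expertise and no declared conflict. The function name `assign_judges`, the data shapes, and the sample names are all hypothetical; a dedicated platform would handle this through its own settings.

```python
def assign_judges(entries, judges, conflicts, per_entry=2):
    """Assign `per_entry` judges to each entry.

    entries:   dict of entry ID -> category (hypothetical shape)
    judges:    dict of judge name -> set of categories they can judge
    conflicts: set of (judge, entry ID) pairs that must never be paired
    """
    load = {name: 0 for name in judges}
    assignments = {}
    for entry_id, category in entries.items():
        eligible = [
            name
            for name, expertise in judges.items()
            if category in expertise and (name, entry_id) not in conflicts
        ]
        if len(eligible) < per_entry:
            raise ValueError(f"Not enough eligible judges for {entry_id}")
        # Prefer judges with the fewest entries so far; the name breaks ties.
        eligible.sort(key=lambda name: (load[name], name))
        chosen = eligible[:per_entry]
        for name in chosen:
            load[name] += 1
        assignments[entry_id] = chosen
    return assignments


entries = {"E1": "art", "E2": "art", "E3": "pitch"}
judges = {
    "ana": {"art"},
    "ben": {"art", "pitch"},
    "chen": {"art", "pitch"},
    "dee": {"pitch"},
}
conflicts = {("ben", "E1")}  # ben has declared a conflict with entry E1
assignments = assign_judges(entries, judges, conflicts)
```

Raising an error when too few judges are eligible is a deliberate choice here: it surfaces a staffing gap before judging opens instead of quietly under-assigning an entry.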

Implement a multi-phase scoring system. Initial scoring should be individual, allowing judges to evaluate entries independently. A review/moderation phase can address discrepancies or questionable scores. Consider incorporating a second round of judging for finalists, with a different panel of judges to ensure objectivity. Throughout the process, maintain a clear audit trail of all scores and comments.
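One way to decide which entries go to the review phase is to flag those where independent scores disagree by more than a set amount. The sketch below assumes a single overall score per judge and a hypothetical threshold of 2 points; both are illustrative choices, not a standard.

```python
def flag_discrepancies(scores, max_spread=2):
    """Return entries whose highest and lowest scores differ by more than max_spread.

    scores: dict of entry ID -> dict of judge name -> score (hypothetical shape)
    """
    flagged = {}
    for entry_id, by_judge in scores.items():
        values = list(by_judge.values())
        spread = max(values) - min(values)
        if spread > max_spread:
            flagged[entry_id] = spread
    return flagged


scores = {
    "E1": {"ana": 4, "chen": 5},
    "E2": {"ben": 1, "ana": 5},
}
flagged = flag_discrepancies(scores)
```

Here only `E2` is flagged, with a spread of 4. Flagged entries are candidates for discussion, not automatic rescoring; a large spread can reflect a legitimate difference in expert opinion.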

Minimize bias by clearly communicating expectations to judges. Provide detailed instructions on how to use the platform and interpret the scoring rubrics. Emphasize the importance of focusing solely on the established criteria and avoiding personal preferences. The final step is transparent results tabulation and communication to participants. Ensure the process for handling ties or disputes is clearly defined upfront.

- Entry Submission: Clear requirements and deadlines.

- Judge Assignment: Based on expertise and conflict checks.

- Scoring Phases: Individual, review, and potentially finalist rounds.

- Results Tabulation: Transparent and documented.
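The tabulation step can be sketched as follows, assuming each entry's result is the mean of its judges' scores. Tied entries share a rank, so a tie shows up in the results and can be resolved by whatever rule you published in advance. The function name and data shape are hypothetical.

```python
from statistics import mean


def tabulate(scores):
    """Rank entries by average score; tied entries share the same rank.

    scores: dict of entry ID -> list of judge scores (hypothetical shape)
    """
    averages = {entry: round(mean(vals), 2) for entry, vals in scores.items()}
    # Highest average first; entry ID only makes the listing order stable.
    ordered = sorted(averages.items(), key=lambda item: (-item[1], item[0]))
    results = []
    for position, (entry, avg) in enumerate(ordered, start=1):
        if results and results[-1]["average"] == avg:
            rank = results[-1]["rank"]  # same average, same rank
        else:
            rank = position
        results.append({"entry": entry, "average": avg, "rank": rank})
    return results


results = tabulate({"E1": [4, 5], "E2": [3, 3], "E3": [5, 4]})
```

In this example `E1` and `E3` both average 4.5 and share rank 1, while `E2` takes rank 3. Keeping the tie explicit avoids letting sort order pick a winner by accident.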

Rubrics are the only way to stay fair

Well-defined scoring rubrics are the bedrock of objective judging. They provide a consistent framework for evaluating entries, reducing subjectivity and supporting fairness. A vague rubric can be worse than no rubric at all: it gives an appearance of rigor while inviting inconsistent application and accusations of bias. Invest the time to create rubrics whose criteria are specific, measurable, and relevant to the goals of your competition.

There are two main types of rubrics: holistic and analytic. Holistic rubrics provide an overall impression score, while analytic rubrics break down the evaluation into specific criteria. Analytic rubrics are generally preferred for competitions, as they provide more detailed feedback and allow for a more nuanced assessment. Choose the rubric type that best suits the nature of your competition.

For an art contest, criteria might include creativity, technique, composition, and originality. A business pitch competition might evaluate market viability, financial projections, and presentation quality. A scientific abstract review might focus on methodology, results, and clarity of writing. Each criterion should have a clear scoring scale, with a description of what each score represents. For example, use a scale of 1 to 5, where 1 means 'poor' and 5 means 'excellent', and describe what work at each level looks like.

- Originality: How much the idea pushes boundaries.

- Technique: Skill and craftsmanship.

- Market Viability: Potential for success.

- Methodology: Soundness of research approach.
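To make the analytic approach concrete, here is a minimal sketch of a weighted rubric for the art contest example, using the 1 to 5 scale. The weights are invented for illustration; the right weighting depends on what your competition values.

```python
# Hypothetical weights for an art contest; they must sum to 1.
RUBRIC = {
    "creativity": 0.3,
    "technique": 0.3,
    "composition": 0.2,
    "originality": 0.2,
}


def weighted_score(ratings, rubric=RUBRIC, scale=(1, 5)):
    """Combine per-criterion ratings into one score on the same scale."""
    low, high = scale
    if set(ratings) != set(rubric):
        raise ValueError("Ratings must cover exactly the rubric criteria")
    for criterion, value in ratings.items():
        if not low <= value <= high:
            raise ValueError(f"{criterion} must be between {low} and {high}")
    return round(sum(rubric[c] * ratings[c] for c in rubric), 2)


score = weighted_score(
    {"creativity": 5, "technique": 4, "composition": 3, "originality": 4}
)
```

Rejecting incomplete or out-of-range ratings at entry time is cheaper than discovering a missing criterion during tabulation.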

Judging Platform Considerations

- Zoom – Utilize breakout rooms for individual judging sessions and ensure all participants have stable internet connections. Consider Zoom’s webinar functionality for larger events with presentations.

- Google Workspace (Docs, Sheets, Forms) – Leverage Google Docs for collaborative rubric completion and feedback. Google Sheets allows for efficient data aggregation and scoring. Google Forms can streamline initial submission collection.

- Microsoft Teams – Similar to Zoom, Teams offers meeting and breakout room capabilities, alongside integrated file sharing and collaboration features. Consider its integration with Microsoft Office applications.

- Qualtrics – For more complex competitions requiring detailed survey-style judging, Qualtrics provides robust survey design and analysis tools, including scoring and reporting features.

- Slack – Facilitate real-time communication between judges and organizers using Slack channels. Create dedicated channels for questions, technical support, and announcements.

- OBS Studio – If live streaming of judging or awards ceremonies is planned, OBS Studio is free, open-source software for video recording and live streaming.

- Discord – A popular platform for community building, Discord can be used to create a dedicated space for judges to connect, share resources, and ask questions.

Maintaining Integrity: Preventing Cheating & Bias

Maintaining integrity in a remote setting presents unique challenges. Secure entry submission is paramount. Implement measures to prevent plagiarism, such as requiring originality reports or using plagiarism detection software. Consider watermarking submissions to discourage unauthorized sharing. Timestamped submissions can also help establish authenticity.
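Timestamping can be as simple as recording a content hash alongside the time a file was received. The sketch below uses Python's standard `hashlib` and `datetime` modules; the `receipt` and `is_unchanged` helpers are hypothetical names. Note that a timestamp recorded by your own system shows when a file arrived and that it has not changed since, not who created it.

```python
import hashlib
from datetime import datetime, timezone


def receipt(entry_id, content, received_at=None):
    """Record a SHA-256 fingerprint and a UTC timestamp for a submission."""
    received_at = received_at or datetime.now(timezone.utc)
    return {
        "entry_id": entry_id,
        "sha256": hashlib.sha256(content).hexdigest(),
        "received_at": received_at.isoformat(),
    }


def is_unchanged(record, content):
    """Check that the file still matches the fingerprint taken at submission."""
    return hashlib.sha256(content).hexdigest() == record["sha256"]


record = receipt("E1", b"final submission bytes")
```

Storing these records somewhere judges and entrants cannot edit gives you a simple audit trail if a submission is later disputed.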

Mitigating bias requires a multi-faceted approach. Blind judging is essential, concealing competitor identities from judges. Diverse judging panels, representing a range of backgrounds and perspectives, can help reduce unconscious bias. Clearly defined conflict-of-interest policies are also crucial. Proctoring software exists, but its effectiveness varies greatly with the competition type, and it may not be appropriate for every situation.

Implement a clear process for reporting and investigating suspected cheating or bias. Encourage judges to flag any concerns they may have. A transparent investigation process can help maintain trust and credibility. It’s also a good idea to state in the competition rules that any form of cheating will result in disqualification.

- Secure Submission: Prevent plagiarism and unauthorized access.

- Blind Judging: Conceal competitor identities.

- Diverse Panels: Reduce unconscious bias.

- Conflict-of-Interest Policies: Ensure impartiality.
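If your platform lacks built-in blind judging, the core idea can be approximated by replacing names with opaque IDs and keeping the lookup key away from judges. This is a minimal sketch with hypothetical field names; it does not strip identifying details inside the files themselves, such as signatures or embedded metadata.

```python
import secrets


def anonymize(entries):
    """Split entries into a judge-facing list and an organizer-only key.

    entries: list of dicts with 'name', 'title', and 'file' (hypothetical shape)
    """
    blind, key = [], {}
    for entry in entries:
        blind_id = f"entry-{secrets.token_hex(4)}"
        while blind_id in key:  # regenerate in the unlikely case of a collision
            blind_id = f"entry-{secrets.token_hex(4)}"
        key[blind_id] = entry["name"]
        # Judges see only the opaque ID, the title, and the file.
        blind.append({"id": blind_id, "title": entry["title"], "file": entry["file"]})
    return blind, key


blind, key = anonymize([
    {"name": "Sam Rivera", "title": "Harbor at Dusk", "file": "a.png"},
    {"name": "Lee Park", "title": "Static", "file": "b.png"},
])
```

Random IDs are used instead of sequential numbers so that the ID itself reveals nothing about submission order.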

Beyond the Score: Gathering Qualitative Feedback

Judging isn’t solely about assigning a numerical score. Qualitative feedback provides valuable insights for participants, helping them understand their strengths and weaknesses. Incorporate open-ended questions into the judging process, allowing judges to provide detailed comments on each entry. Encourage constructive criticism, focusing on specific areas for improvement.

Virtual judging can actually enhance the quality of feedback. Judges who review entries on their own schedule often have more time to formulate thoughtful responses, and most judging platforms allow detailed written comments. This can be a significant advantage over in-person events, where time constraints often limit how much feedback judges can give. Consider using a standardized feedback template to ensure consistency.

The goal is to provide participants with actionable feedback that will help them grow and improve. Avoid vague or overly critical comments. Focus on specific aspects of the entry and offer concrete suggestions for improvement. A well-crafted feedback report can be just as valuable as the final score.
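A standardized template can be enforced with very little structure. The sketch below is one hypothetical shape: it requires at least one strength and one suggestion before a report can be produced, which nudges judges away from score-only or purely critical feedback.

```python
from dataclasses import dataclass


@dataclass
class Feedback:
    entry_id: str
    strengths: list[str]
    improvements: list[str]

    def render(self) -> str:
        """Produce a plain-text report, refusing incomplete feedback."""
        if not self.strengths or not self.improvements:
            raise ValueError("Provide at least one strength and one improvement")
        lines = [f"Feedback for {self.entry_id}", "Strengths:"]
        lines += [f"- {item}" for item in self.strengths]
        lines.append("Suggestions for improvement:")
        lines += [f"- {item}" for item in self.improvements]
        return "\n".join(lines)


report = Feedback(
    entry_id="E1",
    strengths=["Clear composition"],
    improvements=["Tighten the closing section"],
).render()
```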
