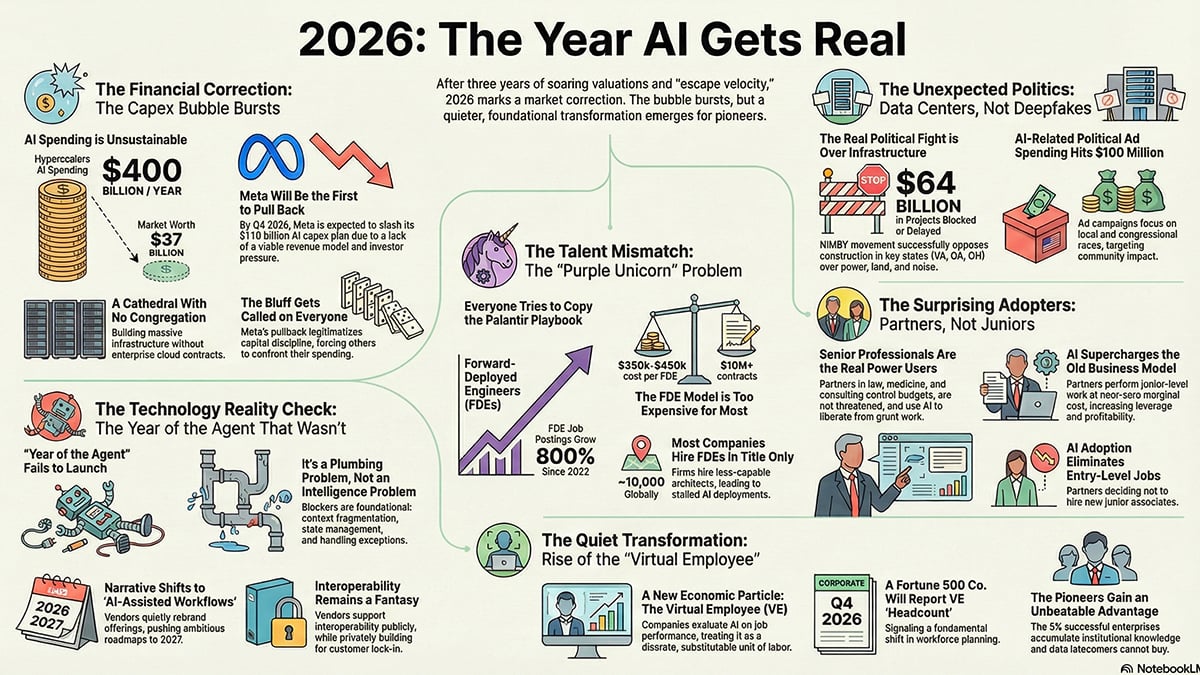

The shift toward AI judging

For a long time, competition judging has relied heavily on human expertise. That's understandable, given the subjective nature of many contests. However, this approach has its challenges. Finding enough qualified judges can be difficult and expensive. Even with the best intentions, unconscious biases can creep into evaluations and undermine fairness. And in events with a large volume of submissions, such as coding competitions or large-scale art shows, judges can be overwhelmed, which leads to rushed assessments.

AI is increasingly becoming a standard part of the judging process. Organizers use it to flag plagiarism, screen entries, and generate baseline scores. The software is now reliable enough to assist in high-stakes contests and affordable enough for smaller events.

It's natural to feel some anxiety about AI taking on roles traditionally held by humans. I think it's important to frame AI not as a replacement for human judges but as a powerful tool to augment their abilities. AI can handle repetitive tasks, identify potential issues, and provide data-driven insights, freeing human judges to focus on the more nuanced aspects of evaluation. The goal isn't to eliminate human judgment; it's to improve it.

Right now, AI integration is furthest along in areas where objective criteria matter, or where handling a large volume of submissions is a major challenge. This includes coding competitions, writing contests, science fairs, and even visual arts competitions where style or technical skill can be assessed algorithmically. Expect this trend to continue, with AI finding applications in an even wider range of competitive events.

Essential features for your contest

Not all AI judging features are created equal. The value of a particular feature depends heavily on the type of competition you're running. It’s important to understand what each feature does and how it can benefit your evaluation process.

Sentiment analysis is particularly useful for subjective categories like creative writing, poetry, or even design. AI can analyze the text (or visual elements) to assess the emotional tone a submission conveys. This can help judges identify submissions that evoke a specific emotion or resonate with the competition's theme. However, it's not a substitute for human interpretation, because context matters.
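As a rough illustration of the idea, the Python sketch below scores a text's tone with a tiny word list. The `LEXICON` weights, the `tone_score` and `matches_theme` helpers, and the 0.3 tolerance are all hypothetical; production tools generally rely on trained language models rather than hand-made lists, and a sketch like this cannot detect irony or sarcasm, which is exactly why human interpretation still matters.

```python
import re

# Hypothetical, tiny lexicon for illustration only; real tools use trained
# models or large curated lexicons. Weights range from -1 (negative) to 1 (positive).
LEXICON = {
    "joy": 1.0, "hope": 0.8, "warm": 0.6, "bright": 0.5,
    "grief": -1.0, "lonely": -0.8, "cold": -0.5, "dark": -0.5,
}


def tone_score(text: str) -> float:
    """Return the mean lexicon weight of matched words, or 0.0 if none match."""
    words = re.findall(r"[a-z']+", text.lower())
    hits = [LEXICON[w] for w in words if w in LEXICON]
    return sum(hits) / len(hits) if hits else 0.0


def matches_theme(text: str, target: float, tolerance: float = 0.3) -> bool:
    """Check whether a submission's tone is close to the theme's intended tone."""
    return abs(tone_score(text) - target) <= tolerance
```

For example, `tone_score("A bright morning full of hope")` averages the weights for "bright" and "hope", giving about 0.65.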

Plagiarism detection is essential for academic contests, research grants, and any competition where originality is paramount. Tools like Turnitin (often integrated into these platforms) can compare submissions against a vast database of existing content. This helps ensure fairness and academic integrity. It’s important to note that plagiarism detection isn’t foolproof; it can sometimes flag false positives.
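Commercial checkers compare entries against large external databases, but the core idea of overlap detection can be sketched with word n-grams ("shingles") and Jaccard similarity. This minimal Python example only compares a contest's submissions against each other; the function names and the 0.5 threshold are assumptions, and a flagged pair is a prompt for human review, not proof of plagiarism.

```python
import re
from itertools import combinations


def shingles(text: str, n: int = 3) -> set[tuple[str, ...]]:
    """Split text into overlapping word n-grams ("shingles")."""
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: size of the intersection over size of the union."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def flag_similar(submissions: dict[str, str], threshold: float = 0.5, n: int = 3):
    """Return (id_a, id_b, similarity) for every pair at or above the threshold."""
    grams = {sid: shingles(text, n) for sid, text in submissions.items()}
    flagged = []
    for a, b in combinations(sorted(grams), 2):
        score = jaccard(grams[a], grams[b])
        if score >= threshold:
            flagged.append((a, b, score))
    return flagged
```

Two sentences that differ only in their last word share 6 of their 8 distinct trigrams, so they score 0.75 and get flagged. Comparing every pair grows quadratically with the number of entries; at larger scale, MinHash is a standard way to approximate Jaccard similarity cheaply.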

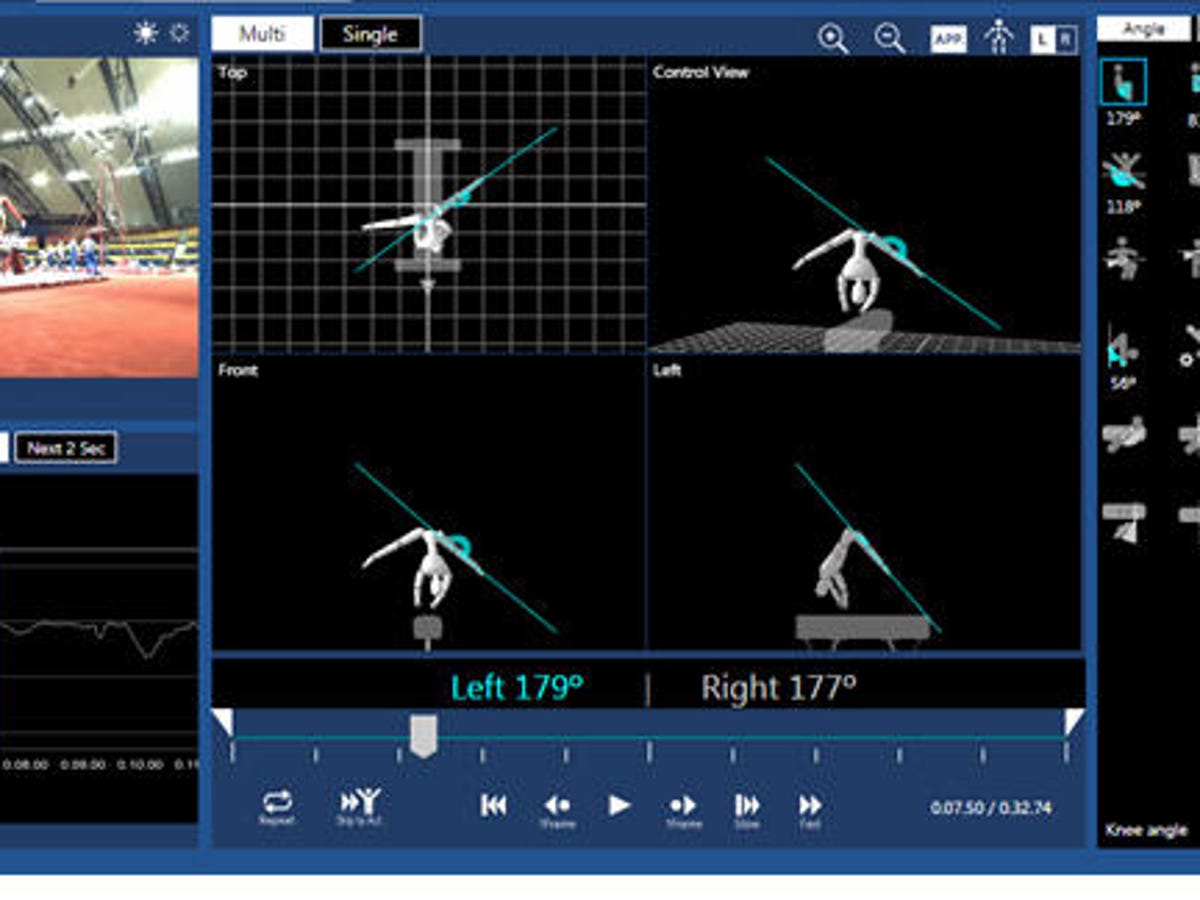

Style analysis can be helpful for assessing the technical skill or artistic merit of submissions. For example, in a coding competition, AI can analyze the code for efficiency, readability, and adherence to coding standards. In an art competition, AI can analyze images for composition, color balance, and technical execution. This is where explainability becomes crucial: you need to understand why the AI is assigning a particular score.
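For the coding-competition case, explainable style analysis can be as simple as attaching a reason to every deduction. The Python sketch below uses only the standard library's `ast` module. The two checks (the 79-character line limit that PEP 8 recommends, and missing docstrings) and the 10-point penalty per issue are illustrative assumptions, not any platform's actual rubric.

```python
import ast

MAX_LINE = 79  # PEP 8's recommended maximum line length


def style_report(source: str) -> tuple[float, list[str]]:
    """Score Python source from 0 to 100 and explain every deduction."""
    reasons = []
    # Check 1: overly long lines.
    for lineno, line in enumerate(source.splitlines(), start=1):
        if len(line) > MAX_LINE:
            reasons.append(f"line {lineno}: {len(line)} chars exceeds {MAX_LINE}")
    # Check 2: functions and classes without docstrings.
    tree = ast.parse(source)
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            if ast.get_docstring(node) is None:
                reasons.append(f"line {node.lineno}: '{node.name}' has no docstring")
    # Hypothetical penalty: 10 points per issue, floored at 0.
    score = max(0.0, 100.0 - 10.0 * len(reasons))
    return score, reasons
```

Because every point lost maps to a concrete line and rule, a judge or participant can check the score instead of taking it on trust.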

Automated scoring is the most ambitious AI feature. It involves training an AI model to evaluate submissions against predefined criteria. This can save a great deal of time and effort, but it requires a large dataset of labeled examples to train the model effectively. Even with a well-trained model, human oversight is still essential to ensure fairness and accuracy, and the AI should provide a justification for each score it assigns.
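One way to keep humans in the loop is to route uncertain or unexplained AI scores to a person, and to measure how closely the AI agrees with human judges on a shared sample. In the sketch below, the `AIScore` fields (including a model-reported `confidence`), the 0.7 cutoff, and the 0-10 scale are hypothetical; they do not describe any particular platform's API.

```python
from dataclasses import dataclass


@dataclass
class AIScore:
    entry_id: str
    score: float          # 0-10 on the contest's scale (assumed)
    confidence: float     # model's self-reported confidence, 0-1 (assumed)
    justification: str    # the model's explanation for the score


def needs_human_review(s: AIScore, min_confidence: float = 0.7) -> bool:
    """Route low-confidence or unexplained scores to a human judge."""
    return s.confidence < min_confidence or not s.justification.strip()


def mean_abs_error(ai: dict[str, float], human: dict[str, float]) -> float:
    """Average |AI - human| over entries scored by both: a simple agreement check."""
    shared = ai.keys() & human.keys()
    if not shared:
        raise ValueError("no entries scored by both AI and humans")
    return sum(abs(ai[k] - human[k]) for k in shared) / len(shared)
```

If the mean absolute error on a human-scored sample is large relative to your scoring scale, the model isn't ready to produce baseline scores without close supervision.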


Security, Bias, and Ethical Considerations

The use of AI in judging raises important security, bias, and ethical considerations, and it's crucial to address them proactively to ensure fairness, transparency, and accountability. Data security is paramount: the platform should protect sensitive data from unauthorized access with measures such as encryption in transit and at rest and role-based access controls. Make sure the platform complies with relevant data privacy regulations, such as GDPR or CCPA.
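Beyond platform-level safeguards, you can limit what judges and third-party AI services ever see. A common technique is pseudonymization with a keyed hash (HMAC), which gives each entrant a stable ID that can't be reversed without the secret key. The record fields (`email`, `title`, `body`) and the `entrant-` prefix below are hypothetical. Note that pseudonymized data can still count as personal data under GDPR, so this complements compliance work rather than replacing it.

```python
import hashlib
import hmac
import secrets


def make_key() -> bytes:
    """Generate a 32-byte secret key; store it separately from the judging data."""
    return secrets.token_bytes(32)


def pseudonymize(entrant_email: str, key: bytes) -> str:
    """Map an entrant's email to a stable, non-reversible ID using HMAC-SHA256."""
    normalized = entrant_email.strip().lower()
    digest = hmac.new(key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()
    return "entrant-" + digest[:16]  # 64 bits: short, with negligible collision risk


def strip_pii(record: dict, key: bytes) -> dict:
    """Return a copy of a submission that judges or an AI service can safely see."""
    return {
        "entrant_id": pseudonymize(record["email"], key),
        "title": record["title"],
        "body": record["body"],
    }
```

Because the same email always maps to the same ID under one key, organizers can still link scores back to entrants after judging, while judges never see the email itself.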

AI algorithms can be biased, reflecting biases present in the data they were trained on, which can lead to unfair or discriminatory outcomes. To mitigate bias, use diverse and representative training datasets, and regularly audit the AI's performance (for example, by comparing outcomes across participant groups) to identify and correct biases. Transparency is key: understand how the AI is making its decisions.
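A basic audit compares outcomes across participant groups. The Python sketch below computes each group's advancement rate and the ratio of the lowest rate to the highest, flagging ratios under 0.8, a rule of thumb borrowed from the "four-fifths rule" in US employment-selection guidelines. The group labels, function names, and threshold are assumptions; a low ratio is a signal to investigate, not proof of bias, and any group data must be collected and stored lawfully.

```python
from collections import defaultdict


def advancement_rates(results: list[tuple[str, bool]]) -> dict[str, float]:
    """Map (group_label, advanced?) pairs to the share of each group that advanced."""
    totals = defaultdict(int)
    advanced = defaultdict(int)
    for group, passed in results:
        totals[group] += 1
        advanced[group] += int(passed)
    return {g: advanced[g] / totals[g] for g in totals}


def impact_ratio(rates: dict[str, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


def audit(results: list[tuple[str, bool]], threshold: float = 0.8) -> bool:
    """Return True if the impact ratio is below the threshold and needs review."""
    return impact_ratio(advancement_rates(results)) < threshold
```

For instance, if 60% of group A's entries advance but only 30% of group B's, the ratio is 0.5 and the audit flags it for a closer look.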

Ethical considerations extend beyond bias. It’s important to ensure that the use of AI doesn’t undermine the integrity of the competition. Participants should be informed that AI is being used in the judging process. Provide a mechanism for appealing AI-driven decisions. Remember, AI should augment human judgment, not replace it entirely.

Consider the potential for adversarial attacks. Someone might try to manipulate the AI by submitting carefully crafted entries designed to exploit its weaknesses. Implement safeguards, for example screening entries before AI scoring and having a human review anything suspicious, and update the AI model regularly to address new threats.
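For AI judges built on language models, one known attack is hiding instructions in a submission, for example in invisible characters or in text addressed directly to the model. A simple pre-screen can catch the crudest attempts. In this Python sketch the phrase patterns are hypothetical and easy to evade, so a match should send an entry to a person rather than disqualify it automatically.

```python
import re
import unicodedata

# Hypothetical phrase list; real attacks vary, so treat hits as "needs a human look".
SUSPICIOUS_PATTERNS = [
    r"ignore (all |any )?(previous|prior) instructions",
    r"you are (now )?the judge",
    r"(give|award|assign) (this|me) (a )?(perfect|full|maximum) score",
]


def hidden_characters(text: str) -> list[str]:
    """Name invisible format characters (Unicode category Cf), e.g. zero-width spaces."""
    return [unicodedata.name(ch, hex(ord(ch))) for ch in text
            if unicodedata.category(ch) == "Cf"]


def screen_entry(text: str) -> list[str]:
    """List reasons an entry should be checked by a person before AI scoring."""
    reasons = [f"hidden character: {name}" for name in hidden_characters(text)]
    lowered = text.lower()
    for pattern in SUSPICIOUS_PATTERNS:
        if re.search(pattern, lowered):
            reasons.append(f"matched pattern: {pattern}")
    return reasons
```

An empty list means nothing obvious was found, not that the entry is safe; this screen only complements human review.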

- Ensure robust data security and privacy.

- Use diverse and representative training data.

- Regularly audit for algorithmic bias.

- Be transparent about AI’s role in judging.

Platforms Worth a Closer Look

Of the platforms we discussed, Competitions.ai and Zenith Awards stand out for their innovative approaches to AI-powered judging. Competitions.ai's comprehensive suite of AI features (sentiment analysis, style analysis, and automated scoring) makes it a strong contender for competitions that require nuanced evaluation. Its ability to customize the AI model to specific criteria is a significant advantage.

Zenith Awards is particularly well-suited for creative competitions. Its AI-powered image analysis tools can assess technical quality, aesthetic appeal, and originality, which can be incredibly valuable for judging photography, art, and design contests. The platform's focus on visual content and its intuitive interface make it a user-friendly option for both judges and participants.

Judgify also deserves another mention. While it isn't solely focused on AI, its robust contest management features, combined with its scoring analysis tools, make it a solid choice for organizations that need a comprehensive solution. Its emphasis on security and compliance is a major selling point for competitions that handle sensitive data. It's a good all-rounder, particularly if you expect to need extensive support for event logistics beyond the judging phase.

Ultimately, the best platform for you will depend on your specific needs and budget. Carefully evaluate your requirements and compare the features and pricing of different platforms before making a decision. Don't be afraid to request demos or trials to get a feel for how the platforms work.

Scoring Method Comparison for AI-Powered Judging

| Scoring Method | Ease of Implementation | Suitability for Competition Type | Potential for Bias | Transparency |
|---|---|---|---|---|
| Weighted Scoring | Moderate - Requires defining criteria weights | Better for competitions with clearly defined, independent criteria (e.g., business plan competitions) | Moderate - Weight assignment can introduce subjective bias | High - Weights are explicitly defined, so each criterion's influence can be reviewed |
| Rubric-Based Scoring | Moderate - Requires detailed rubric creation | Versatile - Suitable for diverse competition types, especially creative or performance-based | Moderate - Rubric design influences scoring, and judges may interpret criteria differently | High - Clear criteria and descriptions make scores easier to understand |
| Pairwise Comparison | Lower - Can be complex to set up and manage with many entries | Better for competitions where direct comparison is meaningful (e.g., bracket-style tournaments, design competitions) | Higher - Relies on relative judgments, which can amplify initial biases | Moderate - The process reveals preferences, but the underlying reasons aren't always clear |
| Hybrid (Rubric + Weighted) | Lower - Requires designing both a rubric and a weighting scheme | Very Versatile - Combines the strengths of both methods and adapts to nearly any competition format | Moderate - Requires careful design to minimize bias from both rubric and weights | Moderate - Transparency depends on the clarity of both the rubric and the weighting scheme |
| AI-Assisted Rubric Scoring | Moderate - Requires initial rubric creation and AI training | Good for competitions with large numbers of submissions and well-defined criteria | Potentially Lower, but AI bias is a concern; requires careful model validation | Moderate - The AI's reasoning may not always be fully explainable |
| AI-Driven Pairwise Ranking | Moderate - Requires AI training and data input | Suitable for competitions focused on ranking and preference determination | Potentially Moderate - AI can reflect existing biases in the training data | Lower - The AI's decision-making process can be a "black box" |

This is a qualitative comparison. Confirm current product details in the official documentation before making implementation choices.
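Two of the methods in the table can be sketched briefly in Python. The first function implements the hybrid approach: a rubric level for each criterion, combined using criterion weights. The second implements pairwise comparison with Elo ratings, one common way (not the only one) to turn head-to-head judgments into a ranking. The criteria, weights, 1-4 rubric scale, and Elo parameters (`k=32`, starting rating 1500) are illustrative assumptions.

```python
# Hybrid rubric + weighted scoring. Criteria and weights are hypothetical; weights sum to 1.
WEIGHTS = {"originality": 0.4, "technique": 0.35, "presentation": 0.25}
RUBRIC_MAX = 4  # rubric levels run from 1 to 4 (assumed)


def weighted_score(ratings: dict[str, int]) -> float:
    """Convert per-criterion rubric levels into a 0-100 weighted score."""
    if set(ratings) != set(WEIGHTS):
        raise ValueError("ratings must cover exactly the weighted criteria")
    for level in ratings.values():
        if not 1 <= level <= RUBRIC_MAX:
            raise ValueError(f"rubric level {level} out of range")
    return 100 * sum(WEIGHTS[c] * ratings[c] / RUBRIC_MAX for c in WEIGHTS)


# Pairwise comparison via Elo ratings.
def elo_ranking(entries: list[str], comparisons: list[tuple[str, str]],
                k: float = 32.0, start: float = 1500.0) -> list[str]:
    """Rank entries from (winner, loser) judgments, processed in the given order."""
    ratings = {e: start for e in entries}
    for winner, loser in comparisons:
        # Expected probability that the winner would win, given current ratings.
        expected_win = 1 / (1 + 10 ** ((ratings[loser] - ratings[winner]) / 400))
        delta = k * (1 - expected_win)
        ratings[winner] += delta
        ratings[loser] -= delta
    return sorted(ratings, key=ratings.get, reverse=True)
```

Elo results depend on the order in which comparisons are processed. If that matters for your contest, a batch model such as Bradley-Terry fits all comparisons at once.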
