The shift toward AI judging

Competition judging is undergoing a significant transformation. For decades, the process relied heavily on human expertise, but now, artificial intelligence is rapidly becoming an integral part of how we evaluate everything from culinary creations to design submissions. This isn't about replacing judges—a fear I frequently encounter when speaking with contest organizers—but about augmenting their abilities and streamlining the entire process.

The benefits are considerable. AI-powered judging software promises increased speed, particularly when dealing with a high volume of entries. Consistency is another key advantage; algorithms apply scoring rubrics uniformly, minimizing the discrepancies that can arise from human subjectivity. Perhaps most importantly, AI has the potential to reduce bias, though that’s a complex issue we’ll address later.

However, anxieties remain valid. Concerns about the lack of nuance in algorithmic assessment, the potential for errors in data interpretation, and the "black box" nature of some AI systems are all legitimate. A system can be consistent while still being wrong. The ideal scenario, and what we're seeing emerge, is a hybrid approach where AI handles initial screening and data analysis, while human judges focus on the more subjective aspects of evaluation.

I've evaluated the current market to see which tools actually handle high-volume scoring without losing the nuance of human critique. Here is how the top platforms compare in 2026.

Seven platforms to consider

The market is crowded, but most platforms fall into two camps: enterprise-grade suites or specialized niche tools. These seven are the most reliable options right now.

Judgify is a well-established platform known for its comprehensive suite of tools. Beyond AI-assisted scoring, Judgify excels at contest planning, submissions management, and branding. They cater to a wide range of event types, from small local competitions to large-scale international awards. A key strength is their robust reporting and analytics, allowing organizers to track judging trends and identify areas for improvement.

Evalato positions itself as a dedicated awards management solution. They offer features like online judging software, abstract management, and compliance tools. Evalato’s platform is particularly strong in handling complex scoring rubrics and facilitating collaboration among judges. They emphasize security and data privacy, which is crucial for maintaining the integrity of the judging process.

Judging Hub stands out for its focus on flexibility and customization. They offer a modular platform that allows organizers to select the features they need, rather than being forced into a one-size-fits-all solution. This makes it a good choice for competitions with unique requirements. Their API allows for integration with other systems, which is a significant advantage.

AwardStage is a more streamlined platform, geared towards smaller competitions and awards programs. It offers essential features like online submissions, judging dashboards, and basic reporting. While it may lack the advanced capabilities of some of the other platforms, it’s a cost-effective option for organizations with limited budgets.

Competitions.io targets the esports and gaming industries, offering features specifically designed for these types of competitions. This includes bracket management, live scoring, and integration with streaming platforms. Their AI-powered features focus on detecting cheating and ensuring fair play.

Scorechain is a newer entrant to the market, focusing on simplicity and ease of use. They offer a clean, intuitive interface and a streamlined workflow. Scorechain’s AI features are currently limited, but they are actively developing new capabilities. They are particularly strong in handling image and video submissions.

Finally, Qualtrics—primarily known for survey software—has expanded into the judging space. They leverage their existing data analysis capabilities to provide powerful insights into judging patterns. However, their platform may require more customization to meet the specific needs of a competition.

- Judgify: Best for large-scale reporting and end-to-end event management.

- Evalato: Awards management focused, complex rubrics, security-conscious.

- Judging Hub: Flexible, customizable, API integration.

- AwardStage: Streamlined, cost-effective, smaller competitions.

- Competitions.io: Esports/gaming specific, cheating detection.

- Scorechain: Simple, intuitive interface, image/video focus.

- Qualtrics: Data analysis, requires customization.

AI-Powered Judging Software Platforms: A Comparative Analysis - 2026

| Platform Name | Best For | Key Strengths | Notable Weaknesses | Pricing Model |
|---|---|---|---|---|
| Judgify | Broad range, particularly events with complex scoring | Robust feature set for contest lifecycle management. Strong emphasis on security and compliance. Supports diverse event types. | Can be complex to set up initially. May have feature overload for simpler contests. Reporting customization limited. | Tiered, based on event scale and features |
| Evalato | Award programs and competitions requiring detailed judge feedback | User-friendly interface. Focus on streamlined judging workflow. Good support for multiple judging rounds. | Less comprehensive event planning tools compared to Judgify. Limited branding options. Scalability concerns for very large events. | Per-submission, with volume discounts |
| Competition HQ | Science fairs and academic competitions | Specialized features for STEM judging. Strong support for rubric-based evaluation. Facilitates mentor assignment. | Interface may appear dated. Limited integration with third-party tools. Reporting functionality is basic. | Subscription-based, tiered by number of submissions |
| AwardForce | Film festivals and creative arts competitions | Designed for media-rich submissions. Strong support for video and image judging. Collaborative judging features. | Can be expensive for smaller competitions. Limited support for non-media submissions. Complex permission management. | Per-event, with add-ons for extra features |
| Submittable | Writing contests and grant applications | Excellent submission management capabilities. Flexible workflow customization. Strong integration with other tools. | Judging features are less specialized than dedicated platforms. Can be costly for large-scale judging. Reporting requires significant configuration. | Tiered, based on submissions and features |
| Qualtrics | Competitions requiring extensive data analysis and survey integration | Powerful survey and data analytics tools. Highly customizable. Integrates with a wide range of platforms. | Requires significant technical expertise to set up and manage. Not specifically designed for contest judging. Can be expensive. | Enterprise-level, quote-based pricing |
| Formstack | Simple contests and quick evaluations | Easy-to-use form builder. Streamlined submission process. Affordable for small-scale events. | Limited judging features. Basic reporting capabilities. Not suitable for complex scoring schemes. | Subscription-based, tiered by form submissions |

This is a qualitative comparison only. It covers Judgify, Evalato, and Qualtrics from the list above alongside four adjacent platforms. Confirm current product details in each vendor's official documentation before committing to a platform.

Scoring and analytics

The true power of AI judging platforms lies in their scoring and analytics capabilities. Most platforms support a variety of scoring rubrics, from simple numerical scales to complex weighted criteria. The key is how easily judges can apply these rubrics and provide meaningful feedback. I’ve seen some platforms where the interface makes it cumbersome to assign scores, leading to rushed evaluations and lower quality results.
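
To make the idea of weighted criteria concrete, here is a minimal sketch of how a weighted rubric can be turned into a single 0-100 score. The criterion names, weights, and the `weighted_score` helper are illustrative assumptions, not the scoring method of any platform discussed here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float      # relative importance; weights need not sum to 1
    max_points: float  # top of the scale for this criterion


def weighted_score(rubric: list[Criterion], points: dict[str, float]) -> float:
    """Return a 0-100 score from per-criterion points and rubric weights."""
    total_weight = sum(c.weight for c in rubric)
    if total_weight <= 0:
        raise ValueError("rubric weights must sum to a positive number")
    score = 0.0
    for c in rubric:
        awarded = points[c.name]
        if not 0 <= awarded <= c.max_points:
            raise ValueError(f"{c.name}: {awarded} is outside 0..{c.max_points}")
        # Normalize to the criterion's scale, then apply its share of the weight.
        score += (awarded / c.max_points) * (c.weight / total_weight)
    return round(score * 100, 2)


# Hypothetical rubric for illustration only.
rubric = [
    Criterion("originality", weight=3, max_points=10),
    Criterion("execution", weight=2, max_points=10),
    Criterion("presentation", weight=1, max_points=5),
]
print(weighted_score(rubric, {"originality": 8, "execution": 7, "presentation": 4}))
```

Normalizing each criterion to its own scale before weighting keeps a 5-point criterion from being undercounted next to a 10-point one.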

Platforms like Judgify and Evalato offer advanced features like blind judging, where entrant identities are concealed from judges. This helps to minimize bias. They also allow for multiple rounds of judging, with scores aggregated and analyzed to identify outliers and ensure consistency. The ability to easily flag entries for review is also crucial.
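
As a rough illustration of score aggregation and outlier identification, the sketch below uses the panel median as the aggregate and flags any judge whose score sits far from it. The 15-point threshold and the function names are assumptions chosen for the example; real platforms may aggregate and flag differently.

```python
from statistics import median


def panel_score(scores: dict[str, float]) -> float:
    """Aggregate one entry's scores; the median resists a single extreme score."""
    return median(scores.values())


def flag_outliers(scores: dict[str, float], threshold: float = 15.0) -> list[str]:
    """Return judges whose score differs from the panel median by more than threshold."""
    mid = panel_score(scores)
    return sorted(judge for judge, s in scores.items() if abs(s - mid) > threshold)


# Hypothetical scores (0-100) for a single entry.
entry_scores = {"judge_a": 78, "judge_b": 82, "judge_c": 45, "judge_d": 80}
print(panel_score(entry_scores))    # median of the four scores
print(flag_outliers(entry_scores))  # judges to route to human review
```

A flagged score is a prompt for a second look, not proof that the judge was wrong.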

Providing constructive feedback is another important aspect of the judging process. The best platforms allow judges to leave comments directly on submissions, highlighting specific strengths and weaknesses. Some platforms even offer AI-generated feedback suggestions, which can be helpful but should always be reviewed by a human judge. I'm skeptical of fully automated feedback; it often lacks the nuance and context of a human assessment.

Reporting and analytics are essential for understanding judging trends and identifying areas for improvement. Platforms should provide reports on score distributions, inter-judge reliability, and common themes in feedback. This data can be used to refine scoring rubrics, improve the judging process, and provide valuable insights to entrants.
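
Inter-judge reliability can be measured in several ways. One common statistic for two judges assigning categorical ratings is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is a plain implementation of that formula; the rating bands are made up for illustration, and nothing here reflects how a specific platform reports reliability.

```python
from collections import Counter


def cohens_kappa(ratings_a: list[str], ratings_b: list[str]) -> float:
    """Cohen's kappa for two judges rating the same entries with categorical labels."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("both judges must rate the same non-empty set of entries")
    n = len(ratings_a)
    # Observed agreement: share of entries where both judges chose the same label.
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # Chance agreement: product of each judge's label frequencies, summed over labels.
    counts_a, counts_b = Counter(ratings_a), Counter(ratings_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both judges used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical rating bands for six entries.
judge_1 = ["high", "high", "mid", "low", "mid", "high"]
judge_2 = ["high", "mid", "mid", "low", "mid", "high"]
print(round(cohens_kappa(judge_1, judge_2), 3))
```

Values near 1 indicate strong agreement, and values near 0 indicate agreement no better than chance, which is often a sign that the rubric needs clearer wording.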

- Support for various scoring rubrics (numerical, weighted, etc.)

- Blind judging capabilities to minimize bias

- Multiple rounds of judging with score aggregation

- Easy-to-use interface for providing feedback

- AI-generated feedback suggestions (with human review)

- Comprehensive reporting and analytics

Integration and workflow

Seamless integration with existing contest management systems is critical for a smooth workflow. Many platforms offer APIs that allow developers to connect them to other applications, such as submission platforms, payment gateways, and email marketing tools. This avoids manual data transfer, which is both error-prone and time-consuming.

The typical workflow looks like this: entrants submit their work through a designated platform, the submissions are automatically imported into the judging software, judges are assigned to evaluate entries, scores and feedback are collected, and finally, results are compiled and announced. The more automated this process is, the more efficient it will be.
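
The assignment and compilation steps of that workflow can be sketched in a few lines. This is a simplified model under stated assumptions: judges are assigned round-robin, each entry's final score is the mean of its judges' scores, and the function names are hypothetical rather than taken from any platform's API.

```python
from itertools import cycle
from statistics import mean


def assign_judges(entry_ids: list[str], judges: list[str], per_entry: int = 2) -> dict[str, list[str]]:
    """Round-robin assignment so each entry gets per_entry distinct judges."""
    if per_entry > len(judges):
        raise ValueError("not enough judges for the requested coverage")
    rotation = cycle(judges)
    return {entry: [next(rotation) for _ in range(per_entry)] for entry in entry_ids}


def compile_results(scores: dict[tuple[str, str], float]) -> list[tuple[str, float]]:
    """Average each entry's scores and rank entries from highest to lowest."""
    by_entry: dict[str, list[float]] = {}
    for (entry, _judge), score in scores.items():
        by_entry.setdefault(entry, []).append(score)
    ranked = [(entry, round(mean(vals), 2)) for entry, vals in by_entry.items()]
    return sorted(ranked, key=lambda row: row[1], reverse=True)


# Hypothetical entries, judges, and collected scores.
assignments = assign_judges(["E1", "E2", "E3"], ["ana", "ben", "cy"], per_entry=2)
collected = {
    ("E1", "ana"): 80.0, ("E1", "ben"): 90.0,
    ("E2", "cy"): 70.0, ("E2", "ana"): 75.0,
    ("E3", "ben"): 88.0, ("E3", "cy"): 84.0,
}
print(assignments)
print(compile_results(collected))
```

In practice the import and announcement steps on either side of this would go through whatever integrations the chosen platform actually provides.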

Judgify and Judging Hub excel in this area, offering robust API support and integrations with popular contest management systems. Evalato also provides integration options, but they may require more customization. Smaller platforms like AwardStage may have limited integration capabilities. It’s important to assess your organization’s technical resources and integration needs before making a decision.

Consider also the mobile accessibility of the platform. Judges should be able to evaluate submissions on a variety of devices, including laptops, tablets, and smartphones. A mobile-friendly interface is particularly important for competitions that involve on-site judging.

Bias detection and mitigation

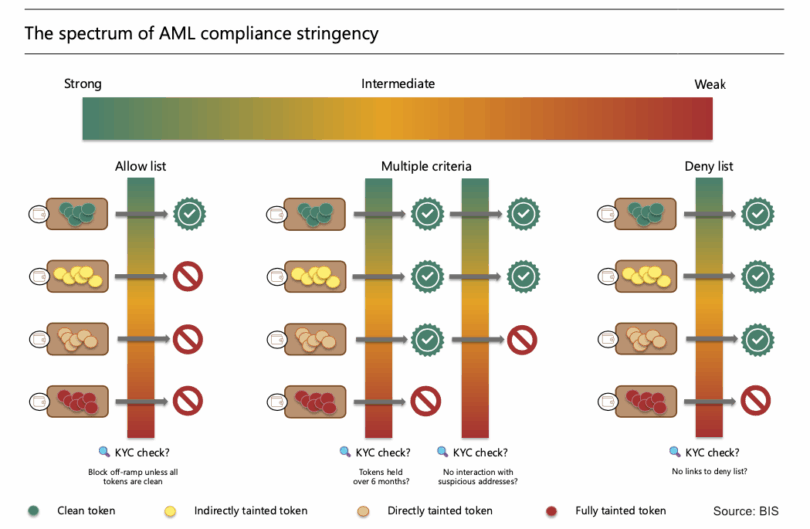

Addressing bias in judging is arguably the most critical challenge facing AI-powered platforms. While AI can help to reduce certain types of bias, it’s not a silver bullet. Algorithms are trained on data, and if that data reflects existing biases, the AI will perpetuate them. This is a well-documented problem in the field of artificial intelligence.

Most platforms employ techniques like blind judging, data anonymization, and algorithmic fairness checks to mitigate bias. However, these measures are not foolproof. It’s essential to carefully evaluate the algorithms used by each platform and understand their potential limitations. Some platforms claim to use "fairness-aware" algorithms, but the specifics are often opaque.
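
Since vendors rarely publish how their fairness checks work, organizers can run a simple check of their own after judging. The sketch below compares mean scores across entrant groups and reports the largest gap. It is an assumption-laden illustration, not a description of any platform's algorithm, and the group labels are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def group_score_gap(records: list[tuple[str, float]]) -> tuple[dict[str, float], float]:
    """Return the mean score per group and the gap between the highest and lowest mean."""
    by_group: dict[str, list[float]] = defaultdict(list)
    for group, score in records:
        by_group[group].append(score)
    means = {group: round(mean(vals), 2) for group, vals in by_group.items()}
    gap = round(max(means.values()) - min(means.values()), 2)
    return means, gap


# Hypothetical (group, final score) pairs gathered after judging.
records = [
    ("region_a", 80.0), ("region_a", 70.0),
    ("region_b", 60.0), ("region_b", 66.0),
]
means, gap = group_score_gap(records)
print(means, gap)
```

A large gap does not prove bias, since groups can differ in entry quality, but it tells organizers where to look more closely.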

Data anonymization involves removing identifying information from submissions, such as the entrant’s name, location, and affiliation. This helps to prevent judges from being influenced by factors unrelated to the quality of the work. However, even anonymized data can sometimes reveal clues about the entrant’s identity.
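
A minimal sketch of that step, assuming each submission is a dictionary with an `email` field that can serve as a stable identifier. The field names and the `anonymize` helper are hypothetical. Identifying fields are dropped and replaced with an opaque entry code so organizers can still map results back to entrants.

```python
import hashlib

# Fields assumed to identify the entrant; adjust to the actual submission form.
IDENTIFYING_FIELDS = {"name", "email", "location", "affiliation"}


def anonymize(submission: dict, salt: str) -> dict:
    """Return a copy safe to show judges, with identity replaced by an opaque code."""
    blind = {k: v for k, v in submission.items() if k not in IDENTIFYING_FIELDS}
    # Salted hash: stable for the organizer, not reversible by a judge.
    digest = hashlib.sha256((salt + submission["email"]).encode("utf-8")).hexdigest()
    blind["entry_code"] = digest[:10]
    return blind


# Hypothetical submission record.
submission = {
    "name": "Jordan Lee",
    "email": "jordan@example.com",
    "location": "Lisbon",
    "affiliation": "Studio North",
    "title": "Harbor at Dusk",
    "file": "harbor.jpg",
}
print(anonymize(submission, salt="contest-2026"))
```

This only strips metadata. A signature on an image or a name mentioned in an essay would still get through, which is why a manual check of the work itself remains necessary.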

Ultimately, the responsibility for ensuring fairness lies with the contest organizers. They should carefully design the scoring rubrics, train the judges, and monitor the judging process for any signs of bias. AI can be a valuable tool in this effort, but it should not be seen as a replacement for human oversight. I’ve seen too many competitions rely solely on the algorithm, leading to questionable results.

- Blind judging

- Data anonymization

- Algorithmic fairness checks

- Human oversight and monitoring
