Judging in 2026: The AI Shift

Competition judging is changing, and it's happening fast. For many judges, the idea of Artificial Intelligence stepping into the process feels… unsettling. There’s a worry that the human element – the nuance, the subjective appreciation – will be lost. I get that. But the reality is, AI isn't about replacing judges; it’s about equipping them with tools to be more effective, more consistent, and more fair.

Think of it like this: AI can handle the heavy lifting – the initial screening for plagiarism, the identification of potential biases, the consistent application of rubrics. This frees up judges to focus on what they do best: evaluating the creative merit, the technical skill, and the overall impact of each submission. It’s a collaboration, not a takeover.

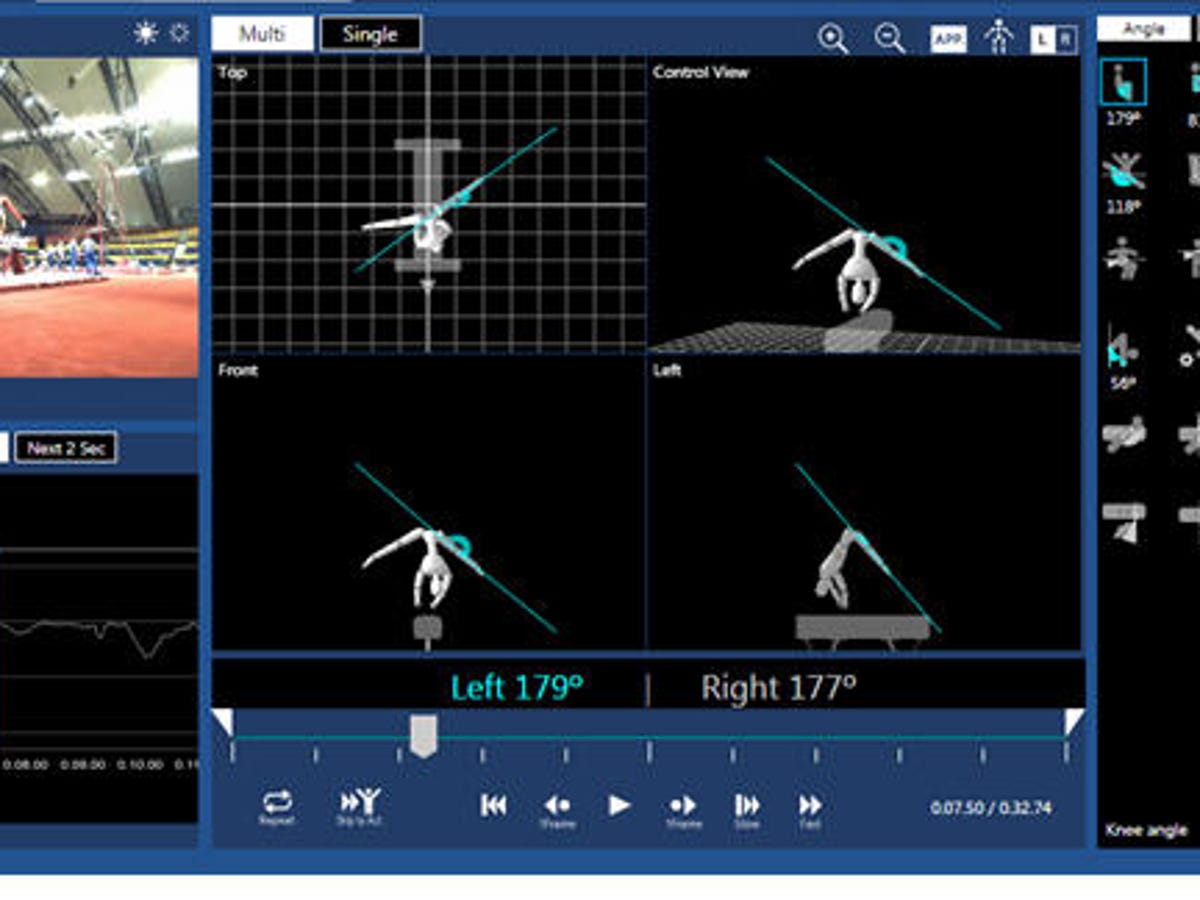

We’re already seeing AI make inroads in several competition areas. Art and design contests are using AI to analyze composition and color palettes. Writing competitions are employing AI to check for originality and assess readability. Coding challenges are leveraging AI to evaluate code efficiency and identify bugs. Science fairs are using AI to analyze data and identify trends. And this is just the beginning. Expect to see AI play a bigger role in everything from music competitions to business plan pitches in the coming years.

This guide will walk you through the best AI-powered judging platforms available in 2026. We’ll look at features, pricing, integration options, and everything you need to know to make an informed decision. Whether you’re an organizer looking to streamline your competition or a judge wanting to improve your efficiency, this is your starting point.

The Top 7 AI Judging Platforms

The AI judging platform market is evolving quickly, with several key players emerging as leaders. This rundown covers seven of the best platforms as of late 2026, focusing on features that directly benefit judges and organizers and explaining their impact on the judging process.

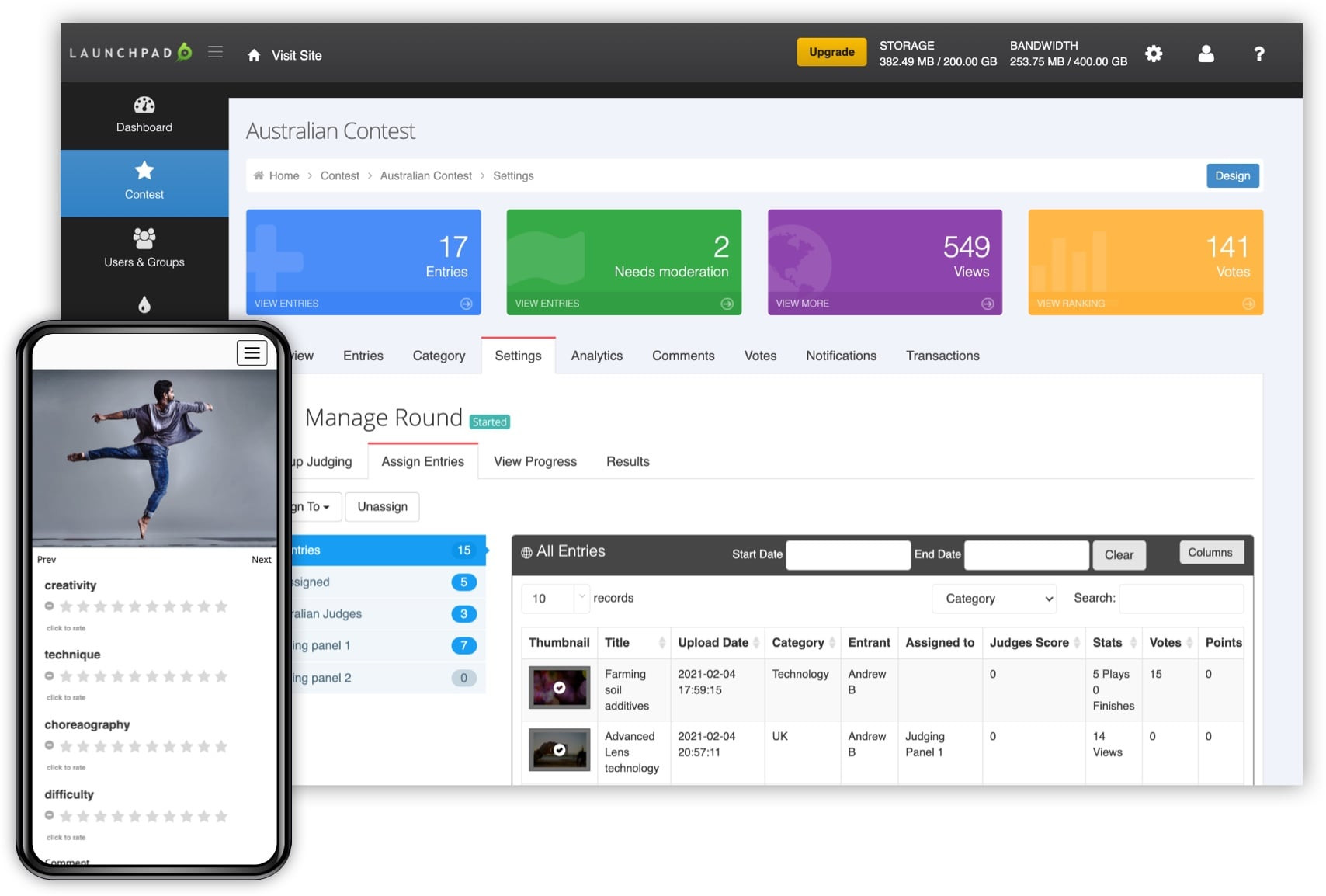

Judgify is a strong contender with a comprehensive suite of tools for contest planning and reporting. Their focus on branding and promotion is a notable differentiator. For judges, 'Advanced Scoring and Reporting' features, including data visualizations and pattern identification, are valuable. They also emphasize security and compliance for sensitive submissions.

Evalato specializes in award management, with a strong focus on online judging software, customizable workflows, and secure submission handling. A key benefit for judges is blind judging, which hides submitter identity to reduce bias. The platform also manages judge assignments and tracks progress.

Judging Hub offers judging dashboards, scoring rubrics, and communication tools. Their emphasis on testimonials and case studies suggests a focus on customer satisfaction. Organizers will find the 'Awards Management' features appealing for managing the entire awards process. The platform also has a robust API for system integration.

Scorecast, a newer entrant, focuses heavily on bias detection using AI algorithms to analyze submissions for potential biases related to gender, ethnicity, or other demographic factors. While this is a powerful feature, AI is only as unbiased as its training data, making human oversight essential. The platform also offers automated scoring and feedback.

CritiqueAI specializes in creative fields like art, writing, and music, with AI algorithms trained to understand aesthetic qualities and provide constructive feedback. Judges can use CritiqueAI to quickly identify strengths and weaknesses and provide detailed critiques. The platform also supports collaborative judging.

CodeReviewAI is designed for coding competitions, automatically analyzing code for quality, efficiency, and security vulnerabilities. Judges can use it to quickly identify potential issues and ensure submissions meet standards. The platform integrates with code repositories like GitHub and GitLab.

Awardify offers a streamlined experience for smaller competitions. While it may lack advanced features, it provides basic scoring, judging assignment, and reporting tools, making it a good option for budget-conscious organizations. It's a simpler, effective solution for specific use cases.

Scoring Methods: AI’s Role

AI is changing how we score competitions, moving beyond simple point totals to more nuanced, data-driven methods. Rubric-based scoring is already common, and AI can automate applying the rubric, which makes results more consistent. The AI compares each submission against the rubric criteria and assigns a score for each one.
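To make the rubric idea concrete, here is a minimal sketch of how per-criterion points might be rolled up into a single weighted score. The criterion names, weights, and point scales are hypothetical, and the per-criterion points are simply given as input. How a platform's AI arrives at those points is not something this sketch tries to model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float    # relative importance; weights need not sum to 1
    max_points: int  # top of the scale for this criterion


def rubric_score(criteria, awarded):
    """Combine per-criterion points into a weighted score from 0 to 100."""
    total_weight = sum(c.weight for c in criteria)
    score = 0.0
    for c in criteria:
        points = awarded[c.name]
        if not 0 <= points <= c.max_points:
            raise ValueError(f"{c.name}: {points} is outside 0..{c.max_points}")
        # Normalize to 0..1, then scale by this criterion's share of the weight.
        score += (points / c.max_points) * (c.weight / total_weight)
    return round(score * 100, 1)


# Hypothetical rubric and points, for illustration only.
RUBRIC = [
    Criterion("originality", weight=2.0, max_points=10),
    Criterion("technique", weight=1.0, max_points=10),
    Criterion("impact", weight=1.0, max_points=5),
]

print(rubric_score(RUBRIC, {"originality": 8, "technique": 6, "impact": 4}))  # 75.0
```

Because every submission passes through the same function with the same weights, two entries with identical points always get identical totals, which is the consistency benefit described above.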

AI also supports comparative scoring, where submissions are ranked against each other rather than scored in isolation. The AI identifies outliers (exceptionally strong or weak submissions) and flags them for judge review, so the best work is recognized and the weakest entries can be screened out.
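As a rough illustration of comparative scoring, the sketch below ranks submissions by the share of head-to-head comparisons they win. This is a deliberately simple win-rate ranking chosen for readability, not the method any particular platform uses. The submission IDs and comparison results are made up.

```python
from collections import Counter


def rank_by_win_rate(comparisons):
    """Rank submissions by the fraction of pairwise comparisons they won.

    `comparisons` is an iterable of (winner, loser) pairs.
    """
    wins = Counter()
    appearances = Counter()
    for winner, loser in comparisons:
        wins[winner] += 1
        appearances[winner] += 1
        appearances[loser] += 1
    # Highest win rate first; break ties by ID so the order is deterministic.
    return sorted(appearances, key=lambda s: (-wins[s] / appearances[s], s))


# Hypothetical head-to-head results: each pair is (preferred, other).
results = [
    ("A", "B"), ("A", "C"), ("A", "D"),
    ("B", "C"), ("B", "D"),
    ("C", "D"),
]

print(rank_by_win_rate(results))  # ['A', 'B', 'C', 'D']
```

A ranking like this says which entry was preferred more often but not why, which is the explainability trade-off to keep in mind with comparative methods.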

Anomaly detection is a more advanced technique where AI algorithms identify submissions that deviate significantly from the norm. This is useful for detecting plagiarism, fraudulent submissions, or highlighting innovative work. It's about finding the unexpected.
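One simple way to see what "deviating from the norm" can mean is a modified z-score based on the median, sketched below. This is a standard statistical technique and a stand-in for illustration. It is not a description of how any judging platform implements anomaly detection. The scores are invented, and the 3.5 cutoff is a commonly used rule of thumb rather than a requirement.

```python
from statistics import median


def flag_outliers(scores, threshold=3.5):
    """Flag entries whose score is unusually far from the median.

    Uses the modified z-score: 0.6745 * (x - median) / MAD, where MAD is
    the median absolute deviation. Returns {entry_id: "high" | "low"}.
    """
    values = list(scores.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # At least half the scores are identical; the statistic is undefined.
        return {}
    flagged = {}
    for entry_id, value in scores.items():
        z = 0.6745 * (value - med) / mad
        if abs(z) > threshold:
            flagged[entry_id] = "high" if z > 0 else "low"
    return flagged


# Hypothetical scores for eight submissions.
scores = {"s1": 72, "s2": 75, "s3": 70, "s4": 74,
          "s5": 73, "s6": 98, "s7": 71, "s8": 31}

print(flag_outliers(scores))  # {'s6': 'high', 's8': 'low'}
```

Note that the output only says an entry is unusual. Whether `s6` is brilliant or plagiarized, and whether `s8` is weak or simply unconventional, is still a call for a human judge.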

The key benefit of AI-powered scoring methods is standardization: every submission is evaluated against the same criteria, which reduces subjectivity and produces fairer, more consistent results. However, AI is a tool, not a replacement for human judgment. Judges must review AI findings and make the final decision.

AI-Powered Judging Software Comparison - 2026

| Scoring Method | Ease of Implementation | Transparency & Explainability | Bias Reduction Potential | Suitable Competition Types |
|---|---|---|---|---|
| Rubric-Based AI 📏 | Generally straightforward, especially with platforms offering pre-built rubric templates. May require initial effort to define detailed criteria. | High. Scores are directly tied to rubric elements, providing clear justification. However, the AI's *interpretation* of rubric levels can be a black box. | Moderate. Reduces subjectivity by standardizing evaluation, but AI training data can still introduce bias. Careful rubric design is crucial. | Art, Writing, Design, any competition with clearly defined quality standards. |
| Comparative AI (Pairwise Ranking) 🆚 | Moderate. Requires a significant volume of submissions for effective ranking. Setup can be simpler than rubric-based if detailed criteria aren’t needed. | Low to Moderate. Explaining *why* one submission was ranked higher than another is challenging. Often relies on feature extraction the user can’t easily interpret. | Moderate. Can mitigate individual judge bias by focusing on relative differences. Still susceptible to systemic biases in the data. | Coding challenges, Photography, where aesthetic or functional preference is key, and detailed rubrics are difficult to create. |
| Anomaly Detection AI 🚨 | Complex. Requires specialized expertise in data science and machine learning to train and deploy effectively. Not typically a feature of off-the-shelf judging platforms. | Low. Identifying outliers doesn't inherently explain *why* they are outliers or whether the outlier represents positive or negative quality. | High. Focuses on identifying submissions that deviate significantly from the norm, potentially highlighting innovative or flawed entries. | Coding (identifying security vulnerabilities), Data Science competitions, situations where novelty is highly valued. |
| Hybrid (Rubric + Comparative) 🤝 | Moderate to High. Leverages the benefits of both approaches, but requires careful integration and configuration. | Moderate. Transparency depends on the weighting given to each method. Rubric scores provide some explainability, while comparative ranking remains less transparent. | High. Combining standardized rubrics with comparative analysis can reduce both individual and systemic biases. | Versatile - suitable for a wide range of competitions including Writing, Art, Coding, and Business Plan contests. |
| Evalato (Platform Example) 🏆 | Reportedly offers a user-friendly interface and streamlined workflow. Implementation complexity depends on the contest setup. | Moderate. Provides reporting and analytics, but the underlying AI algorithms are not fully transparent. | Potentially Moderate. Evalato emphasizes features like blind judging to reduce bias. | Supports a variety of competition types including essay contests, design challenges, and innovation awards. |
| Judgify (Platform Example) ⚙️ | Focuses on end-to-end contest management, potentially simplifying implementation. Offers features like submission management and public voting. | Moderate. Provides scoring and reporting tools, but detailed algorithm explainability may be limited. | Potentially Moderate. Judgify offers advanced scoring options and security features. | Suitable for a broad range of contests, including abstract submissions, award nominations, and general competitions. |

This comparison is illustrative. Verify current pricing, limits, and product details in each vendor's official documentation before relying on it.

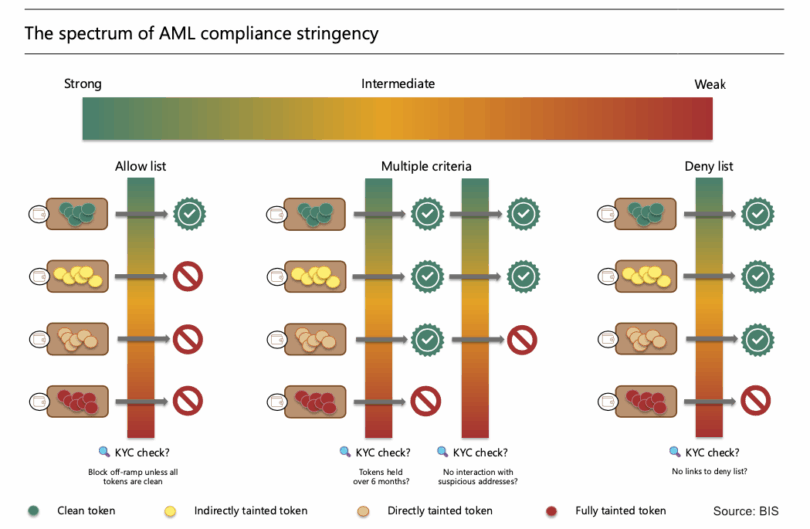

Bias Detection and Fairness

A promising application of AI in judging is bias detection. AI algorithms can analyze submissions for potential biases related to gender, ethnicity, age, or other demographic factors, flagging submissions that use gendered language or stereotypes. This is a significant step toward more inclusive and equitable competitions.
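For a sense of what flagging gendered language can look like at its simplest, here is a keyword-based sketch. It is far cruder than the AI-driven analysis described above and is not how any named platform works. The watch list and suggested alternatives are hypothetical placeholders that would need expert review before real use.

```python
import re

# Hypothetical, deliberately tiny watch list mapping a term to a neutral option.
GENDERED_TERMS = {
    "chairman": "chair",
    "manpower": "workforce",
    "salesman": "salesperson",
}

# Match whole words only, ignoring case.
_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, GENDERED_TERMS)) + r")\b",
    re.IGNORECASE,
)


def flag_gendered_terms(text):
    """Return (position, term, suggested alternative) for each match."""
    findings = []
    for match in _PATTERN.finditer(text):
        term = match.group(1).lower()
        findings.append((match.start(), term, GENDERED_TERMS[term]))
    return findings


sample = "The chairman asked for more manpower."
for position, term, suggestion in flag_gendered_terms(sample):
    print(f"char {position}: '{term}' (consider '{suggestion}')")
```

A list like this has no sense of context, so a quoted historical title or a character's dialogue would be flagged just the same. The output is a prompt for a judge to look closer, not a verdict.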

However, AI's limitations in bias detection must be understood. AI is only as unbiased as its training data; biased data leads to biased AI. This is a serious concern, making human oversight crucial. Judges must critically evaluate AI findings to ensure they do not reinforce existing inequalities.

AI can also produce false positives, flagging submissions as biased when they are not. This can frustrate judges and lead to unfair outcomes. AI should be used as a screening tool, not a definitive judge of bias; the final decision should always rest with a human.

To mitigate bias, look for platforms with transparent AI algorithms. Understand how the AI makes decisions and what data it uses. The goal is fairness: minimizing the impact of unconscious biases, not eliminating all subjectivity.

- Review AI Findings: Don't accept AI bias detections at face value.

- Understand Training Data: Ask vendors about the data used to train their AI.

- Maintain Human Oversight: Judges should always have the final say.

Integration and Workflow

Consider how easily the AI judging platform integrates with your existing competition management systems. If you already use a platform like Judgify, look for an AI solution that integrates with it cleanly; this saves time and effort and reduces errors.

Most AI judging platforms offer APIs for connecting with other systems, but integration complexity varies. Some offer pre-built integrations with popular systems, while others require custom development. Consider your technical resources and budget.

The learning curve for judges is another important factor. Look for a platform with a clear, intuitive interface and adequate training materials. Easier platforms are more likely to be adopted by judges.

Cost Considerations and ROI

AI judging platforms vary significantly in price. Some offer subscription-based pricing, while others charge per submission. Some platforms offer tiered pricing plans, with different features and usage limits. The cost will depend on the size and complexity of your competition, as well as the features you need.

Evaluating the return on investment (ROI) can be tricky. The most obvious cost savings come from reduced judging time. AI can automate many of the tedious tasks that judges used to do manually, freeing up their time to focus on more important aspects of the judging process. However, quantifying these savings can be difficult.
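Even so, a back-of-the-envelope estimate can help frame the decision. The sketch below shows the arithmetic under stated assumptions. Every figure in it (submission count, minutes saved, hourly rate, platform cost) is a hypothetical placeholder to be replaced with your own numbers.

```python
def judging_roi(submissions, minutes_saved_per_submission,
                judge_hourly_rate, platform_cost):
    """Estimate time and cost savings from reduced judging time."""
    hours_saved = submissions * minutes_saved_per_submission / 60
    savings = hours_saved * judge_hourly_rate
    net = savings - platform_cost
    return {
        "hours_saved": hours_saved,
        "savings": savings,
        "net": net,
        "roi_pct": round(net / platform_cost * 100, 1),
    }


# Hypothetical inputs: 600 entries, 5 minutes saved on each,
# judges valued at 40 per hour, platform costing 1500 in the same currency.
estimate = judging_roi(
    submissions=600,
    minutes_saved_per_submission=5,
    judge_hourly_rate=40,
    platform_cost=1500,
)
print(estimate)
# {'hours_saved': 50.0, 'savings': 2000.0, 'net': 500.0, 'roi_pct': 33.3}
```

The result is only as good as the minutes-saved assumption, which is the hardest input to pin down. Running the numbers with a pessimistic and an optimistic value gives a more honest range than a single figure.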

Other potential benefits include increased efficiency, improved consistency, and reduced bias. These benefits are harder to measure, but they can have a significant impact on the overall quality of your competition. It’s important to weigh the costs and benefits carefully before making a decision.

Don’t forget to factor in the cost of training and integration. These costs can add up quickly, especially if you need to hire external consultants. Be sure to get a clear understanding of all the costs involved before signing a contract.

Platforms Worth a Closer Look

From the options we discussed, Scorecast and CritiqueAI stand out as particularly promising. Scorecast’s focus on bias detection is incredibly valuable, especially in today’s environment. While the AI isn’t perfect, it provides a valuable layer of scrutiny and helps to ensure that judging is as fair as possible. Their commitment to transparency in their algorithms is also a plus.

CritiqueAI shines in creative competitions. The ability to provide automated feedback on artistic submissions is a game-changer. It’s not about replacing human critique, but about augmenting it. Judges can use CritiqueAI to quickly identify areas for improvement and to provide more targeted and constructive feedback. This is particularly useful for competitions with a large number of submissions.

Finally, Judgify remains a solid all-around choice. Its comprehensive feature set and focus on branding and promotion make it a good option for organizations looking for a complete competition management solution. While it may not be the most innovative platform, it’s reliable and well-supported.
