The shift toward AI judging

Organizing a competition is getting harder. Entry counts are climbing, and people expect results instantly. Paying a panel of human experts to review thousands of submissions is expensive and slow. AI tools are now reliable enough to handle the heavy lifting of initial scoring without the overhead of a massive human team.

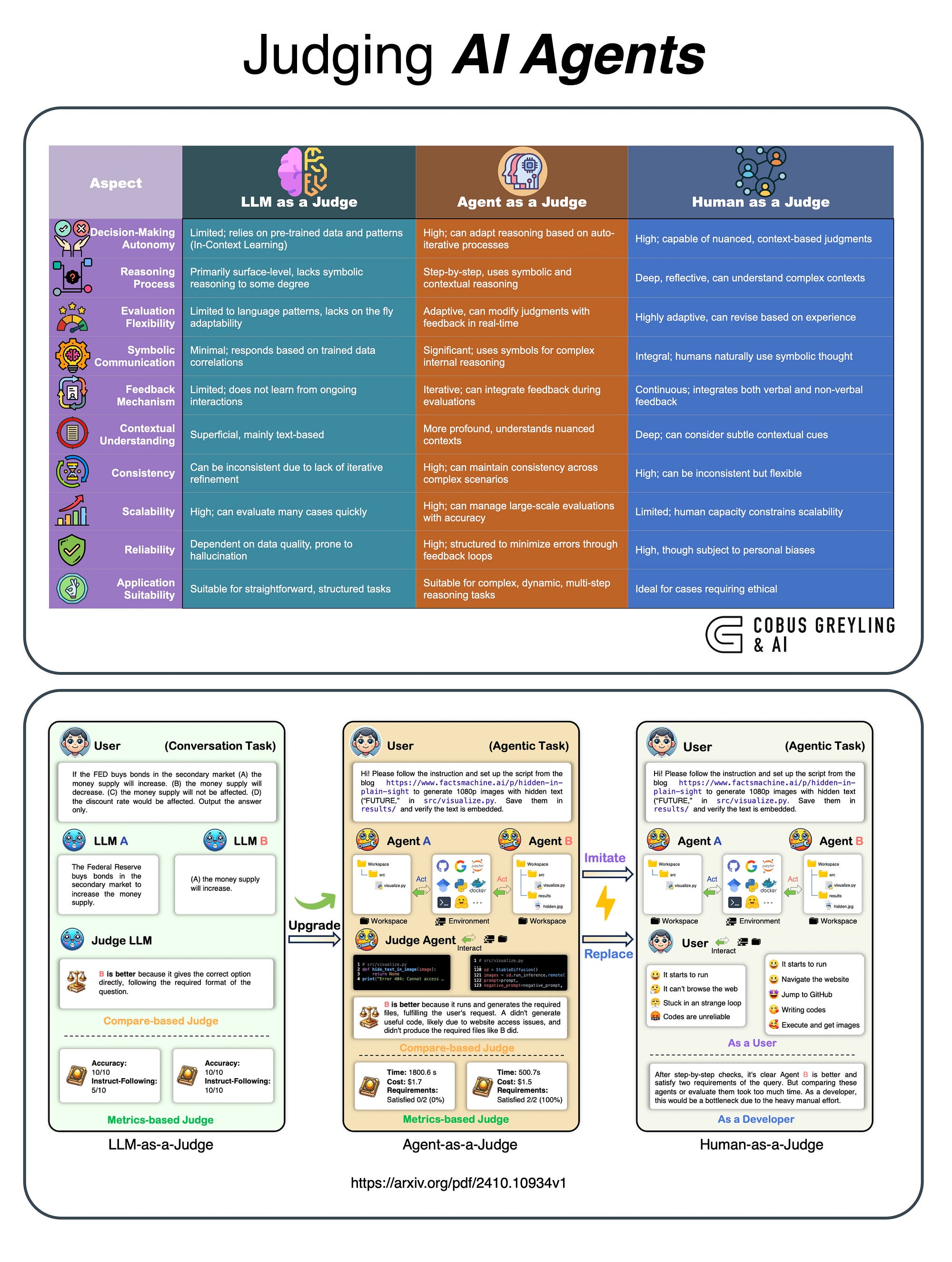

Initial skepticism about AI judging is fading. Early concerns that AI would miss nuance or creativity are being addressed with more capable machine learning models. The question is shifting from “can AI judge?” to “how can AI enhance judging?” The platforms aren’t intended to replace human oversight entirely, at least not yet. They augment it by handling the initial screening and scoring and by flagging submissions for closer human review.

The market for judging software has grown rapidly, according to reports from JudgingHub, and the number of options can be overwhelming. The platforms gaining traction do more than digitize paper forms or automate existing processes: they use AI to identify patterns, detect plagiarism, and help the best submissions rise to the top. Participants benefit too, through faster feedback and increased transparency.

By 2026, AI judging isn't a futuristic concept; it’s a mainstream reality. While concerns about data privacy and algorithmic bias remain, the advantages in terms of efficiency and scalability are proving too significant to ignore. We’re entering an era where AI will be integral to ensuring fair and efficient competition judging across a wide range of disciplines.

Essential features for your workflow

Choosing the right AI judging platform requires careful consideration of your specific needs. Beyond the basic features, several key capabilities are essential for ensuring a fair and efficient judging process. Automated scoring is a cornerstone, but the sophistication of the algorithms varies significantly between platforms. Look for platforms that allow you to customize scoring rubrics and weight different criteria.
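
To make rubric weighting concrete, here is a minimal sketch of how per-criterion scores might be combined into one final score. The criterion names, weights, and the `weighted_score` helper are assumptions for illustration, not any platform's actual API.

```python
from typing import Dict


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Combine per-criterion scores into one number using rubric weights."""
    if set(scores) != set(weights):
        raise ValueError("scores and weights must cover the same criteria")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # Dividing by the total means the weights need not add up to exactly 1.
    return sum(scores[c] * weights[c] for c in weights) / total_weight


# Hypothetical rubric: criterion names and weights are illustrative only.
rubric_weights = {"originality": 0.5, "execution": 0.3, "presentation": 0.2}
entry_scores = {"originality": 8.0, "execution": 6.0, "presentation": 9.0}

final = weighted_score(entry_scores, rubric_weights)
print(round(final, 2))
```

Changing the weights is how an organizer would express that, say, originality matters more than presentation for a given competition.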

Plagiarism detection is particularly important for text-based submissions. Robust plagiarism detection tools can help identify submissions that are not original, ensuring that participants are evaluated on their own merits. Bias mitigation techniques are equally crucial. AI algorithms can inadvertently perpetuate existing biases, so it’s essential to choose a platform that actively addresses this issue through anonymization of submissions or other methods.
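
As a rough illustration of what a text-overlap check does, the sketch below compares two entries by the word sequences they share (Jaccard similarity over three-word "shingles"). This is a simplified stand-in, not how any named platform works; the `REVIEW_THRESHOLD` value and the sample texts are assumptions.

```python
import re
from typing import Set, Tuple


def shingles(text: str, n: int = 3) -> Set[Tuple[str, ...]]:
    """Return the set of overlapping n-word sequences in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < n:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard_similarity(a: str, b: str, n: int = 3) -> float:
    """Shared shingles divided by all distinct shingles, from 0.0 to 1.0."""
    sa, sb = shingles(a, n), shingles(b, n)
    if not sa and not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


# Hypothetical cut-off: tune it on real entries before relying on it.
REVIEW_THRESHOLD = 0.3

original = "The quick brown fox jumps over the lazy dog"
suspect = "The quick brown fox leaps over the lazy dog"

similarity = jaccard_similarity(original, suspect)
needs_review = similarity >= REVIEW_THRESHOLD
print(similarity, needs_review)
```

A high score is a reason to send the pair to a human reviewer, not proof of copying: quotations and common phrases also produce overlap.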

Support for different scoring rubrics is another key consideration. Competitions often use different scoring systems, so the platform should be flexible enough to accommodate your specific requirements. Reporting and analytics are essential for tracking judging patterns and identifying potential issues. A good platform will provide detailed insights into how submissions were scored, allowing you to identify potential biases or inconsistencies. Look for customizable reports and data export options.

Transparency is paramount. Organizers need to understand how the AI arrives at its scores, because a “black box” system erodes trust. Platforms that offer explainable AI (XAI) features are gaining prominence. These features show which factors influenced the AI’s scoring decisions, which helps build confidence in the process.

- Automated Scoring: Customizable rubrics and weighting.

- Plagiarism Detection: Robust tools for text-based submissions.

- Bias Mitigation: Anonymization and bias detection algorithms.

- Scoring Rubric Support: Flexibility to accommodate different systems.

- Reporting & Analytics: Customizable reports and data export.

AI-Powered Judging Software Comparison - 2026

| Platform | Ease of Use | Supported Media Types | Bias Detection | Integration Options | Customer Support |
|---|---|---|---|---|---|
| JudgingHub | Good | Text, Image, Video | Fair | API, Zapier | Good |
| Evalato | Good | Text, Image | Fair | API | Good |
| AwardStage | Fair | Text, Image, Audio | Limited | Zapier | Fair |
| Submittable | Fair | Text, Image, Video, Audio | Limited | API, Webhooks | Good |
| FilmFreeway | Fair | Video | Limited | API | Fair |
| Qualtrics | Fair | Text, Image | Good | API, Various Integrations | Excellent |
| SurveyMonkey Apply | Good | Text | Limited | API, Integrations | Good |

Illustrative comparison based on the article research brief. Verify current pricing, limits, and product details in the official docs before relying on it.

Media Type Support: A Critical Distinction

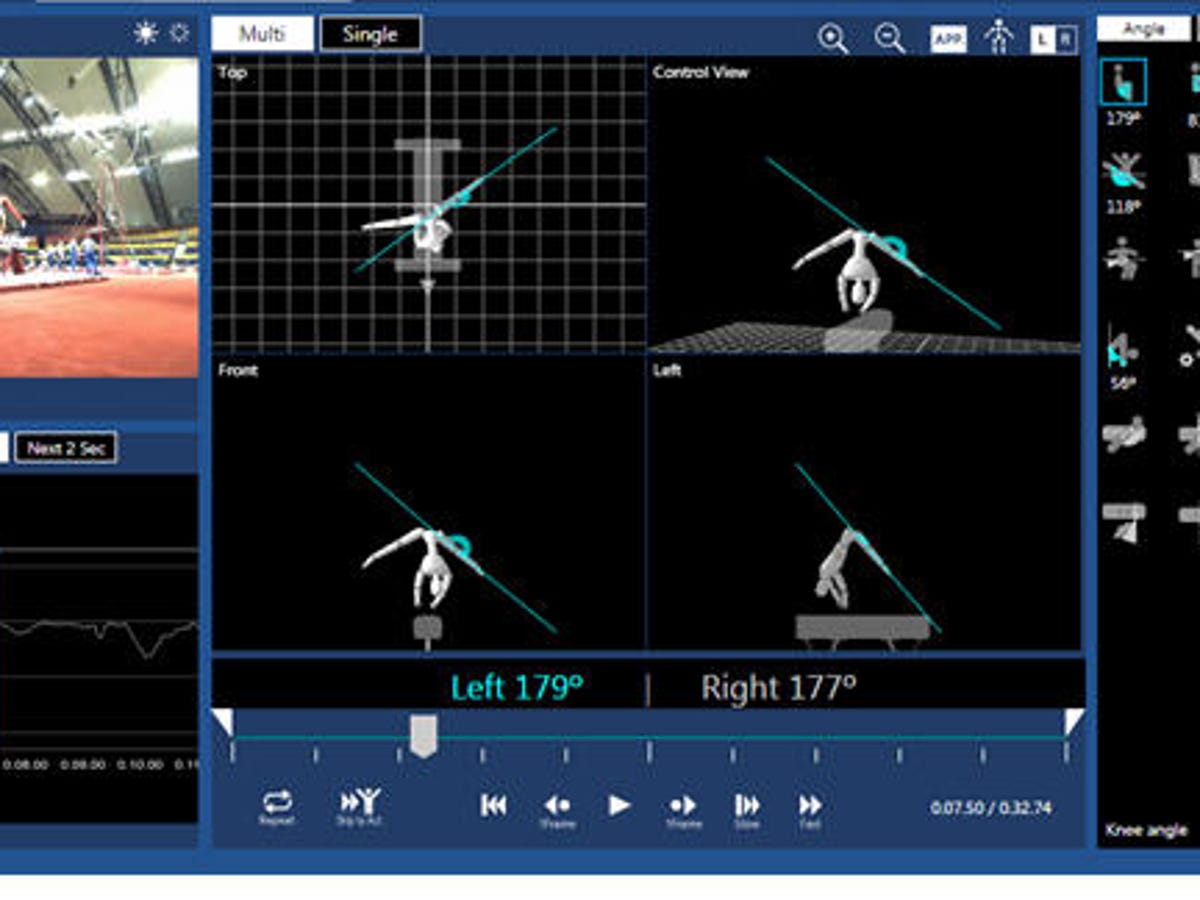

Not all AI judging platforms are created equal when it comes to media type support. Some excel at evaluating text-based submissions, while others are better suited for visual arts or video/audio content. Zenith Judging is specifically designed for visual media, offering AI-powered analysis of composition and aesthetics. This is a significant advantage for photography or graphic design competitions.

For written submissions, platforms like Evalato and JudgingHub offer robust plagiarism detection and natural language processing capabilities. These tools can assess grammar, style, and overall quality. However, evaluating creative writing often requires a more nuanced approach. AI can identify technical flaws, but it may struggle to appreciate artistic merit or originality.

Video and audio content present unique challenges for AI judging. Platforms are beginning to incorporate features for analyzing video quality, audio clarity, and even emotional tone. However, these technologies are still in their early stages of development, so human review remains essential for evaluating the artistic and technical aspects of video and audio submissions. Separately, for competitions where entries involve code or interactive elements, DOMjudge's automated testing capabilities are useful.

Before selecting a platform, carefully consider the primary media type of your competition. Choosing a platform that is well-suited for your specific needs will significantly improve the accuracy and efficiency of the judging process. A platform that attempts to be a jack-of-all-trades may not excel at any one particular media type.

Bias Detection and Mitigation

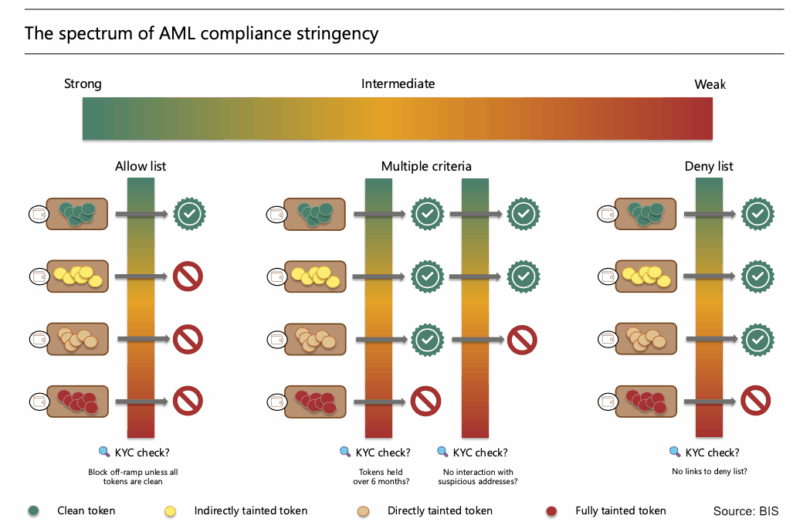

The potential for bias in AI judging is a serious concern. AI algorithms are trained on data, and if that data reflects existing biases, the algorithms will likely perpetuate them. This can lead to unfair or discriminatory outcomes. Leading platforms are actively addressing this issue through various techniques, but it’s crucial to understand the limitations.

Anonymizing submissions is a common approach to mitigating bias. By removing identifying information, such as the participant’s name or affiliation, the AI can focus solely on the content of the submission. However, anonymization is not always foolproof. Subtle clues in the writing style or content may still reveal the author’s identity. Some platforms use techniques like adversarial debiasing to actively remove bias from the training data.
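
Here is a minimal sketch of the anonymization step, assuming entries arrive as simple dictionaries. The field names in `IDENTIFYING_FIELDS` and the `anonymize` helper are hypothetical; adjust them to whatever your entry form actually collects.

```python
from typing import Any, Dict, Iterable

# Hypothetical field names for illustration only.
IDENTIFYING_FIELDS = ("name", "email", "affiliation")


def anonymize(entry: Dict[str, Any], entry_id: str,
              identifying_fields: Iterable[str] = IDENTIFYING_FIELDS) -> Dict[str, Any]:
    """Return a copy of the entry without identifying fields, tagged with an opaque ID."""
    hidden = set(identifying_fields)
    blinded = {key: value for key, value in entry.items() if key not in hidden}
    # The opaque ID lets organizers map results back to entrants after judging.
    blinded["entry_id"] = entry_id
    return blinded


entry = {
    "name": "A. Author",
    "affiliation": "Example University",
    "title": "Night Harbor",
    "body": "Fog rolled in...",
}

blinded = anonymize(entry, "E-001")
print(blinded)
```

This only removes structured fields. It does nothing about clues inside the work itself, which is the limitation described above.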

Identifying potentially biased scoring patterns is another important step. Platforms like Evalato provide detailed analytics that can reveal whether certain groups of participants are consistently being scored lower than others. This information can be used to investigate potential biases and adjust the scoring rubrics accordingly. It’s important to note that bias detection is an ongoing process.
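
The kind of check such analytics perform can be sketched as follows, assuming you can export scores with a group label attached. The group names, the `gap` threshold, and both helper functions are assumptions for illustration; this is not Evalato's implementation, and a simple gap is not a statistical test.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Tuple


def mean_score_by_group(rows: Iterable[Tuple[str, float]]) -> Dict[str, float]:
    """Average score for each group label."""
    grouped: Dict[str, List[float]] = defaultdict(list)
    for group, score in rows:
        grouped[group].append(score)
    return {group: mean(scores) for group, scores in grouped.items()}


def groups_below_overall(rows: Iterable[Tuple[str, float]], gap: float = 1.0) -> List[str]:
    """List groups whose mean score trails the overall mean by at least `gap`."""
    rows = list(rows)
    if not rows:
        return []
    overall = mean(score for _, score in rows)
    by_group = mean_score_by_group(rows)
    return sorted(g for g, m in by_group.items() if overall - m >= gap)


# Hypothetical export: (group label, final score) per entry.
rows = [
    ("region_a", 8.0), ("region_a", 7.0),
    ("region_b", 5.0), ("region_b", 4.0),
    ("region_c", 7.5), ("region_c", 6.5),
]

flagged = groups_below_overall(rows, gap=1.0)
print(flagged)
```

A flagged group is a prompt to investigate the rubric and the entries, not evidence of bias on its own, especially with small samples.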

Organizers also have a role to play in mitigating bias. Carefully reviewing the scoring rubrics and ensuring that they are fair and objective is essential. Providing clear guidelines to human judges can also help to reduce the impact of unconscious bias. Transparency in the judging process is key to building trust and ensuring fairness. This is an area where ongoing research and development are critical.

- FAQ: How can organizers reduce bias in AI judging?

- A: Strip names and affiliations from entries so the AI only sees the work, review scoring rubrics for bias, provide clear guidelines to human judges, and use platforms with bias detection features.

- FAQ: Can AI truly be unbiased?

- A: Not entirely. AI reflects the data it's trained on. Mitigation techniques can reduce bias, but ongoing monitoring is essential.

Platforms Worth a Closer Look

While each platform has its strengths, a few stand out for their innovation and potential. Evalato’s robust analytics and bias detection features make it a particularly promising option for organizations that prioritize fairness and transparency.

CompetitionHQ’s focus on user experience is also noteworthy. The platform’s clean, intuitive interface makes it easy for both organizers and participants to navigate. This is especially important for competitions that attract a diverse range of users with varying levels of technical expertise.

Zenith Judging, with its specialized focus on visual arts, offers a unique value proposition. Its AI-powered analysis of aesthetic elements can provide valuable insights for judges and help to identify truly outstanding submissions. These three platforms represent the cutting edge of AI-powered judging technology.

- Evalato: Excellent analytics and bias detection.

- CompetitionHQ: Superior user experience.

- Zenith Judging: Specialized for visual arts.

