The shift toward automated evaluation

Competition judging has long relied on the dedication of human judges. This system isn’t without its challenges: finding enough qualified judges, ensuring consistent scoring, and mitigating bias are ongoing concerns for contest organizers. The process can be time-consuming and expensive, and because judges are often volunteering their expertise, that’s a big ask.

Now, we’re seeing a shift. Artificial intelligence isn’t poised to replace human judges, but it’s rapidly becoming a powerful tool to augment their capabilities. Think of it as a co-pilot, assisting with tasks like initial screening, data analysis, and identifying potential outliers. This frees up human judges to focus on the more nuanced aspects of evaluation – the creative spark, the innovative thinking, the subtle details that an algorithm might miss.

Competitions benefiting the most from this early AI integration are those with large submission volumes and clearly defined criteria. Art contests, writing competitions, science fairs, and even marketing award shows are all prime candidates. The ability of AI to quickly process and analyze data is a game-changer, especially when dealing with hundreds or even thousands of entries. However, it's not just about speed. AI can also help to improve the fairness of judging, a crucial consideration for any competition.

It's important to be realistic. AI judging is still evolving. The technology isn’t perfect, and it’s not a silver bullet. But the potential benefits are significant enough that we’re seeing increasing adoption across a wide range of industries. In 2026, expect AI to be a standard component of the judging process for many major competitions, working alongside human expertise to deliver more efficient, consistent, and objective evaluations.

Top platforms for 2026

The market is crowded, but these seven platforms stand out for 2026. Each fits a different niche depending on your budget and the type of entries you're reviewing.

Judgify.me is a comprehensive award management system. It’s more than just judging software; it handles everything from contest creation and submission collection to award distribution. It’s a good option for organizations running multiple contests annually and needing an all-in-one solution. They emphasize streamlining the entire process, making it easier for both organizers and judges.

Evalato.com positions itself as a platform for awards programs, and its judging tools reflect that. It offers features for managing the judging process, including blind judging, scoring rubrics, and detailed reporting. It also focuses on data privacy and security, which is critical for maintaining the integrity of the competition.

RocketJudge is geared towards in-person and hybrid events. Their mobile judging app allows judges to interview participants and submit scores directly from their devices. This is particularly useful for events like hackathons, pitch competitions, and robotics competitions where real-time feedback is essential. Their strength is in live event scenarios.

DOMjudge is an open-source platform traditionally used in programming contests. While it requires more technical expertise to set up and maintain, it offers a high degree of customization and control. It’s a popular choice for universities and technical institutions. It’s a powerful tool, but not necessarily the most user-friendly.

Awardify (a newer entrant, gaining traction) focuses on creative competitions – art, design, photography. They offer AI-powered tools for analyzing visual submissions, identifying potential copyright issues, and providing preliminary scores based on aesthetic criteria. It’s a niche platform, but very strong within its area.

ScoreVision is a platform geared toward performance-based competitions like dance, cheerleading, and music. They offer tools for capturing and analyzing video submissions, providing judges with a standardized scoring interface. They are focused on events with a strong visual component.

ContestHQ is a more general-purpose competition platform that has recently integrated AI features. They offer a range of tools for managing submissions, judging, and communication. It's a good option for smaller competitions that don't require advanced AI capabilities.


How these systems score entries

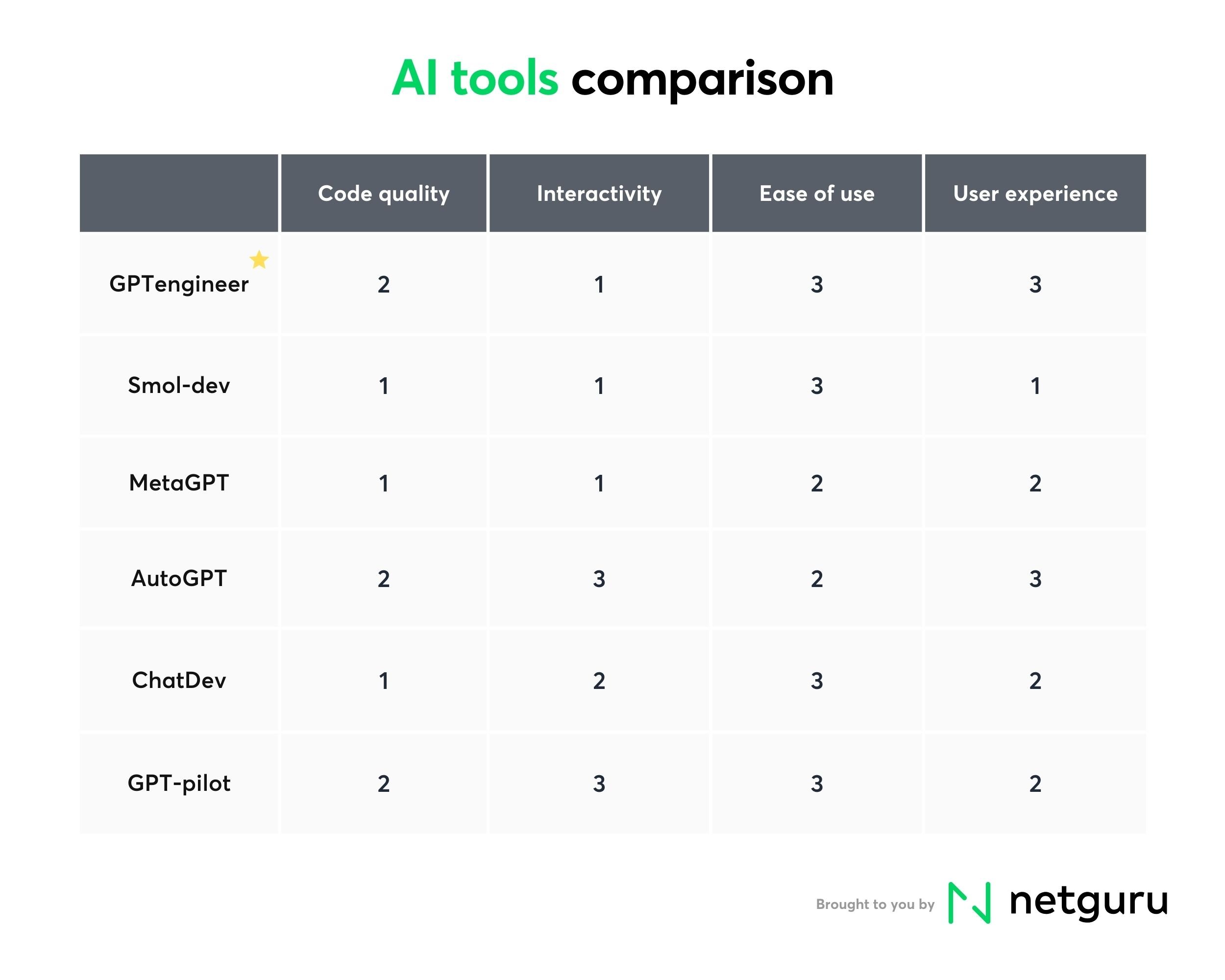

AI judging platforms employ a variety of scoring methods, each with its own strengths and weaknesses. Understanding these methods is crucial for choosing the right platform and ensuring the fairness of your competition. One common approach is rubric-based scoring. The AI is trained to evaluate submissions based on a predefined set of criteria, assigning points for each criterion. This is effective for competitions with clear and objective standards.
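To make rubric-based scoring concrete, here is a minimal sketch of how per-criterion points might be combined into a single weighted score. The criterion names, weights, and the 0-100 scale are assumptions chosen for illustration; they do not describe how any particular platform works, and in practice the points themselves would come from a trained model or a human judge.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float      # relative importance; weights need not sum to 1
    max_points: float  # highest number of points that can be awarded


def rubric_score(criteria, points):
    """Return a weighted score on a 0-100 scale.

    `points` maps criterion name -> points awarded to one submission.
    """
    total_weight = sum(c.weight for c in criteria)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    score = 0.0
    for c in criteria:
        awarded = points[c.name]
        if not 0 <= awarded <= c.max_points:
            raise ValueError(f"{c.name}: {awarded} is outside 0..{c.max_points}")
        # Normalize each criterion to 0..1 before applying its weight.
        score += c.weight * (awarded / c.max_points)
    return 100.0 * score / total_weight


# Hypothetical rubric for a writing contest.
RUBRIC = [
    Criterion("originality", weight=2, max_points=10),
    Criterion("clarity", weight=1, max_points=10),
    Criterion("adherence_to_theme", weight=1, max_points=5),
]

awarded = {"originality": 8, "clarity": 6, "adherence_to_theme": 5}
print(round(rubric_score(RUBRIC, awarded), 1))
```

Normalizing each criterion before weighting keeps a criterion with a larger point range from dominating the total by accident.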

Comparative judgment is another popular technique. Instead of scoring submissions against a fixed rubric, the AI compares pairs of submissions and determines which one is better. This method is particularly useful for subjective evaluations, like judging creative writing or artwork. The individual pairwise verdicts are then aggregated into an overall ranking of the entries.
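One common way to turn pairwise verdicts into a ranking is the Bradley-Terry model, which estimates a "strength" for each entry from who beat whom. The sketch below is an assumption about how this could be done, not a description of any platform's method. It expects each comparison to involve two different entries, and the entry names are made up.

```python
from collections import defaultdict


def bradley_terry(comparisons, iterations=200):
    """Estimate a strength per entry from (winner, loser) pairs.

    Uses the standard iterative (minorization-maximization) update for
    the Bradley-Terry model. Returns {entry: strength}, summing to 1.
    """
    wins = defaultdict(int)
    pair_counts = defaultdict(int)  # unordered pair -> times compared
    entries = set()
    for winner, loser in comparisons:
        wins[winner] += 1
        pair_counts[frozenset((winner, loser))] += 1
        entries.update((winner, loser))

    strength = {e: 1.0 / len(entries) for e in entries}
    for _ in range(iterations):
        updated = {}
        for i in entries:
            denom = 0.0
            for pair, n in pair_counts.items():
                if i in pair:
                    (j,) = pair - {i}  # the other entry in this pair
                    denom += n / (strength[i] + strength[j])
            updated[i] = wins[i] / denom if denom else strength[i]
        total = sum(updated.values())
        strength = {e: s / total for e, s in updated.items()}
    return strength


# Hypothetical verdicts: each tuple is (winner, loser).
VERDICTS = (
    [("A", "B")] * 3 + [("B", "A")]
    + [("B", "C")] * 3 + [("C", "B")]
    + [("A", "C")] * 3 + [("C", "A")]
)

strengths = bradley_terry(VERDICTS)
ranking = sorted(strengths, key=strengths.get, reverse=True)
print(ranking)
```

The more comparisons each entry appears in, the more stable its estimated strength becomes, which is why this approach benefits from a large number of pairings.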

Anomaly detection is a powerful tool for identifying potential plagiarism or rule violations. The AI analyzes submissions for unusual patterns or similarities to existing content. This can help to ensure that all submissions are original and comply with the competition guidelines. RocketJudge’s real-time monitoring during live judging events utilizes a similar principle, flagging submissions that deviate from expected norms.
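As a rough illustration of the similarity check described above, the following sketch flags pairs of text submissions that share many three-word sequences (shingles), using Jaccard similarity. The shingle size, the 0.5 threshold, and the sample entries are all assumptions for the example; production plagiarism detection is considerably more involved and would also compare against external sources.

```python
import re
from itertools import combinations


def shingles(text, k=3):
    """Return the set of k-word shingles in `text` (lowercased, punctuation dropped)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < k:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a, b):
    """Share of shingles two sets have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def flag_similar(submissions, threshold=0.5):
    """Yield (id_a, id_b, similarity) for pairs at or above `threshold`."""
    sets = {sid: shingles(text) for sid, text in submissions.items()}
    for a, b in combinations(sorted(sets), 2):
        similarity = jaccard(sets[a], sets[b])
        if similarity >= threshold:
            yield a, b, similarity


# Hypothetical entries keyed by submission ID.
SUBMISSIONS = {
    "e1": "The quick brown fox jumps over the lazy dog near the river bank",
    "e2": "The quick brown fox jumps over the lazy dog near the river",
    "e3": "An entirely different essay about renewable energy policy in cities",
}

for a, b, similarity in flag_similar(SUBMISSIONS):
    print(f"{a} and {b} look similar ({similarity:.2f}); send to a human reviewer")
```

A flag here is only a prompt for human review, not proof of a violation.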

Sentiment analysis is useful for judging creative content where emotional impact is important. The AI analyzes the text or visual content to determine the overall sentiment expressed. This can be helpful for evaluating marketing copy, advertising campaigns, or even poetry. However, it's important to remember that sentiment analysis is not always accurate and should be used in conjunction with human judgment.
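To show why sentiment scores need a human check, here is a deliberately tiny lexicon-based scorer. The word list and the negation rule are assumptions made up for this example; real systems rely on much larger lexicons or trained models.

```python
import re

# Tiny hypothetical lexicon: +1 for positive words, -1 for negative ones.
LEXICON = {
    "love": 1, "great": 1, "bright": 1, "joy": 1,
    "hate": -1, "dull": -1, "terrible": -1, "sad": -1,
}
NEGATORS = {"not", "never", "no"}


def sentiment(text):
    """Return a score in [-1, 1]: the mean polarity of known words.

    A word directly preceded by a negator has its polarity flipped.
    """
    tokens = re.findall(r"[a-z']+", text.lower())
    polarities = []
    for i, token in enumerate(tokens):
        if token in LEXICON:
            polarity = LEXICON[token]
            if i > 0 and tokens[i - 1] in NEGATORS:
                polarity = -polarity
            polarities.append(polarity)
    return sum(polarities) / len(polarities) if polarities else 0.0


print(sentiment("I love this bright campaign"))
print(sentiment("Not great, rather dull"))
print(sentiment("Oh great, another terrible delay"))
```

The last sentence is sarcastic and clearly negative to a human reader, yet the scorer treats "great" as praise and lands on a neutral score. That is exactly the kind of miss a judge needs to catch.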

AI-Powered Judging Method Comparison - 2026

| Scoring Method | Entry Type Suitability | Implementation Complexity | Bias Potential | Data Requirements |
|---|---|---|---|---|
| Rubric-Based AI | Well-suited for entries with clearly defined criteria (e.g., essays, reports, code). | Moderate - requires careful rubric design and AI training on rubric application. | Moderate - bias can be present in the rubric itself or in the AI's interpretation of rubric elements. | Moderate - requires a sufficient number of scored examples for training. |
| Comparative Judgment | Effective for subjective entries where absolute standards are difficult (e.g., art, design, creative writing). | Low to Moderate - simpler to implement than rubric-based systems, but requires participant pairing and preference collection. | Low - relies on relative comparisons, reducing the impact of individual judge biases. | High - benefits from a large number of pairwise comparisons for reliable ranking. |
| Anomaly Detection | Useful for identifying outliers or unusual submissions (e.g., detecting plagiarism, identifying exceptional performance). | Moderate - requires defining 'normal' behavior and training the AI on a representative dataset. | Low to Moderate - potential for false positives/negatives; bias in the training data can lead to skewed results. | High - requires a large and diverse dataset to establish a baseline of normal submissions. |
| Sentiment Analysis | Applicable to text-based entries (e.g., comments, reviews, social media posts) to assess tone and emotional impact. | Low - readily available APIs and libraries simplify implementation. | High - susceptible to misinterpreting sarcasm, nuance, and cultural context. | Moderate - requires a substantial corpus of text data for accurate sentiment classification. |
| Hybrid Approaches (Rubric + Comparative) | Versatile, combining the structure of rubrics with the nuance of comparative judgment. | High - most complex to implement, requiring integration of multiple AI techniques. | Moderate - potential biases from both rubric design and comparative judgment process. | High - benefits from both rubric-defined examples and large-scale pairwise comparisons. |
| Hybrid Approaches (Anomaly + Rubric) | Useful for identifying submissions that deviate from expected quality based on rubric criteria. | Moderate to High - requires integration of anomaly detection and rubric scoring systems. | Moderate - potential for bias in both the rubric and anomaly detection model. | High - requires both a representative dataset for anomaly detection and scored examples for rubric training. |

This comparison is illustrative. Verify current product details in each platform's official documentation before relying on it.

Addressing algorithmic bias

It’s a critical point: AI isn’t inherently objective. It learns from the data it’s trained on, and if that data reflects existing biases, the AI will perpetuate those biases. For example, an AI trained on a dataset of predominantly male-authored articles might unfairly favor submissions from male authors. This is a serious concern that competition organizers need to address proactively.

Leading AI judging platforms are taking steps to mitigate bias. Data diversification is a key strategy, ensuring that the training data is representative of the diverse range of submissions they’re likely to encounter. Algorithmic transparency is also important, allowing organizers to understand how the AI is making its decisions. Some platforms provide explanations for their scores, helping to identify potential biases.

However, even with these safeguards, AI bias remains a challenge. Human oversight is essential. AI should augment human judgment, not replace it entirely. Judges should review the AI’s scores and look for any patterns that suggest bias. It’s also important to audit AI systems regularly to ensure fairness. This involves testing the AI with a variety of submissions and analyzing the results for disparities.

Ultimately, mitigating bias is an ongoing process. It requires a commitment to fairness, transparency, and continuous improvement. Competition organizers need to be vigilant and proactive in addressing this critical issue.

- Audit the system every season to check for scoring disparities.

- Ensure data diversification in training datasets.

- Prioritize algorithmic transparency.

- Maintain human oversight of AI-generated scores.
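A seasonal audit along these lines can start very simply. The sketch below compares AI scores with human scores per group of entrants and flags the system when one group is treated noticeably differently. The group labels, the sample scores, and the 5-point tolerance are assumptions for illustration; a real audit would use more data and a proper statistical test.

```python
from collections import defaultdict
from statistics import mean


def audit_by_group(records, max_gap=5.0):
    """Compare AI and human scores per group.

    `records` is an iterable of (group, ai_score, human_score) tuples.
    Returns (mean_diff_by_group, gap, flagged), where gap is the spread
    between the largest and smallest mean (AI - human) difference.
    """
    diffs = defaultdict(list)
    for group, ai_score, human_score in records:
        diffs[group].append(ai_score - human_score)
    if not diffs:
        raise ValueError("no records to audit")
    mean_diff = {group: mean(values) for group, values in diffs.items()}
    gap = max(mean_diff.values()) - min(mean_diff.values())
    return mean_diff, gap, gap > max_gap


# Hypothetical audit sample: (group, AI score, human score).
RECORDS = [
    ("group_a", 80.0, 78.0), ("group_a", 70.0, 71.0), ("group_a", 90.0, 88.0),
    ("group_b", 60.0, 68.0), ("group_b", 75.0, 80.0), ("group_b", 82.0, 90.0),
]

mean_diff, gap, flagged = audit_by_group(RECORDS)
print(mean_diff, gap, "needs review" if flagged else "within tolerance")
```

In this made-up sample the AI scores one group about as the human judges do and the other several points lower, which is the kind of pattern that should trigger a closer look.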

Pricing and budget

The cost of AI-powered judging software varies widely depending on the platform, the number of submissions, and the features you need. Most platforms offer a range of pricing plans to suit different budgets. Per-entry fees are common, especially for smaller competitions. You pay a small fee for each submission that is judged by the AI.

Subscription plans are typically offered for organizations that run multiple competitions annually. These plans usually provide a fixed number of submissions per month or year. Custom quotes are available for large-scale competitions with unique requirements. Be sure to factor in potential hidden costs, such as data storage fees or support charges.

It’s difficult to provide precise pricing information, as it changes frequently. However, expect to pay anywhere from a few dollars per entry to several hundred dollars per month for a subscription plan. The cost of API access or custom integration may also add to the overall expense. Consider the long-term return on investment. The time savings and improved efficiency offered by AI judging can often justify the cost.
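When weighing per-entry fees against a subscription, a quick break-even calculation helps. The figures below are hypothetical and chosen only to fall within the broad ranges mentioned above; substitute the quotes you actually receive.

```python
import math


def break_even_entries(per_entry_fee, monthly_subscription, months):
    """Smallest number of entries at which per-entry pricing costs at
    least as much as the subscription over the same period."""
    if per_entry_fee <= 0:
        raise ValueError("per_entry_fee must be positive")
    return math.ceil(monthly_subscription * months / per_entry_fee)


# Hypothetical prices: $3 per entry vs. $200 per month for a 3-month contest.
entries_needed = break_even_entries(per_entry_fee=3.0, monthly_subscription=200.0, months=3)
print(f"The subscription pays off from {entries_needed} entries")
```

Below that volume, per-entry pricing is the cheaper option. Remember to add any storage, support, or integration charges to both sides before comparing.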

What comes next

The future of AI judging is bright. We’re already seeing the emergence of new technologies that promise to further enhance the capabilities of these platforms. Generative AI could be used to create judging rubrics tailored to specific competitions, or even to provide personalized feedback to participants. This would automate a significant amount of the judging process.

Explainable AI (XAI) is another promising development. XAI aims to make AI decision-making more transparent and understandable. This would allow judges to see why an AI made a particular decision, increasing trust and accountability. It addresses the "black box" problem that plagues many AI systems.

We can also expect to see greater integration of AI with virtual and augmented reality. Imagine judges being able to virtually "walk through" a design submission or "experience" a performance in a simulated environment. This would provide a more immersive and realistic evaluation experience. The trend toward hybrid events will likely accelerate, further driving demand for AI-powered judging tools.

The goal is to let AI handle the data while humans make the final call. Even as these tools get better at spotting patterns, the final decision stays with experienced human judges. The future of competition judging is a collaborative one, where humans and AI work together to ensure fairness, consistency, and innovation.
