The Rise of AI in Competition Judging: Why Now?

Competitions, in all their forms – from art and writing contests to business plan pitches and coding challenges – are experiencing an explosion in participation. This increased volume presents a significant challenge to traditional judging methods. What once worked for a few dozen entries simply doesn't scale to hundreds or thousands. Relying solely on human judges becomes incredibly time-consuming and expensive.

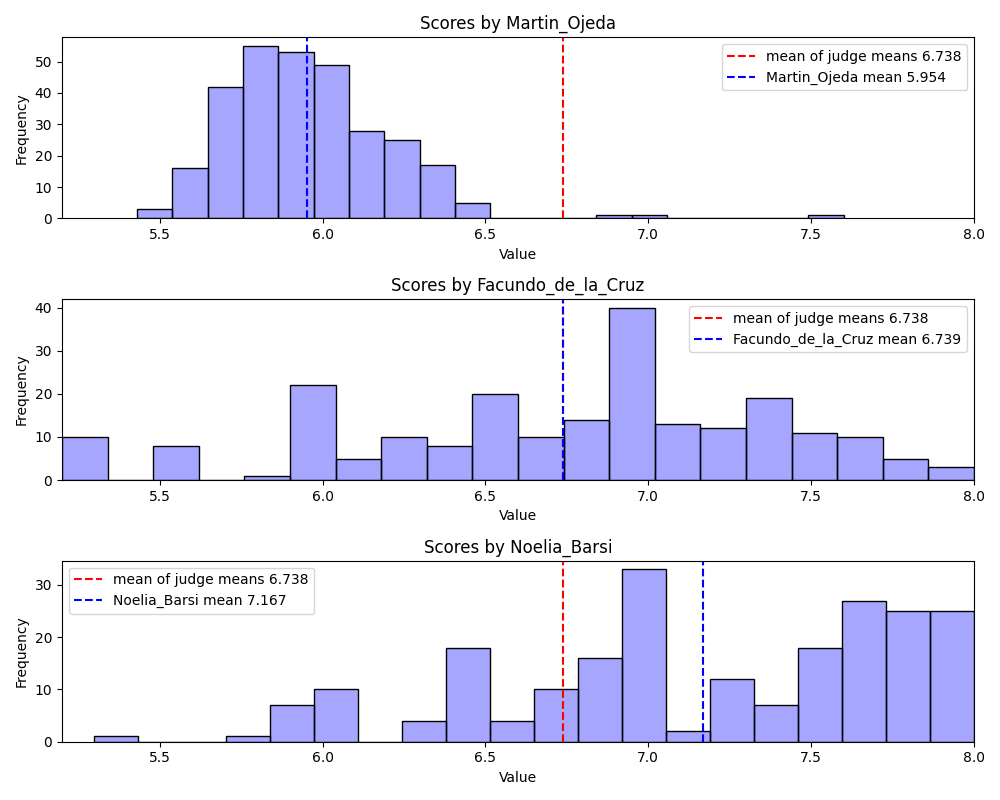

Human judging has limits. Subjectivity and bias can lead to unfair outcomes. Fatigue also erodes consistency when one person reviews 500 essays in a weekend. Coordinating judges' schedules across time zones is a logistical mess.

Automated scoring isn’t new. For decades, we’ve used multiple-choice tests and simple algorithms to assess performance. However, current advancements in artificial intelligence, particularly in natural language processing and computer vision, have unlocked possibilities previously considered science fiction. AI can now analyze complex data – text, images, audio, video – with a level of speed and consistency that humans can’t match.

This isn’t about replacing human expertise entirely. Instead, AI offers a powerful toolkit to augment the judging process. It can handle the initial screening of submissions, identify potential plagiarism, flag outliers, and provide a preliminary scoring based on pre-defined criteria. This allows human judges to focus their attention on the most promising entries and make more informed, nuanced decisions.

"this is same as judging software engineers based on line of code they wrote" (Chandan, @chandan1_, April 12, 2026)

Key Features to Look for in AI Judging Software

When evaluating AI judging software, don’t get distracted by buzzwords. Focus on practical features that address the real needs of your competition. The ability to handle diverse submission formats is paramount. Your software should seamlessly accept text documents, image files, video submissions, audio recordings, and even code snippets, depending on the nature of your competition.

Plagiarism detection is non-negotiable. A robust system can scan submissions for originality and flag potential instances of academic dishonesty or copyright infringement. Customizable scoring rubrics are also essential. You need to be able to define the criteria for evaluation and assign weights to different aspects of the submission. A rigid, one-size-fits-all scoring system won't work for most competitions.
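To make "assign weights to different aspects" concrete, here is a minimal sketch of weighted rubric scoring. It assumes each criterion has its own point scale, normalizes every criterion to 0..1 before weighting, and reports a 0-100 total. `Criterion` and `weighted_score` are hypothetical names for illustration, not any platform's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float      # relative importance; weights need not sum to 1
    max_points: float  # top of this criterion's own scale


def weighted_score(rubric: list[Criterion], scores: dict[str, float]) -> float:
    """Return a 0-100 score: normalize each criterion to its scale, then take a weighted average."""
    total_weight = sum(c.weight for c in rubric)
    if total_weight <= 0:
        raise ValueError("rubric weights must sum to a positive number")
    combined = 0.0
    for c in rubric:
        raw = scores[c.name]  # raises KeyError if a judge skipped a criterion
        if not 0 <= raw <= c.max_points:
            raise ValueError(f"{c.name}: {raw} is outside 0..{c.max_points}")
        combined += c.weight * (raw / c.max_points)
    return 100 * combined / total_weight


# Hypothetical rubric: presentation is scored out of 5, the others out of 10.
rubric = [
    Criterion("originality", weight=0.4, max_points=10),
    Criterion("execution", weight=0.4, max_points=10),
    Criterion("presentation", weight=0.2, max_points=5),
]
print(weighted_score(rubric, {"originality": 8, "execution": 6, "presentation": 5}))
```

Normalizing per criterion means a 5-point "presentation" scale and a 10-point "originality" scale contribute only through their weights, not through the size of their scales.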

Blind judging helps reduce bias. The software needs to anonymize submissions so judges don't see names or demographics. It should also connect to your registration and payment tools to save time on data entry. Judgify is one option that bundles these features.
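One way blind judging can work under the hood is to strip identifying fields and replace the participant ID with a stable pseudonym that only the organizer can map back. The sketch below assumes a keyed HMAC-SHA256 pseudonym and a hypothetical set of field names (`IDENTIFYING_FIELDS`, `pseudonym`, `blind` are illustrative, not a vendor API). It does not scrub names that entrants write into the submission body itself.

```python
import hashlib
import hmac

# Hypothetical registration fields that would reveal who the entrant is.
IDENTIFYING_FIELDS = {"name", "email", "school", "country", "gender"}


def pseudonym(participant_id: str, secret_key: bytes) -> str:
    """Stable, non-reversible label: HMAC-SHA256 of the ID, truncated for display."""
    digest = hmac.new(secret_key, participant_id.encode("utf-8"), hashlib.sha256)
    return "ENTRY-" + digest.hexdigest()[:12]


def blind(submission: dict, secret_key: bytes) -> dict:
    """Return a judge-facing copy with identifying fields and the raw ID removed."""
    judged = {
        k: v
        for k, v in submission.items()
        if k not in IDENTIFYING_FIELDS and k != "participant_id"
    }
    judged["entry_id"] = pseudonym(submission["participant_id"], secret_key)
    return judged
```

Using a secret key (kept server-side, for example generated with `secrets.token_bytes(32)`) rather than a plain hash stops anyone from recomputing pseudonyms from a list of known participant IDs.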

Don’t overlook the importance of audit trails and data security. You need a clear record of all judging activity, including scores, comments, and any changes made. The software should also comply with relevant data privacy regulations and protect sensitive participant information. Look for platforms that offer encryption and secure data storage.
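A common way to make an audit trail tamper-evident is a hash chain: each entry stores the hash of the one before it, so silently editing an old score breaks verification. This is a minimal sketch under that assumption (`AuditLog` and its methods are hypothetical). It detects tampering rather than preventing it; someone able to rewrite the entire log could recompute every hash, so the latest hash should also be stored somewhere the log's editors cannot reach.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only log; each entry carries the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(record: dict) -> str:
        # Canonical JSON (sorted keys, fixed separators) so a record always hashes the same way.
        payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def append(self, judge: str, action: str, detail: dict) -> dict:
        record = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "judge": judge,
            "action": action,
            "detail": detail,
            "prev": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry = {**record, "hash": self._digest(record)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link back to the previous entry."""
        prev = self.GENESIS
        for entry in self.entries:
            record = {k: v for k, v in entry.items() if k != "hash"}
            if record["prev"] != prev or self._digest(record) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```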

- Submission Format Support: Text, image, video, audio, code

- Plagiarism Detection: Robust originality checks

- Customizable Rubrics: Define evaluation criteria & weights

- Blind Judging: Anonymize submissions to reduce bias

- Integration: Connect with existing competition systems

- Audit Trails & Security: Secure data storage & compliance

Judgify: A Deep Dive into One Platform

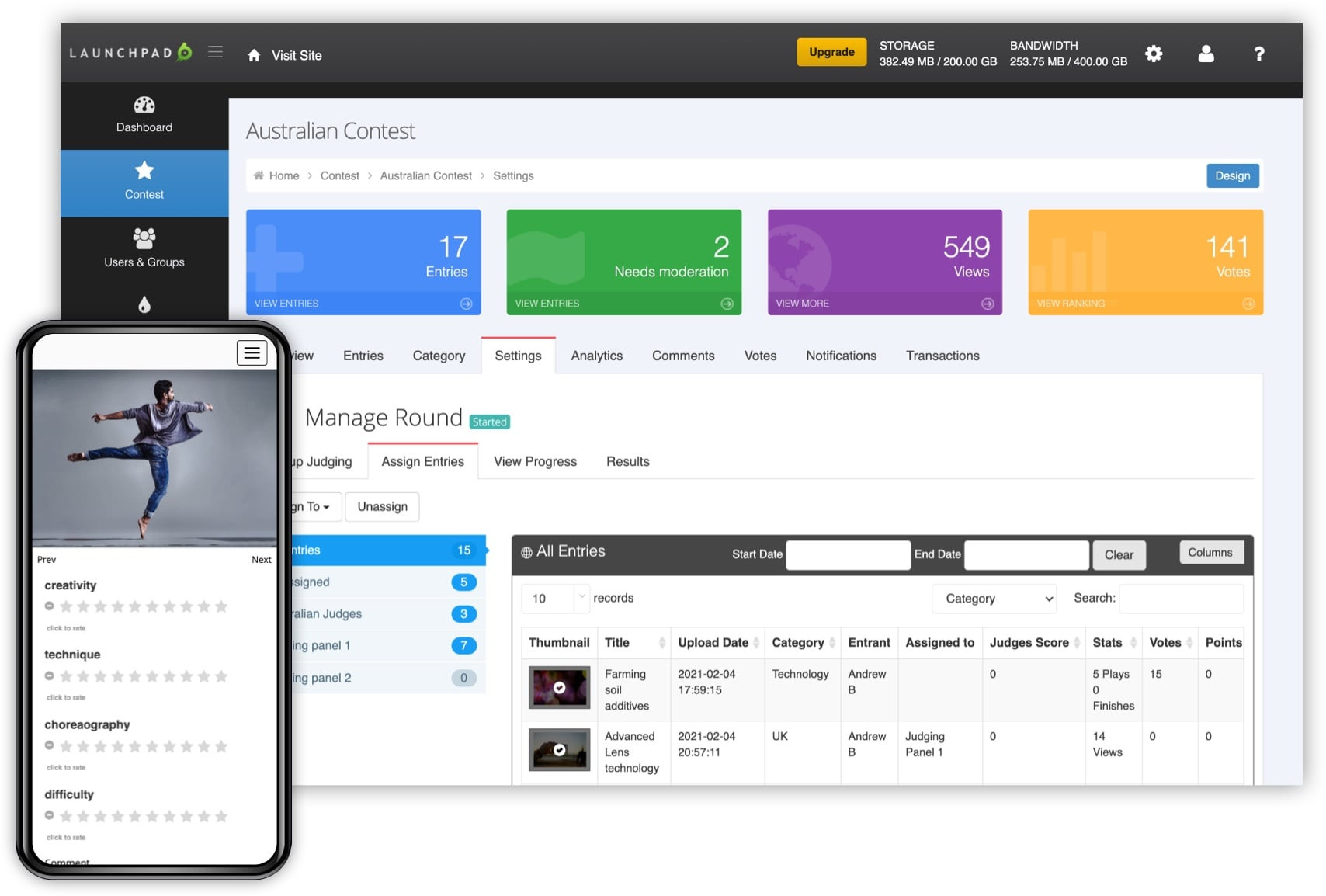

Judgify (judgify.me) positions itself as a comprehensive contest management system, encompassing everything from planning and submissions to judging and reporting. Their platform supports a wide range of event types, including academic contests, creative challenges, and business competitions. A key strength appears to be its branding and promotion tools, allowing organizers to create a professional and engaging experience for participants.

The judging management features within Judgify are fairly standard. They offer customizable scoring rubrics, blind judging options, and the ability to assign judges to specific submissions. Their "Advanced Scoring and Reporting" module promises detailed analytics and insights, but specifics on the types of reports available are limited. The platform also supports public voting, which can be useful for certain types of competitions.

A potential weakness is the platform’s complexity. With so many features packed into one system, it could be overwhelming for organizers who only need basic judging functionality. The user interface, while visually appealing, might have a steeper learning curve compared to more specialized AI judging platforms. Integration with third-party tools outside their specific ecosystem is difficult to verify without a custom demo.

Evalato and Beyond: Comparing the Competition

Evalato (evalato.com) distinguishes itself by focusing specifically on awards management software. Unlike Judgify’s broader scope, Evalato targets organizations running award programs – a subtly different but important distinction. They emphasize features like nomination collection, eligibility verification, and judging workflow automation. Their blog offers a wealth of resources on best practices for awards management.

Score Judge (scorejudge.com) takes a different approach, emphasizing real-time scoring and live leaderboards. This platform is particularly well-suited for competitions that involve live performance or ongoing challenges, such as coding competitions or sales contests. It allows participants to track their progress and see how they stack up against the competition. However, it appears less focused on the pre-judging phases like submission management.
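As an illustration of what a live leaderboard has to compute, the sketch below keeps running totals and ranks them with standard competition ranking (tied totals share a rank and the next rank is skipped: 1, 2, 2, 4). This is not Score Judge's implementation; `Leaderboard` is a hypothetical class, and the tie rule is one common convention among several.

```python
from collections import defaultdict


class Leaderboard:
    """Running point totals per participant with standard competition ranking."""

    def __init__(self) -> None:
        self.totals: dict[str, float] = defaultdict(float)

    def record(self, participant: str, points: float) -> None:
        # Called each time a judge or automated check awards points.
        self.totals[participant] += points

    def standings(self) -> list[tuple[int, str, float]]:
        # Highest total first; names only break ties for display order, not rank.
        ordered = sorted(self.totals.items(), key=lambda kv: (-kv[1], kv[0]))
        table: list[tuple[int, str, float]] = []
        for position, (name, total) in enumerate(ordered, start=1):
            if table and total == table[-1][2]:
                rank = table[-1][0]  # same total as the row above: share its rank
            else:
                rank = position
            table.append((rank, name, total))
        return table
```

Ties are detected with exact equality, which is safe for integer points; fractional scores may need rounding first.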

A direct feature-by-feature comparison is challenging due to the lack of publicly available detailed information. Many platforms are hesitant to reveal the specifics of their AI algorithms. However, generally speaking, Judgify offers a more comprehensive suite of tools for managing the entire competition lifecycle, while Evalato excels at awards-specific workflows and Score Judge shines in real-time scoring scenarios.

The pricing models vary considerably. Judgify offers customized pricing based on event size and features, while Evalato provides subscription plans starting at around $299 per month. Score Judge is currently in a "brand new" phase and offers a free sign-up with the intention of gathering user feedback. This suggests that pricing is still being finalized. Ultimately, the best platform depends on the specific needs and budget of your competition.

- Judgify: All-in-one contest management

- Evalato: Awards-focused workflow

- Score Judge: Real-time scoring & leaderboards

AI-Powered Judging Software Comparison – 2026

| Platform | Primary Focus | Scoring Flexibility | Ease of Use | Integration Options | Overall Value |
|---|---|---|---|---|---|
| Judgify | Broad – adaptable to many competition types, strong in arts & culture | High – Customizable rubrics, weighted scoring, blind judging options | Medium – Feature-rich, potentially steeper learning curve for basic use cases | API access reported, details limited. Integrations with common marketing platforms likely. | Good – Comprehensive feature set, suitable for complex competitions. |
| Evalato | Science Fairs, Academic Competitions, Innovation Challenges | Medium – Primarily focused on rubric-based scoring, with some weighting capabilities | High – Designed for streamlined judging workflows, intuitive interface | Limited publicly available information. Likely integrates with standard academic platforms. | Good – Excellent for specific academic competition needs, good usability. |
| CompetitionSuite | Business Pitch Competitions, Startup Challenges | Medium – Supports scoring criteria and feedback, but less granular customization than Judgify | Medium – Focus on pitch deck review and scoring, may require adjustment for other formats | Integration with common presentation software reported, details limited. | Trade-off – Specialized for pitch competitions, less versatile for other event types. |
| AwardSpring | Scholarships, Grant Applications, Awards Programs | Low – Primarily focused on application review and ranking, limited scoring customization | High – Simple, user-friendly interface, easy for judges to navigate | Limited publicly available information. Likely integrates with student information systems. | Good – Best suited for straightforward application review processes. |
| Qualtrics Survey Platform (with scoring add-ons) | Highly adaptable, can be configured for various competition types | High – Leverages Qualtrics’ survey logic for complex scoring scenarios | Medium – Requires expertise in Qualtrics survey design and scoring features | Extensive – Works with Qualtrics’ wide range of existing integrations. | Trade-off – Requires existing Qualtrics license and expertise, potentially higher cost. |
| Formstack Documents | Document-based competitions, essay contests | Medium – Scoring based on document content, limited rubric customization | Medium – Focus on document automation, judging interface is functional but not optimized | Integrates with various document storage and CRM systems. | Better for – Competitions heavily reliant on document submissions and automated scoring. |

Qualitative comparison based on research for this article. Confirm current product details in each vendor's official documentation before making implementation choices.

The Role of Human Oversight: AI as a Tool, Not a Replacement

AI judging doesn't eliminate human judges. It's about empowering them. The most effective approach involves a hybrid model, where AI handles the initial stages of the process and human judges provide the final assessment. AI can efficiently pre-screen submissions, identify potential plagiarism, and assign preliminary scores based on pre-defined criteria.
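A simple version of the "identify potential plagiarism" pre-screen is near-duplicate detection within the submission pool. The sketch below swaps in a classic technique, word n-gram shingles compared with Jaccard similarity; commercial tools may work very differently. The functions, the 5-word shingle size, and the 0.5 threshold are all hypothetical defaults. It only compares entries against each other, not against the web, and the all-pairs loop suits hundreds of entries; larger pools usually call for MinHash or locality-sensitive hashing.

```python
import re
from itertools import combinations


def shingles(text: str, n: int = 5) -> set[tuple[str, ...]]:
    """Overlapping n-word sequences after lowercasing and dropping punctuation."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection over size of the union; 0.0 when both are empty."""
    return len(a & b) / len(a | b) if (a or b) else 0.0


def flag_similar_pairs(submissions: dict[str, str], n: int = 5, threshold: float = 0.5):
    """Return (id_a, id_b, similarity) for every pair at or above the threshold."""
    sets = {sid: shingles(text, n) for sid, text in submissions.items()}
    flagged = []
    for a, b in combinations(sorted(sets), 2):
        score = jaccard(sets[a], sets[b])
        if score >= threshold:
            flagged.append((a, b, round(score, 3)))
    return flagged
```

Flagged pairs are a prompt for a human judge to look, not a verdict: shared quotations or a common prompt can legitimately produce overlap.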

However, AI lacks the nuanced understanding and critical thinking skills of a human judge. It may struggle with subjective elements, such as creativity, originality, and artistic merit. Human judges are essential for evaluating these qualities and ensuring that the final results are fair and accurate. They can also identify potential biases in the AI’s scoring and make adjustments accordingly.

Best practices include using AI to flag submissions that deviate significantly from the norm, either positively or negatively. This allows human judges to focus their attention on the most exceptional or problematic entries. AI can also provide judges with a summary of the submission's key features, helping them to make more informed decisions. Transparency is paramount – judges should be aware of how the AI is being used and have access to the underlying data.
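Flagging "submissions that deviate significantly from the norm" can be as simple as a z-score over the preliminary scores. This minimal sketch assumes a cutoff of 2 standard deviations (a hypothetical default) and flags entries in both directions. In small pools a single extreme score inflates the standard deviation and compresses its own z-score, so a median-based measure may be more robust there.

```python
from statistics import mean, stdev


def flag_outliers(scores: dict[str, float], z_cutoff: float = 2.0) -> dict[str, float]:
    """Return {entry_id: z_score} for entries more than z_cutoff standard
    deviations above or below the pool mean."""
    if len(scores) < 3:
        return {}  # too few entries for a meaningful spread
    mu = mean(scores.values())
    sigma = stdev(scores.values())  # sample standard deviation
    if sigma == 0:
        return {}  # every entry scored the same; nothing stands out
    return {
        entry: round((s - mu) / sigma, 2)
        for entry, s in scores.items()
        if abs(s - mu) / sigma > z_cutoff
    }
```

Keeping the sign of the z-score lets judges see whether an entry was flagged as unusually strong or unusually weak.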

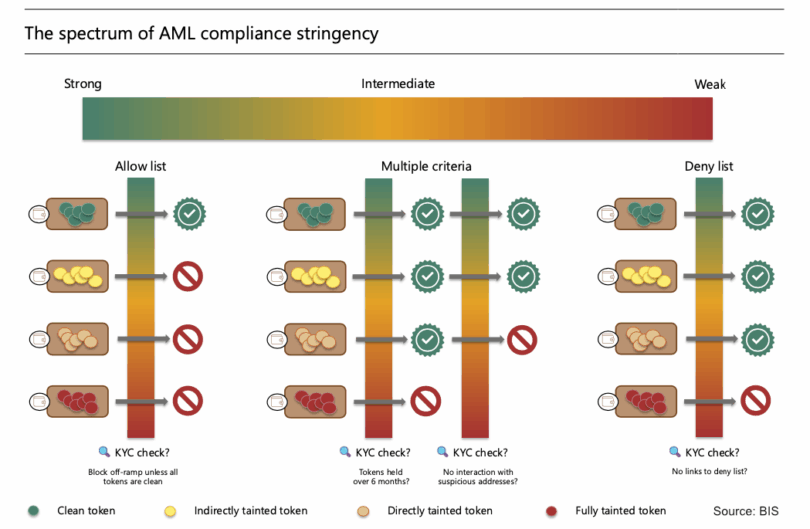

Ethical considerations are crucial. It’s important to ensure that the AI algorithms are not biased and that the judging process is fair and equitable. Competition organizers should be transparent with participants about how AI is being used and provide them with an opportunity to appeal the results.

AI Judging Platforms (2026)

- Judgify - Offers AI-powered scoring and ranking for various competition types, including hackathons and pitch contests. Focuses on rubric-based evaluation with customizable weighting. Integrates with popular event management systems.

- ReviewTrackers - While primarily a reputation management tool, ReviewTrackers’ AI-driven sentiment analysis is increasingly utilized by competition organizers to analyze qualitative feedback from judges, identifying key themes and potential biases. Offers API access for custom integration.

- Amazon Comprehend - A natural language processing (NLP) service allowing organizers to build custom judging tools. Judges can input free-text comments, and Comprehend can perform sentiment analysis, key phrase extraction, and entity recognition to aid in evaluation. Requires significant technical expertise for implementation.

- Google Cloud Natural Language API - Similar to Amazon Comprehend, Google’s offering provides NLP capabilities for analyzing judge feedback. Features include sentiment analysis, entity analysis, and syntax analysis. Also requires developer resources for integration into a competition platform.

- Microsoft Azure AI Language - Provides a suite of NLP features including sentiment analysis, key phrase extraction, and language detection. Can be used to process judge comments and identify areas of consensus or disagreement. Requires Azure subscription and development effort.

- Qualtrics XM - Often used for survey data, Qualtrics’ Text iQ feature leverages AI to analyze open-ended responses from judges, providing insights into common themes and sentiment. Can be integrated with Qualtrics’ broader survey and data analysis tools.

- Biasly - Specifically designed to mitigate bias in evaluations, Biasly uses machine learning to identify potential subjective influences in judge scoring. Offers features for blind judging and automated feedback analysis. Focuses on text-based submissions.
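To show what "identify areas of consensus or disagreement" in judge comments might look like with one of these services, here is a sketch built on Amazon Comprehend's `detect_sentiment` call via boto3, which takes `Text` and `LanguageCode` and returns a `Sentiment` label (`POSITIVE`, `NEGATIVE`, `NEUTRAL`, or `MIXED`). The helpers `comment_sentiment_by_entry` and `split_opinions` are hypothetical, and so is the rule that one positive plus one negative comment counts as disagreement. The client is passed in so the logic can be tried without AWS credentials.

```python
from collections import Counter


def comment_sentiment_by_entry(client, comments: list[tuple[str, str]]) -> dict[str, Counter]:
    """Tally sentiment labels per entry.

    `client` is assumed to be a boto3 Comprehend client, e.g.
    boto3.client("comprehend", region_name="us-east-1") (region is an assumption);
    `comments` is a list of (entry_id, judge_comment) pairs.
    """
    tallies: dict[str, Counter] = {}
    for entry_id, text in comments:
        resp = client.detect_sentiment(Text=text, LanguageCode="en")
        tallies.setdefault(entry_id, Counter())[resp["Sentiment"]] += 1
    return tallies


def split_opinions(tallies: dict[str, Counter]) -> list[str]:
    """Entries where at least one comment was POSITIVE and another NEGATIVE."""
    return sorted(e for e, c in tallies.items() if c["POSITIVE"] and c["NEGATIVE"])
```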

Future Trends: What's Next for AI Judging?

The future of AI in competition judging is incredibly promising. Advancements in natural language processing (NLP) will enable AI to better understand and evaluate the meaning and intent behind written submissions. Computer vision will allow AI to analyze images and videos with greater accuracy, identifying subtle details and patterns that humans might miss.

Machine learning algorithms will become more sophisticated, capable of learning from past competitions and adapting to new criteria. This could lead to personalized judging experiences, where the AI tailors its evaluation based on the individual participant’s strengths and weaknesses. AI could also provide more detailed feedback to participants, helping them to improve their skills.

We might see the emergence of AI-powered "virtual judges" that can provide real-time feedback during live competitions. Imagine a coding competition where AI analyzes the code as it's being written, providing suggestions for improvement and identifying potential errors. Or a debate competition where AI analyzes the arguments being made and provides feedback on their logical soundness.

However, challenges remain. Ensuring fairness, preventing manipulation, and maintaining trust are ongoing concerns. We need to develop robust safeguards to prevent participants from gaming the system and to ensure that the AI algorithms are not biased. The key is to embrace AI as a tool to enhance human judgment, not to replace it entirely.

Addressing Common Concerns: Bias, Security, and Data Privacy

Algorithmic bias is a legitimate concern. AI algorithms are trained on data, and if that data reflects existing societal biases, the algorithm can perpetuate those biases. Competition organizers must carefully vet the platforms they choose, asking about the data used to train the AI and the steps taken to mitigate bias. Regular audits of the AI’s scoring can help to identify and correct any unintended biases.
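One possible form of a "regular audit" compares the AI's preliminary scores with human judges' final scores on the same entries, grouped by a participant attribute held by organizers (never shown to judges). The sketch assumes hypothetical record fields (`group`, `ai_score`, `human_score`) and a 5-point tolerance. It only measures how the AI differs from the humans, who may carry biases of their own, and small groups produce noisy averages.

```python
from collections import defaultdict
from statistics import mean


def score_gap_by_group(records: list[dict], tolerance: float = 5.0) -> dict[str, dict]:
    """Average (AI score - human score) per group; flag groups whose average gap
    differs from the overall average gap by more than `tolerance` points."""
    gaps: dict[str, list[float]] = defaultdict(list)
    for r in records:
        gaps[r["group"]].append(r["ai_score"] - r["human_score"])
    overall = mean(g for values in gaps.values() for g in values)
    report = {}
    for group, values in sorted(gaps.items()):
        avg = mean(values)
        report[group] = {
            "n": len(values),
            "mean_gap": round(avg, 2),
            "flag": abs(avg - overall) > tolerance,
        }
    return report
```

A flagged group means the AI systematically scores that group lower (or higher) than human judges do, which is a reason to investigate, not proof of bias on its own.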

Data security is paramount. Competitions often involve sensitive personal information, such as contact details and creative works. Choose platforms that offer robust security measures, including encryption, access controls, and regular security audits. Ensure that the platform complies with relevant data privacy regulations, such as GDPR and CCPA.

Transparency is key to building trust. Participants should be informed about how their data is being used and have the right to access and correct their information. Competition organizers should also be transparent about the AI judging process, explaining how the algorithms work and how human judges are involved. It's vital to have clear terms of service outlining data usage and privacy policies.

The legal landscape surrounding AI judging is still evolving. Be aware of potential liability issues and consult with legal counsel to ensure that your competition complies with all applicable laws and regulations. Proactive measures to address bias, security, and privacy will not only protect your competition but also build trust with participants and stakeholders.
