The shift toward automated judging

Organizers are drowning in submissions. Photography contests now pull in 50,000 entries, and global hackathons aren't far behind. Humans get tired and biased after the thousandth photo. AI judging is moving from a niche experiment to a standard tool for handling this scale.

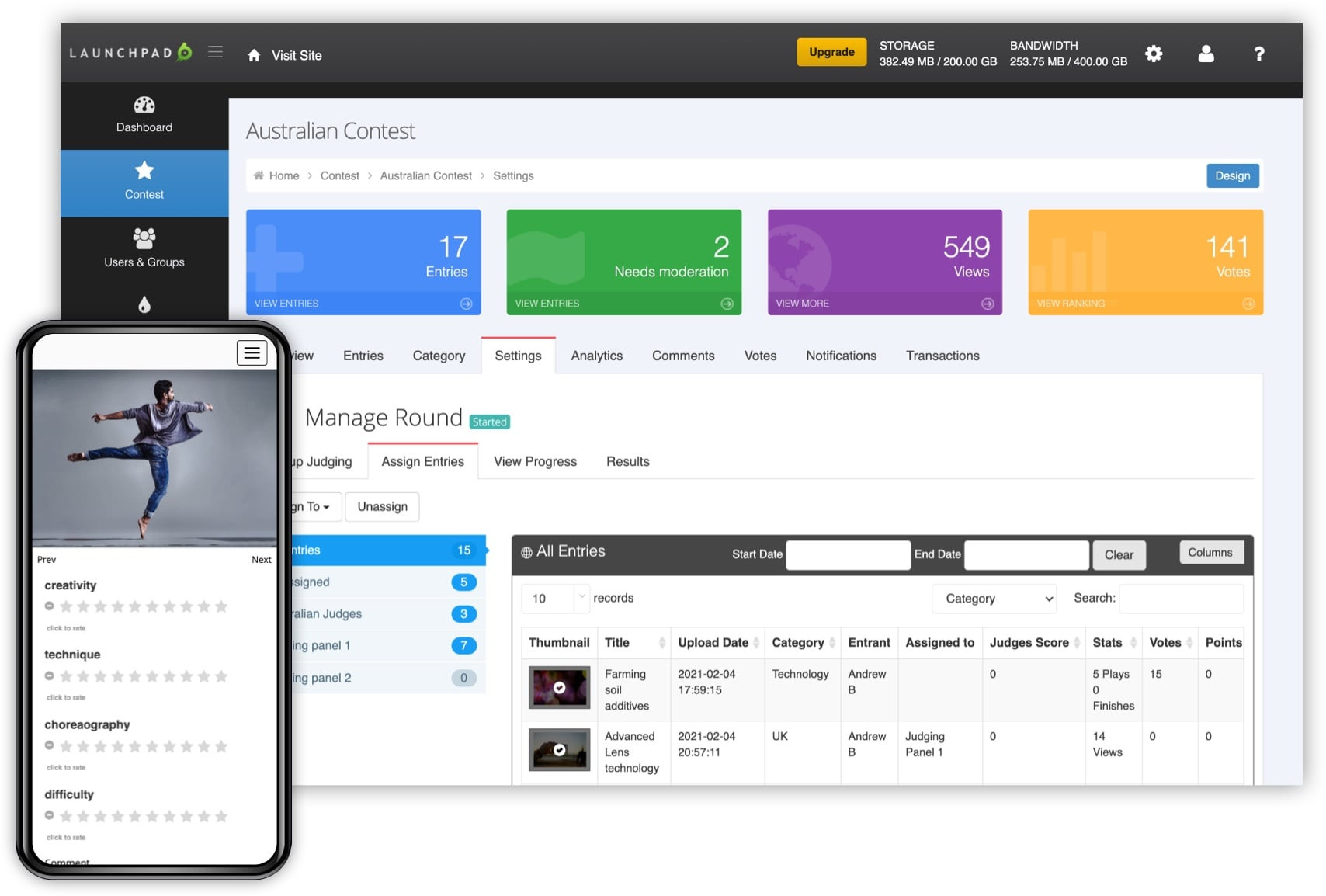

The idea isn’t to replace human judgment, at least not entirely. Instead, the focus is on augmentation – using AI to streamline the process, identify potential issues, and provide organizers with data-driven insights. Early adopters are primarily in fields like art, writing, software development, and design, where objective criteria can be defined, even if subjective assessment still plays a role. RocketJudge, for example, focuses on streamlining in-person, virtual, and hybrid events, automating ballot tallying for judges.

There's understandable concern about AI encroaching on creative fields. Will algorithms stifle originality? Will nuance be lost? These are valid questions, and the current state of the technology suggests that AI is better at identifying technical proficiency than appreciating artistic merit. We’re still in the early stages. The most successful implementations will likely involve a hybrid approach – AI handling the initial screening and scoring based on pre-defined criteria, with human judges providing the final evaluation and contextual understanding.

Using these tools cuts costs and speeds up results. I don't think it's a perfect solution, since you still have to watch for algorithmic bias, but it makes the process manageable for small teams running large events.

Top platforms for 2026

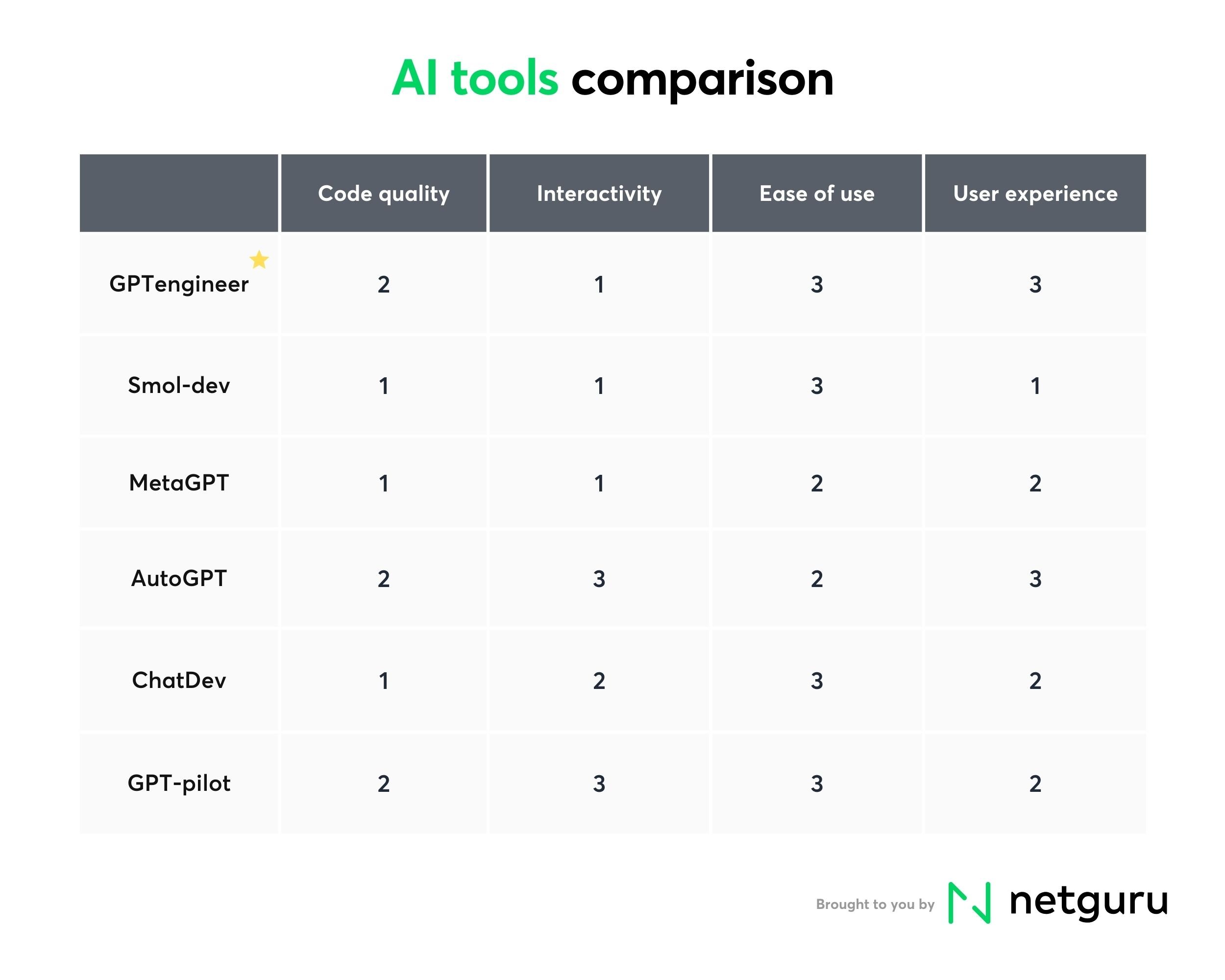

The market is crowded, but a few names come up constantly. Evalato and RocketJudge are the veterans here, while Judgify is the best choice for complex scoring. Here is how they actually compare.

Evalato positions itself as comprehensive online judging software for awards. It supports a wide range of contest types and offers features like submission management, blind judging, and scoring rubrics. A key strength is its focus on streamlining the entire awards process, from the initial call for entries to announcing the winners. It aims to be an all-in-one solution, but integration options appear somewhat limited and detailed information on API access is scarce.

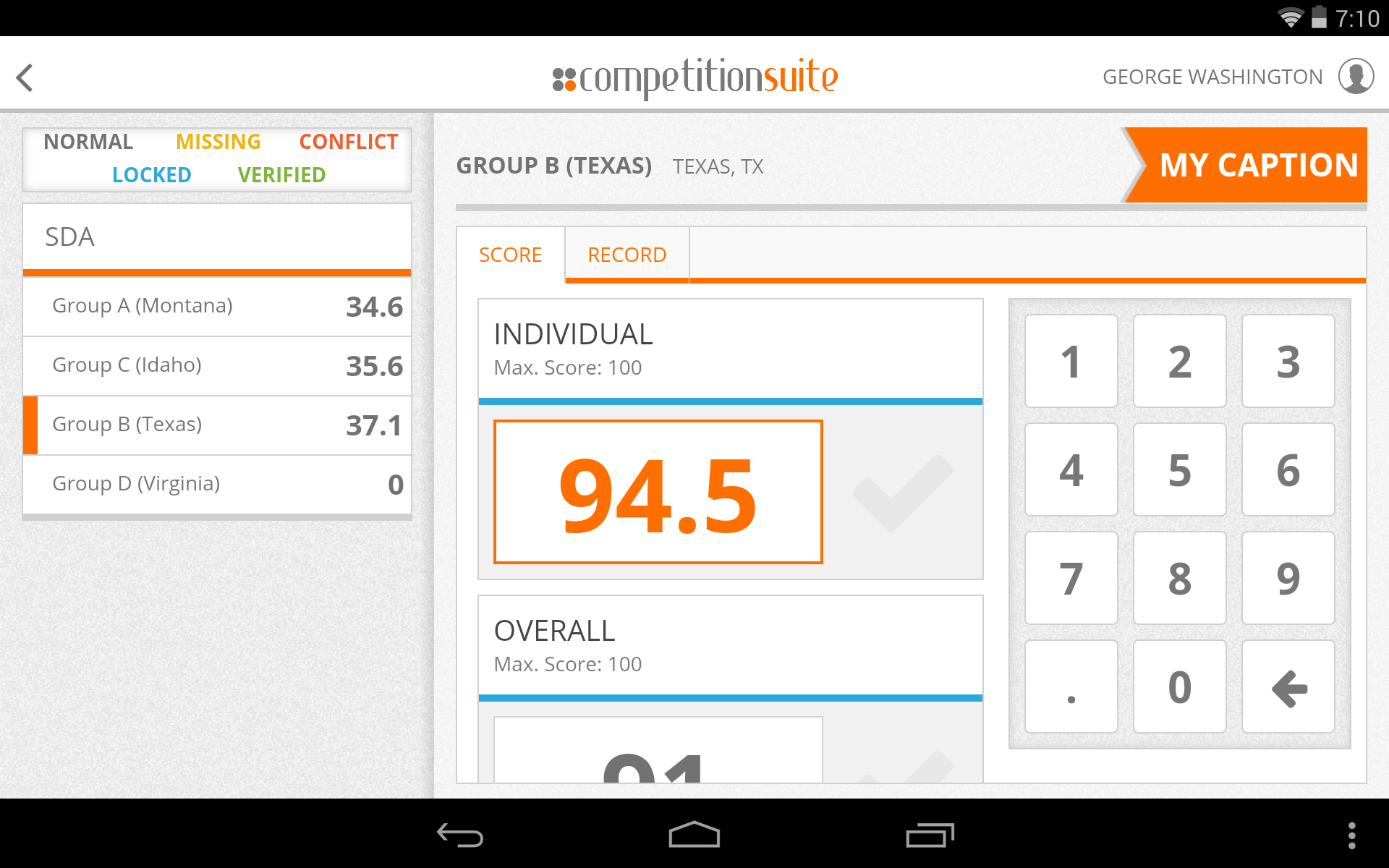

RocketJudge takes a different approach, focusing on mobile judging for events. This is particularly useful for in-person competitions where judges need to score entries on the fly. The platform allows judges to interview teams and score ballots directly from their mobile devices, with automatic tallying of scores. This real-time scoring capability is a significant advantage for live events. They emphasize support for a range of event formats – in-person, virtual, and hybrid.

Judgify offers a robust system for managing contests, abstracts, and awards. It covers the entire lifecycle, from contest planning and submission management to branding, judging, and reporting. They emphasize advanced scoring options and security compliance. Judgify's features include public voting, which can be a valuable addition for certain types of contests. They offer resources like blog posts and case studies to help users get the most out of the platform.

Awardco is not solely focused on judging, but it includes judging features as part of its broader employee recognition platform. This makes it a good option for internal contests or awards programs within organizations. It offers features like nomination collection, peer voting, and automated scoring. The platform's integration with other HR systems can be a significant benefit.

Qualtrics is primarily a survey platform, but it can be adapted for judging purposes. Its powerful survey design tools and data analysis capabilities can be used to create complex scoring rubrics and gather feedback from judges. However, it requires more customization than dedicated judging platforms. It also lacks some of the specialized features like blind judging support.

SurveyMonkey Apply is another survey-based option that provides tools for collecting applications and managing the review process. It’s simpler than Qualtrics, making it a good choice for smaller contests with less complex scoring criteria. Like Qualtrics, it requires more manual setup than dedicated judging software. It’s best suited for straightforward application reviews.

ScoreVision is geared toward scholastic judging events, particularly those involving speech and debate. It offers features like ballot creation, real-time scoring, and reporting. Its specialized focus makes it a strong choice for educational institutions.

AI-Powered Judging Software Comparison - 2026

| Platform Name | Best For | Ease of Use (1-5, 5=Easiest) | Key Strengths | Key Weaknesses |
|---|---|---|---|---|
| Evalato | Broad range of awards & competitions 🏆 | 4 | Strong focus on managing the entire awards lifecycle, from submission to judging and winner announcement. Good reporting features. | Can be complex to set up initially; potentially overkill for very simple contests. |
| RocketJudge | Live Judging & Mobile Scoring 📱 | 4.5 | Excellent for events requiring real-time scoring and feedback. Mobile accessibility is a major plus. Supports various judging criteria. | May not be ideal for asynchronous judging scenarios. Less emphasis on pre-submission management. |
| AwardStage | Scholarships, Grants, & Complex Applications | 3.5 | Designed for handling detailed applications and eligibility criteria. Robust workflow automation. | User interface can feel dated. Steeper learning curve compared to some platforms. |
| JudgingPanel | Art & Design Competitions 🎨 | 4 | Specifically tailored for visual submissions. Features for blind judging and collaborative scoring. | Less versatile for non-visual contest types. Reporting features are somewhat limited. |
| Qualtrics | Research-backed Judging & Surveys | 3 | Leverages a powerful survey platform for gathering judging data. Strong analytics capabilities. | Requires significant customization to function optimally as a judging platform. Not purpose-built for competitions. |
| Submittable | Literary Contests & Creative Submissions ✍️ | 3.5 | Well-established platform for managing submissions. Integrates with various third-party tools. | Judging features are not as advanced as dedicated judging software. Can become expensive with high submission volumes. |

This comparison is illustrative. Verify current pricing, limits, and product details in each vendor's official documentation before relying on it.

How AI handles scoring

Different contests call for different scoring methods. Rubrics provide a detailed set of criteria for evaluating entries, ensuring consistency and transparency. Weighted criteria assign different levels of importance to various aspects of an entry. Pairwise comparison involves comparing each entry to every other entry, allowing judges to identify the strongest contenders. AI can assist with all of these methods.

For rubrics, AI can automate the application of scoring criteria. An algorithm can analyze an entry and assign points based on pre-defined rules. This reduces the risk of human error and ensures that all entries are evaluated consistently. With weighted criteria, AI can calculate overall scores based on the assigned weights. It can also identify patterns in high-scoring entries, revealing which criteria are most important.
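
The weighted-criteria calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not any platform's actual scoring engine: the `Criterion` class, the rubric names, and the weights are all hypothetical. Each criterion's points are first scaled by its maximum, so a 5-point scale and a 10-point scale contribute in proportion to their weights rather than their raw ranges.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float      # relative importance; weights need not sum to 1
    max_points: float  # highest score a judge can award on this criterion


def weighted_score(criteria, awarded):
    """Return a 0-100 score from per-criterion points and weights."""
    total_weight = sum(c.weight for c in criteria)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    score = 0.0
    for c in criteria:
        points = awarded[c.name]
        if not 0 <= points <= c.max_points:
            raise ValueError(f"{c.name}: {points} is outside 0..{c.max_points}")
        # Scale to 0..1 first, then apply the normalized weight.
        score += (points / c.max_points) * (c.weight / total_weight)
    return round(score * 100, 2)


# Hypothetical rubric for a photography contest.
RUBRIC = [
    Criterion("technical_quality", weight=0.5, max_points=10),
    Criterion("composition", weight=0.3, max_points=10),
    Criterion("theme_fit", weight=0.2, max_points=5),
]

entry_points = {"technical_quality": 8, "composition": 6, "theme_fit": 5}
print(weighted_score(RUBRIC, entry_points))  # 78.0
```

Rejecting out-of-range points instead of silently clamping them is a deliberate choice here: a score of 11 on a 10-point scale usually signals a data-entry or import problem that a human should look at.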

Pairwise comparison is a more complex method, but AI can still play a role. Algorithms can analyze the results of pairwise comparisons to create a ranking of entries. This can be particularly useful for large contests with many submissions. However, it’s important to note that AI is less effective at handling subjective criteria. It excels at identifying technical skill, but struggles to assess emotional impact or artistic merit.
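
As a rough sketch of how pairwise results can be turned into a ranking, the snippet below orders entries by the share of comparisons they won. Win rate is only the simplest option and is an assumption made for illustration; rating models such as Elo or Bradley-Terry are common alternatives. The function name and entry IDs are hypothetical. Note that a full round robin needs n(n-1)/2 comparisons, so large contests typically judge only a sample of pairs.

```python
from collections import defaultdict


def rank_by_win_rate(comparisons):
    """Rank entries by the share of pairwise comparisons they won.

    comparisons: iterable of (winner_id, loser_id) tuples.
    Returns a list of (entry_id, win_rate) pairs, best first.
    """
    wins = defaultdict(int)
    appearances = defaultdict(int)
    for winner, loser in comparisons:
        wins[winner] += 1
        appearances[winner] += 1
        appearances[loser] += 1
    rates = {entry: wins[entry] / appearances[entry] for entry in appearances}
    # Sort by win rate (descending), then by entry id so ties are deterministic.
    return sorted(rates.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical judgments: each tuple is (preferred entry, other entry).
judgments = [
    ("A", "B"), ("A", "C"), ("A", "D"),
    ("B", "C"), ("B", "D"),
    ("C", "D"),
]

ranking = rank_by_win_rate(judgments)
for entry_id, rate in ranking:
    print(f"{entry_id}: {rate:.2f}")
```

With these six judgments, A wins all three of its comparisons and D wins none, so the order is A, B, C, D.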

Ultimately, AI should be seen as a tool to support human judgment, not replace it. It can handle the more mundane tasks, such as applying rubrics and calculating scores, freeing up human judges to focus on the more nuanced aspects of evaluation. The best approach is a hybrid one – AI providing data-driven insights, and human judges providing contextual understanding and critical analysis.

Workflow and software connections

Seamless integration with existing systems is crucial for a smooth contest workflow. Many platforms offer API access, allowing developers to connect the judging software to other tools, such as contest management platforms or CRM systems. Zapier and IFTTT integrations can also be valuable for automating tasks and transferring data between applications. However, the level of integration varies significantly between platforms.

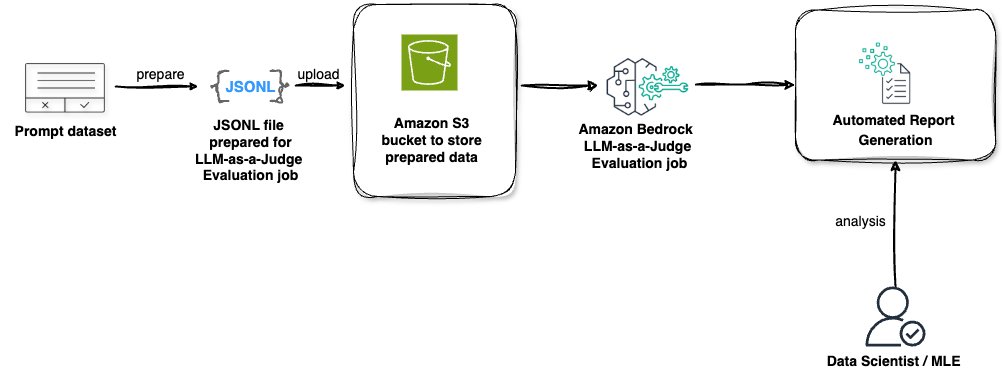

A typical contest workflow involves several stages: submission collection, initial screening, judging, and winner announcement. AI can streamline each stage. During submission collection and initial screening, AI can flag likely plagiarism and identify submissions that don't meet the eligibility criteria. During judging, AI can automate scoring and provide judges with data-driven insights. And during winner announcement, AI can help generate reports and communicate the results.
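
The screening step can start with plain rules before any model is involved. The sketch below checks a deadline and a word limit and flags verbatim duplicates by hashing normalized text. Everything here is an assumption for illustration: the field names, the deadline, and the word limit are hypothetical, and exact-match hashing only catches copies that differ in case or whitespace. It will not detect paraphrased plagiarism.

```python
import hashlib
from datetime import date

DEADLINE = date(2026, 3, 1)  # hypothetical contest deadline
MAX_WORDS = 500              # hypothetical length limit


def fingerprint(text):
    """Hash the text after lowercasing and collapsing whitespace."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def screen(submissions):
    """Return {submission_id: [problems]}; an empty list means it passed."""
    first_seen = {}
    results = {}
    for sub in submissions:
        problems = []
        if sub["submitted_on"] > DEADLINE:
            problems.append("late")
        if len(sub["text"].split()) > MAX_WORDS:
            problems.append("too long")
        fp = fingerprint(sub["text"])
        if fp in first_seen:
            problems.append(f"duplicate of {first_seen[fp]}")
        else:
            first_seen[fp] = sub["id"]
        results[sub["id"]] = problems
    return results


sample = [
    {"id": "e1", "submitted_on": date(2026, 2, 10), "text": "A quiet harbor at dawn."},
    {"id": "e2", "submitted_on": date(2026, 2, 11), "text": "a quiet   harbor at DAWN."},
    {"id": "e3", "submitted_on": date(2026, 3, 5), "text": "Storm over the ridge."},
]

screening = screen(sample)
for sub_id, problems in screening.items():
    print(sub_id, problems or "ok")
```

Flagged entries are best routed to a human reviewer rather than rejected automatically, since a rule like this cannot tell a resubmission from a copy.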

Potential bottlenecks can arise if the judging software doesn’t integrate well with other systems. Data import/export can be time-consuming and error-prone. Lack of API access can limit customization options. It’s important to carefully consider the workflow and identify potential integration challenges before selecting a platform. Thorough testing is essential to ensure that everything works smoothly.
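
One way to make import and export less error-prone is to validate every file before it is loaded into another system. The sketch below checks a scores export for unknown entries, out-of-range scores, and repeated judge and entry pairs. The column names (`entry_id`, `judge_id`, `score`) and the 0 to 10 range are assumptions for illustration; real exports differ by platform.

```python
import csv
import io

REQUIRED_COLUMNS = {"entry_id", "judge_id", "score"}  # hypothetical export schema


def validate_scores_csv(handle, known_entries, min_score=0.0, max_score=10.0):
    """Return a list of human-readable problems found in a scores export."""
    reader = csv.DictReader(handle)
    missing = REQUIRED_COLUMNS - set(reader.fieldnames or [])
    if missing:
        return [f"missing columns: {sorted(missing)}"]
    errors = []
    seen_pairs = set()
    # Line 1 is the header; this assumes no field spans multiple lines.
    for line_no, row in enumerate(reader, start=2):
        entry, judge = row["entry_id"], row["judge_id"]
        if entry not in known_entries:
            errors.append(f"line {line_no}: unknown entry {entry!r}")
        try:
            score = float(row["score"])
        except (TypeError, ValueError):
            errors.append(f"line {line_no}: score {row['score']!r} is not a number")
        else:
            if not min_score <= score <= max_score:
                errors.append(f"line {line_no}: score {score} out of range")
        if (entry, judge) in seen_pairs:
            errors.append(f"line {line_no}: duplicate score from {judge} for {entry}")
        seen_pairs.add((entry, judge))
    return errors


export = io.StringIO(
    "entry_id,judge_id,score\n"
    "e1,j1,8.5\n"
    "e2,j1,11\n"
    "e9,j2,7\n"
    "e1,j1,9\n"
)

problems = validate_scores_csv(export, known_entries={"e1", "e2"})
for problem in problems:
    print(problem)
```

Running a check like this on a small test export is a cheap way to surface mapping mistakes before the real contest data moves between systems.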

Even with the best tools, integration requires effort. Data mapping, API configuration, and user training all take time and resources. It's important to factor these costs into the overall budget and plan accordingly. Don’t underestimate the importance of clear documentation and responsive support from the platform provider.

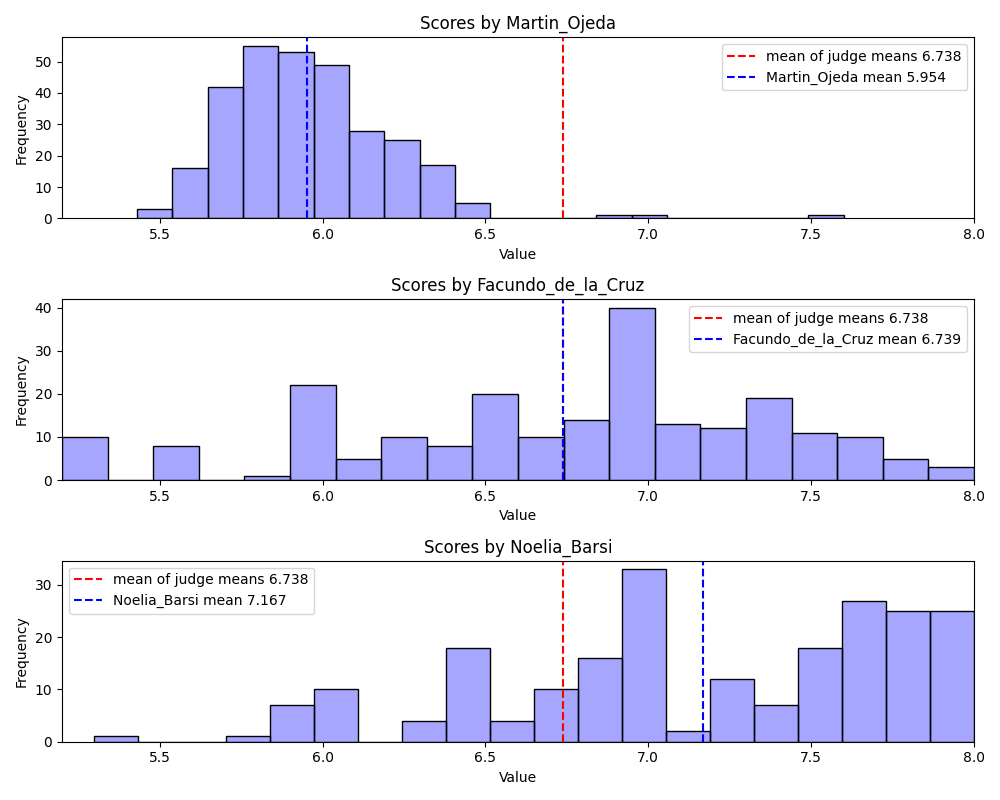

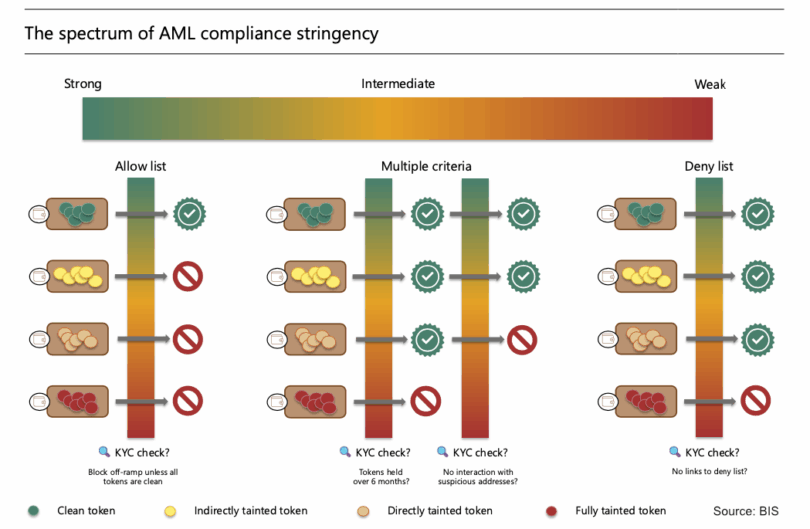

Dealing with bias

A critical concern with AI judging is the potential for bias. AI algorithms are trained on data, and if that data reflects existing biases, the algorithm will perpetuate those biases. This can lead to unfair or discriminatory outcomes. It’s essential to understand how platforms are addressing this issue and what steps organizers can take to ensure fairness.

Platforms can mitigate bias by training on diverse datasets and applying algorithmic fairness techniques, which aim to ensure that the algorithm treats all groups of people equally, regardless of their protected characteristics. However, achieving true fairness is a complex challenge, and there's no one-size-fits-all solution.

Organizers can also play a role in promoting fairness. This includes carefully reviewing the scoring criteria to ensure that they are objective and unbiased. It also involves monitoring the results of AI judging and identifying any patterns of bias. Human oversight is crucial – AI should not be used as a black box.
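
Monitoring for patterns of bias can begin with a simple comparison of outcomes across groups. The sketch below computes the share of entries shortlisted in each group and the ratio between the lowest and highest rate, which is one common fairness check often called demographic parity. The group labels and data are hypothetical, and the function names are illustrative. A low ratio is a prompt for human review, not proof of bias, because groups can differ for legitimate reasons.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group_label, was_shortlisted) pairs."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, shortlisted in records:
        totals[group] += 1
        if shortlisted:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest selection rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was shortlisted, so no group was favored
    return min(rates.values()) / highest


# Hypothetical AI screening results: 10 entries per group.
records = (
    [("first_time", True)] * 2 + [("first_time", False)] * 8
    + [("returning", True)] * 4 + [("returning", False)] * 6
)

rates = selection_rates(records)
ratio = disparity_ratio(rates)
print(rates)
print(f"disparity ratio: {ratio:.2f}")  # disparity ratio: 0.50
```

Here returning entrants are shortlisted twice as often as first-time entrants, which would justify a closer look at the scoring criteria and at a sample of the rejected entries.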

Transparency is key. Organizers should understand how the AI algorithm works and what data it’s trained on. This allows them to identify potential biases and take corrective action. It’s also important to be open and honest with contestants about how the judging process works, including the role of AI.
