Software options for 2026

The market for AI-powered judging software is evolving quickly, with new platforms emerging all the time. Several established players offer comprehensive solutions for contest management, while others specialize in specific areas like plagiarism detection or rubric scoring. I'll highlight some of the leading options available as of late 2026.

Evalato positions itself as top-tier online judging software focused on awards programs. It emphasizes features like customizable judging workflows, blind judging capabilities, and detailed reporting. Evalato supports a wide range of contest types, including business awards, design competitions, and academic scholarships. Integration options are somewhat limited, but API access is available for custom integrations.

Judginghub provides a complete suite of tools for professional competitions. Key features include blind judging, weighted scoring criteria, and a collaborative judging interface. Judginghub is particularly well-suited for contests with complex evaluation requirements, such as science fairs and innovation challenges. They promote a streamlined workflow and emphasize ease of use for both judges and organizers.

Judgify.me is more than just judging software; it's an end-to-end award management system. It handles everything from entry submission and abstract review to judging and award distribution. Judgify is a solid choice for organizations that need a comprehensive solution for managing their entire awards program. It's especially strong on the administrative side, offering features like automated reminders and payment processing.

DOMjudge, while originating as a platform for programming contests, is surprisingly versatile. It’s an open-source option, meaning it’s free to use and customize, but requires technical expertise to set up and maintain. It excels at handling large-scale coding competitions, but can also be adapted for other types of contests. It’s a good fit for organizations with in-house development resources.

Other notable platforms include Awardco (focused on employee recognition programs), SmarterEntry (specializing in grant applications), and OpenJudge (another open-source option with a strong community). Each platform has its strengths and weaknesses, and the best choice will depend on the specific needs of the contest.

It’s important to note that many platforms offer tiered pricing plans, based on the number of entries, features used, and level of support. Some platforms also offer custom pricing for large-scale events. When evaluating different options, carefully consider your budget and future growth plans.

I’m hesitant to declare a single “best” platform. Each system is designed with different priorities in mind. The key is to find a platform that aligns with your contest’s specific requirements and offers the features you need at a price you can afford.

How rubrics work with automation

AI judging systems are only as good as the rubrics they use. A well-defined rubric is essential for fair and consistent evaluation, regardless of whether the judging is done by humans or machines. The rubric provides a clear set of criteria for assessing entries, ensuring that all judges are evaluating submissions based on the same standards.

AI excels at enforcing rubric adherence. It can automatically check whether judges have considered all of the criteria and assigned scores accordingly. It can also identify scoring discrepancies, flagging entries where judges have significantly deviated from the average score. This helps to ensure consistency and reduce the risk of bias.
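As a rough illustration of these two checks, here is a minimal Python sketch. The data layout, the function names, and the 2-point threshold are all assumptions made for the example; no specific platform is known to work this way.

```python
from statistics import mean


def missing_criteria(scorecard, rubric_criteria):
    """List the rubric criteria a judge left unscored."""
    return [c for c in rubric_criteria if scorecard.get(c) is None]


def flag_discrepancies(scores, threshold=2.0):
    """Return {entry_id: [judges]} whose score is far from the entry's average.

    scores: {entry_id: {judge_name: score}}
    threshold: hypothetical cutoff, in scale points, for "significant" deviation.
    """
    flagged = {}
    for entry_id, by_judge in scores.items():
        avg = mean(by_judge.values())
        outliers = sorted(j for j, s in by_judge.items() if abs(s - avg) > threshold)
        if outliers:
            flagged[entry_id] = outliers
    return flagged


# Made-up scores on a 1-10 scale.
scores = {
    "entry-1": {"judge_a": 8, "judge_b": 7, "judge_c": 8, "judge_d": 7},
    "entry-2": {"judge_a": 9, "judge_b": 3, "judge_c": 8, "judge_d": 9},
}
print(flag_discrepancies(scores))  # {'entry-2': ['judge_b']}
print(missing_criteria({"originality": 4, "craft": None}, ["originality", "craft", "impact"]))
```

A flag here is a prompt for a second look, not a verdict. With only a few judges per entry, one extreme score also pulls the average toward itself, so a median-based check may be a better fit for small panels.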

Some platforms even offer rubric generation assistance. They can analyze past entries and suggest criteria based on common themes and patterns. This can be particularly helpful for contests that are new or evolving. However, it’s important to carefully review and refine any automatically generated rubric to ensure that it accurately reflects the goals of the contest.

The combination of rubrics and AI isn’t just about automating scoring; it’s about improving the quality of the evaluation process. By providing judges with clear guidelines and automated feedback, AI can help them make more informed and objective decisions.

- Write specific, measurable criteria: Every rubric item should describe something a judge can observe and score.

- Use a consistent scale: Stick to one range, like 1-5 or 1-10, across the whole contest.

- Provide detailed descriptions: Explain what each score level represents.

- Pilot test the rubric: Have a group of judges evaluate a sample of entries to identify any ambiguities or inconsistencies.
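One way to make these guidelines checkable is to store the rubric as data. The sketch below is illustrative only: the `Criterion` class, the 1-5 scale, and the weights are assumptions for the example, not a format used by any particular platform.

```python
from dataclasses import dataclass

SCALE = (1, 5)  # assumption: one 1-5 scale shared by every criterion


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float  # share of the final score; weights should sum to 1.0
    levels: dict   # score -> description of what that score represents


def validate_rubric(rubric):
    """Return a list of human-readable problems; empty means the rubric passes."""
    low, high = SCALE
    problems = []
    total = sum(c.weight for c in rubric)
    if abs(total - 1.0) > 1e-9:
        problems.append(f"weights sum to {total}, expected 1.0")
    for c in rubric:
        missing = [s for s in range(low, high + 1) if s not in c.levels]
        if missing:
            problems.append(f"{c.name}: no description for score(s) {missing}")
    return problems


def weighted_score(rubric, scores):
    """Combine one judge's per-criterion scores into a single weighted score."""
    low, high = SCALE
    for c in rubric:
        if not low <= scores[c.name] <= high:
            raise ValueError(f"{c.name}: {scores[c.name]} is outside {low}-{high}")
    return sum(c.weight * scores[c.name] for c in rubric)


# Hypothetical two-criterion rubric; "craft" is left incomplete on purpose.
descriptions = {1: "absent", 2: "weak", 3: "adequate", 4: "strong", 5: "exceptional"}
rubric = [
    Criterion("originality", 0.75, descriptions),
    Criterion("craft", 0.25, {5: "exceptional"}),
]
print(validate_rubric(rubric))  # ['craft: no description for score(s) [1, 2, 3, 4]']
print(weighted_score(rubric, {"originality": 4, "craft": 2}))  # 3.5
```

Running the validation before the pilot test catches the mechanical gaps, so the pilot judges can spend their time on genuine ambiguities in the wording.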

AI-Powered Judging Software Feature Comparison (2026)

| Software Name | Blind Judging | Plagiarism Check | Rubric Support | Entry Management | Reporting/Analytics and Notes |
|---|---|---|---|---|---|
| Judgify | Yes | No | Yes | Yes | Basic |
| Evalato | Partial | Yes | Partial | Yes | Advanced |
| AwardForce | Yes | Partial | Yes | Yes | Better for larger events |
| Submittable | No | Yes | Partial | Yes | Strong workflow features |
| FilmFreeway | No | No | No | Yes | Primarily film-focused |
| JudgePunk | Yes | Yes | Yes | Yes | Trade-off: steeper learning curve |

Qualitative comparison based on the article research brief. Confirm current product details in the official docs before making implementation choices.

Spotting and fixing bias

Addressing bias in judging is a critical concern. While AI can help identify and mitigate potential biases, it’s not a perfect solution. It’s important to understand both the capabilities and limitations of AI in this area. Blind judging, where identifying information is removed from entries, is a common technique for reducing both conscious and unconscious bias.

Software can handle this step by anonymizing submissions before they are presented to judges. Demographic analysis of scoring patterns can also reveal potential biases. For example, if entries from a particular demographic group consistently receive lower scores, it may indicate a systemic bias.
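The anonymization step can be sketched in a few lines of Python. The field names (`name`, `email`, `organization`, `entry_id`) and the salted-hash ID scheme are assumptions for illustration, not a description of how any listed platform does it.

```python
import hashlib

# Hypothetical field names; a real entry form will have its own.
IDENTIFYING_FIELDS = {"name", "email", "organization"}


def anonymize(entry, salt):
    """Return a copy of the entry without identifying fields, keyed by an opaque ID."""
    digest = hashlib.sha256((salt + entry["entry_id"]).encode("utf-8")).hexdigest()
    blind = {
        key: value
        for key, value in entry.items()
        if key not in IDENTIFYING_FIELDS and key != "entry_id"
    }
    blind["blind_id"] = digest[:12]  # judges see this instead of the real ID
    return blind


entry = {
    "entry_id": "2026-0042",
    "name": "Ada Example",
    "email": "ada@example.org",
    "title": "Untitled No. 3",
    "statement": "A study in light and shadow.",
}
print(anonymize(entry, salt="contest-secret"))
```

This only strips structured fields. A name mentioned inside the statement text, a watermark on an image, or file metadata would still get through, so a manual spot check remains worthwhile.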

Fairness-aware machine learning algorithms are being developed to mitigate bias in AI systems. These algorithms are designed to account for potential biases in the training data and make more equitable predictions. However, it's crucial to remember that AI is trained on data, and if that data reflects existing biases, the AI will likely perpetuate them.

Responsible AI use requires ongoing monitoring and evaluation. Regularly audit the AI system’s performance to identify and address any biases that may emerge. Transparency is also key – be open about how the AI system works and how it is being used to ensure fairness. It’s a continuous process, not a one-time fix.
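A very simple audit might compare each group's average score with the overall average. The sketch below assumes you have group labels collected separately from the blind judging data; the function name, the minimum group size, and the sample numbers are all made up for illustration.

```python
from collections import defaultdict
from statistics import mean


def group_score_gaps(records, min_group_size=2):
    """Return {group: group_mean - overall_mean} for groups with enough entries.

    records: iterable of (group_label, score) pairs.
    min_group_size: hypothetical floor so tiny groups don't produce noisy gaps.
    """
    records = list(records)
    overall = mean(score for _, score in records)
    by_group = defaultdict(list)
    for group, score in records:
        by_group[group].append(score)
    return {
        group: round(mean(group_scores) - overall, 2)
        for group, group_scores in by_group.items()
        if len(group_scores) >= min_group_size
    }


records = [("A", 8), ("A", 7), ("B", 5), ("B", 6), ("C", 9)]
print(group_score_gaps(records))  # {'A': 0.5, 'B': -1.5}
```

A gap like this is a signal to investigate, not proof of bias. Small samples and real differences between entries can produce the same pattern, so a proper statistical test and a human review should follow.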

Managing the contest workflow

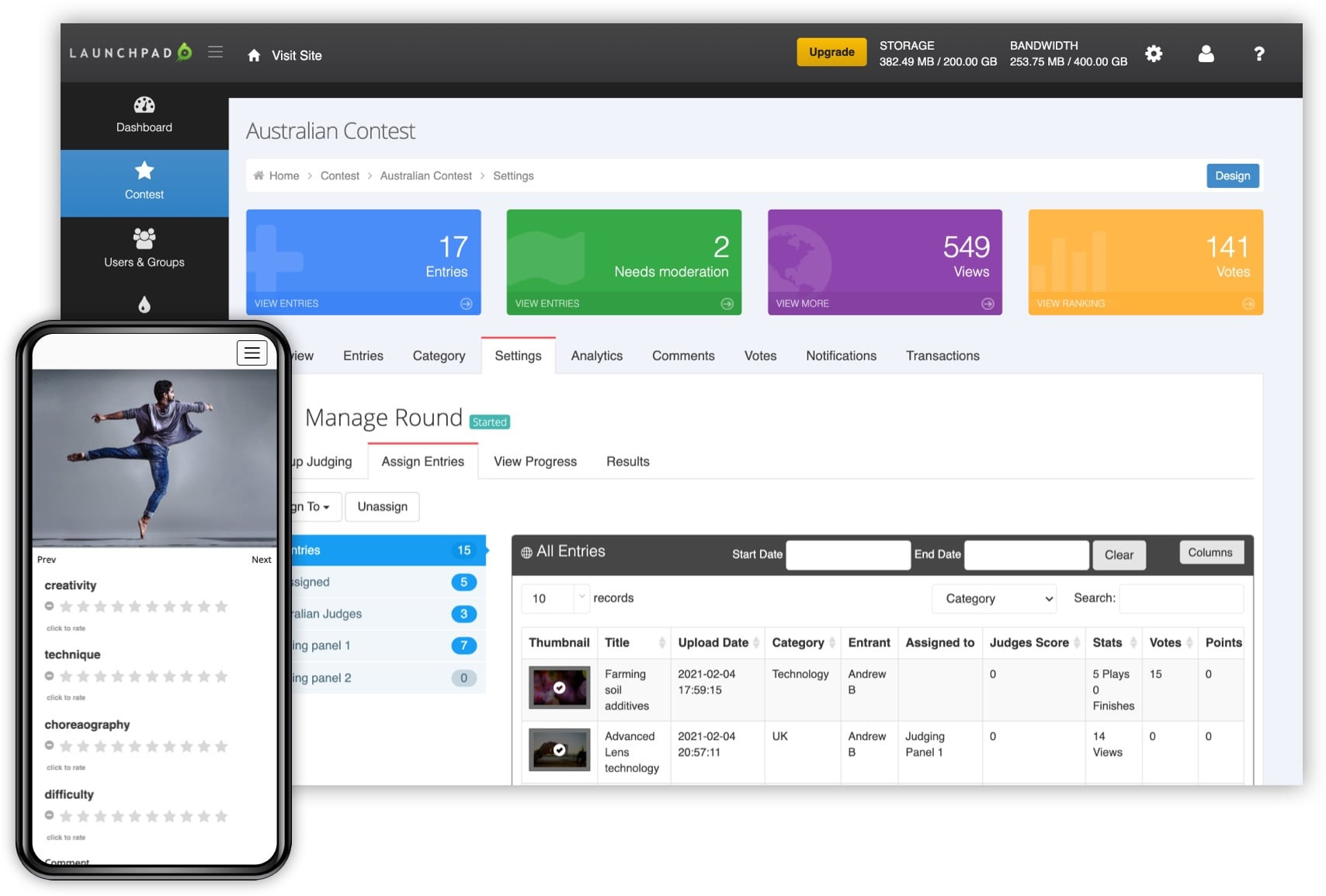

AI judging software offers more than just automated scoring. Most platforms include a range of features designed to streamline the entire contest workflow, from entry submission to award distribution. Entry submission management tools allow organizers to collect and organize entries efficiently. These tools often include features like online forms, file upload capabilities, and automated confirmation emails.

Communication tools facilitate collaboration between judges and organizers. These tools may include messaging systems, discussion forums, and video conferencing capabilities. Automated reminders help keep judges on track and ensure that deadlines are met. Reporting and analytics provide valuable insights into the judging process, such as the average score for each entry and the distribution of scores across different criteria.
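To make the reporting idea concrete, here is a minimal sketch of the two figures mentioned above: the average score per entry and the distribution of scores per criterion. The scorecard layout and the function name are assumptions for the example.

```python
from collections import Counter, defaultdict
from statistics import mean


def judging_report(scorecards):
    """Summarize scorecards shaped like {"entry", "judge", "scores": {criterion: score}}."""
    per_entry = defaultdict(list)
    per_criterion = defaultdict(Counter)
    for card in scorecards:
        # One number per scorecard: the unweighted mean of its criterion scores.
        per_entry[card["entry"]].append(mean(card["scores"].values()))
        for criterion, score in card["scores"].items():
            per_criterion[criterion][score] += 1
    return {
        "entry_averages": {e: round(mean(v), 2) for e, v in per_entry.items()},
        "score_distribution": {
            c: dict(sorted(counts.items())) for c, counts in per_criterion.items()
        },
    }


# Made-up scorecards on a 1-5 scale.
scorecards = [
    {"entry": "E1", "judge": "a", "scores": {"originality": 4, "craft": 5}},
    {"entry": "E1", "judge": "b", "scores": {"originality": 3, "craft": 4}},
    {"entry": "E2", "judge": "a", "scores": {"originality": 2, "craft": 4}},
]
report = judging_report(scorecards)
print(report["entry_averages"])
print(report["score_distribution"])
```

The distribution view is often the more useful of the two: a criterion where nearly every score lands on the same value may be worded too loosely to separate entries.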

Integration with other tools can further streamline the workflow. For example, you might connect your judging software to a contest management platform like Judgify.me, or to your email marketing platform to automatically notify winners. These features can save organizers a significant amount of time and effort, allowing them to focus on other important aspects of the contest.

By automating repetitive tasks and providing a centralized platform for managing all aspects of the contest, AI judging software can help organizers run more efficient and successful events.

The move toward multimodal judging

We’re seeing a growing trend toward multimodal judging – systems that can analyze multiple data types to provide a more comprehensive evaluation. Traditionally, judging has focused on a single modality, such as text (for essays) or images (for photography). However, many contests involve entries that combine multiple modalities, such as videos with accompanying scripts or designs with explanatory presentations.

Multimodal AI can analyze all of these data types simultaneously, providing a more holistic assessment of the entry’s quality. For example, in a video editing competition, the AI could analyze the video’s visual composition, audio quality, and overall storytelling. This is a challenging area, as it requires sophisticated machine learning models that can integrate information from different sources.

Contests where multimodal judging could be particularly useful include video editing competitions, design challenges, music competitions, and multimedia presentations. While the technology is still evolving, it has the potential to significantly improve the accuracy and fairness of judging in these types of events.

Currently, much of this relies on combining separate AI tools – one for image analysis, one for text analysis, and so on – and integrating their results. The future likely holds more integrated multimodal AI platforms.
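The "combine separate tools" approach often comes down to a weighted average of per-modality scores. The sketch below assumes each tool has already produced a score between 0 and 1; the modality names and weights are hypothetical, and no real model is called.

```python
def fuse_modality_scores(scores, weights):
    """Weighted average over the modalities that actually produced a score.

    scores: {modality: score in [0, 1], or None if that tool returned nothing}
    weights: {modality: relative importance}; renormalized over available modalities.
    """
    available = {m: s for m, s in scores.items() if s is not None}
    if not available:
        raise ValueError("no modality produced a score")
    total_weight = sum(weights[m] for m in available)
    return sum(weights[m] * s for m, s in available.items()) / total_weight


# Hypothetical outputs from three separate tools for one video entry.
scores = {"video": 0.8, "audio": 0.6, "script": None}  # script analysis failed
weights = {"video": 0.5, "audio": 0.25, "script": 0.25}
print(round(fuse_modality_scores(scores, weights), 3))  # 0.733
```

Averaging like this treats the modalities as independent, so it cannot tell whether the music actually suits the footage. That gap is the kind of thing a more integrated multimodal model would need to close.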

What's next for automated scoring

The next few years will likely see continued advancements in AI judging technology. More sophisticated machine learning models will enable AI systems to analyze entries with greater nuance and accuracy. Improved bias detection techniques will help to mitigate the risk of unfair judgments. Greater integration with virtual reality (VR) and augmented reality (AR) could create immersive judging experiences.

Imagine judges evaluating architectural designs within a VR environment, or assessing product prototypes using AR overlays. The potential for personalized judging experiences is also exciting. AI could tailor the evaluation criteria to the specific skills and interests of each judge, leading to more relevant and insightful feedback.

I anticipate a shift toward more explainable AI (XAI), where the system can explain the reasoning behind its scoring decisions. This will build trust and transparency, and allow judges to better understand and validate the AI’s assessments. We’ll also likely see increased adoption of federated learning, where AI models are trained on decentralized data sources without compromising privacy.

I don't think we'll see AI replace human judges entirely. Software is still bad at spotting genuine creativity or the 'soul' of a project. We're heading toward a hybrid setup where the machine handles the math and the humans handle the nuance.

Contest types and AI

- Video Production - AI can analyze visual elements like shot composition, color grading, and editing pace, as well as audio quality and synchronization, offering objective feedback alongside human review.

- Graphic Design - Automated assessment of design principles (balance, contrast, hierarchy) and adherence to brand guidelines is possible with AI, aiding in evaluating logo designs, posters, and marketing materials.

- Music Composition - AI tools can evaluate musical elements like harmony, melody, rhythm, and instrumentation, providing scores based on established musical theory or stylistic preferences.

- Photography - AI can assess technical aspects of photos (exposure, focus, white balance) and artistic elements (composition, color, subject matter) to provide a preliminary scoring framework.

- Creative Writing (with visuals) - AI can analyze text for grammar, style, and narrative structure, and, when paired with accompanying images, assess the synergy between the written word and visual representation.

- Product Design - AI can analyze 3D models and renderings for aesthetic appeal, ergonomic considerations, and manufacturability, supporting evaluations of industrial and consumer product designs.

- Combined Arts Contests - Events requiring submissions across multiple disciplines (e.g., a short film with original music and poster design) can benefit from AI’s ability to evaluate each component individually and potentially assess overall coherence.

Choosing the right system: key considerations

Selecting the right AI-powered judging software requires careful consideration. Start by defining your contest’s specific requirements. What type of contest are you running? What is your budget? How many entries do you expect to receive? What level of technical expertise do you have in-house?

Integration requirements are also important. Does the software need to integrate with your existing contest management platform? Data privacy is another critical concern. Ensure that the software complies with all relevant data privacy regulations. Consider the platform’s security measures and data storage policies.

Don't underestimate the importance of user-friendliness. The software should be easy to use for both judges and organizers. Look for platforms with intuitive interfaces and comprehensive documentation. Finally, consider the vendor’s reputation and level of support. Choose a vendor with a proven track record and a responsive customer support team.

A well-informed decision will save you time, money, and headaches in the long run. Take the time to research your options and choose a system that aligns with your contest’s needs and goals.

- Contest Type: What kind of entries will be judged?

- Budget: How much can you spend on software?

- Entry Volume: How many submissions do you anticipate?

- Technical Expertise: Do you have the skills to manage the software?

- Integration: Does it need to work with other systems?

- Data Privacy: Is your data secure?
