The Rise of AI in Competition Judging: Why Now?

Competitions, from science fairs to literary contests, are growing in scale and complexity. This presents significant challenges for organizers, particularly when it comes to judging. The sheer volume of submissions often strains the capacity of human judges, leading to delays and increased costs. More subtly, relying solely on human evaluation introduces inherent risks of subjectivity and bias, potentially undermining the fairness and credibility of the results.

For a long time, these limitations were simply accepted as the cost of doing business. However, recent advancements in artificial intelligence – specifically in areas like large language models (LLMs) and computer vision – are changing the equation. These technologies now offer the potential to automate or significantly assist in the judging process, addressing many of the challenges associated with traditional methods. It’s not about replacing judges, but equipping them with tools to be more efficient and consistent.

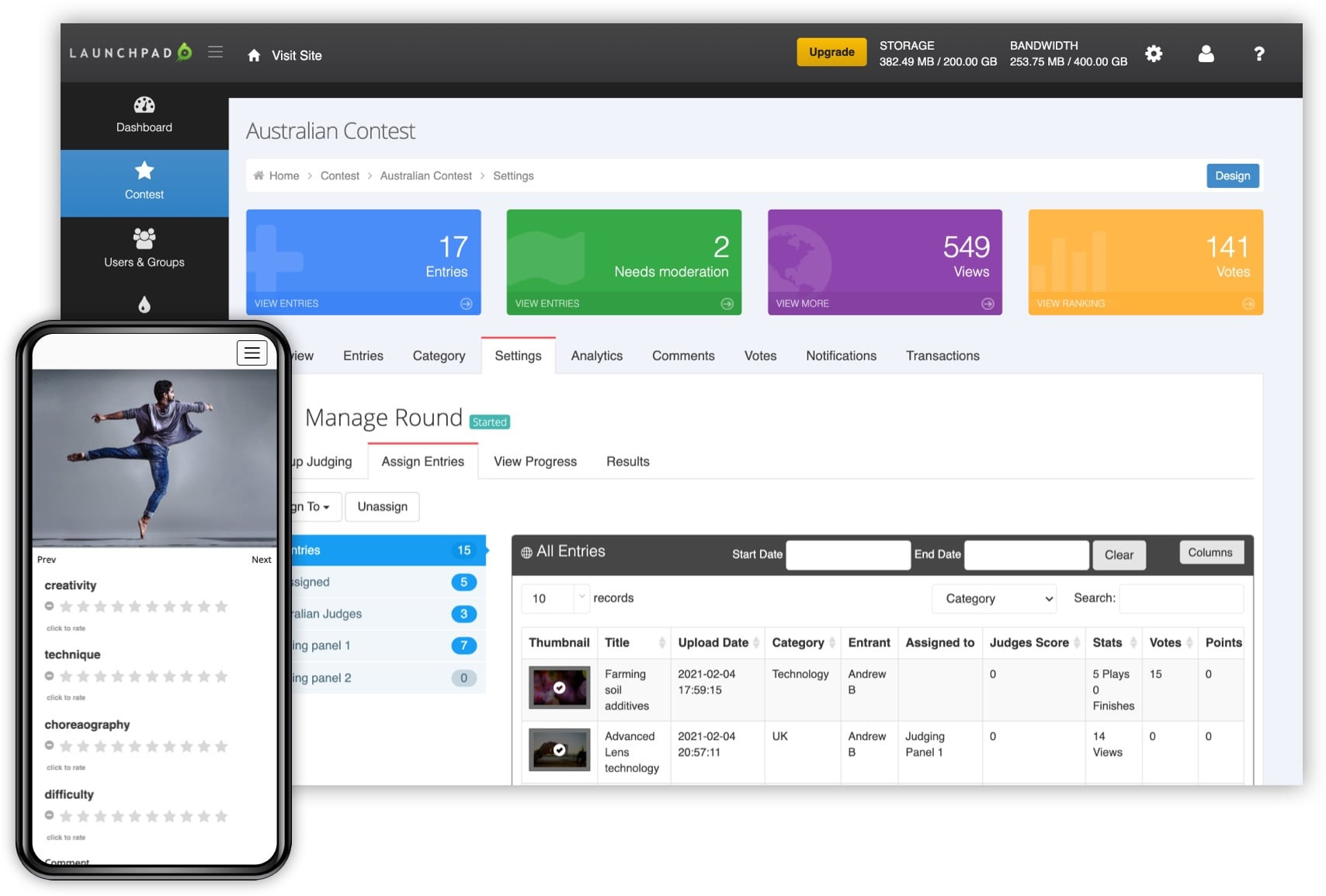

The practical benefits are becoming increasingly clear. AI can quickly screen submissions for basic criteria, identify potential plagiarism, and provide initial scoring based on predefined rubrics. This frees up human judges to focus on more nuanced evaluations, such as assessing creativity, originality, and overall impact. I believe the initial wave of adoption will be in competitions with large numbers of entries and well-defined evaluation criteria, like coding challenges and design contests. RocketJudge, for example, focuses specifically on mobile judging for real-world events, suggesting a need for immediate feedback and scoring.

AI isn't making final decisions here. It is an assistant that provides insights and recommendations to human judges. This hybrid approach combines the strengths of both – the speed and scalability of AI with the critical thinking and contextual understanding of humans. The technology is maturing rapidly, and the cost of implementation is decreasing, making it accessible to a wider range of competition organizers.

Key Features to Look for in AI Judging Platforms

When evaluating AI judging platforms, it's essential to look beyond the marketing hype and focus on features that genuinely improve the quality and efficiency of the judging process. Automated rubric scoring is a foundational element. The system should be able to parse submission content and assign scores based on a predefined rubric, reducing subjectivity and ensuring consistency. This requires the platform to reliably interpret complex scoring guidelines, not just keyword matching.

Plagiarism detection is necessary for text-based competitions, so look for integration with established checkers. Identifying bias, such as gender or cultural stereotypes, is also becoming a priority. Some platforms are beginning to incorporate bias detection algorithms, but this is still an evolving area. Support for various media types is also critical. A platform that can handle text, images, audio, and video will be more versatile and applicable to a wider range of competitions.
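To make the idea of overlap screening concrete, here is a rough Python sketch that compares two text submissions by the word sequences they share. It is an illustration only and not a substitute for an established checker. The function names, the three-word window, and the Jaccard measure are all assumptions chosen for the example.

```python
def shingles(text, n=3):
    """Return the set of n-word sequences in a text (lowercased)."""
    words = text.lower().split()
    if len(words) < n:
        # Very short texts: treat the whole text as a single shingle.
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard_similarity(text_a, text_b, n=3):
    """Share of n-word sequences the two texts have in common (0.0 to 1.0)."""
    set_a, set_b = shingles(text_a, n), shingles(text_b, n)
    if not set_a and not set_b:
        return 0.0
    return len(set_a & set_b) / len(set_a | set_b)


if __name__ == "__main__":
    first = "the judges scored every entry against the published rubric"
    second = "the judges scored every entry using a private checklist"
    print(round(jaccard_similarity(first, second), 2))
```

A high value here would only be a reason to send a pair of entries to a proper checker or a human reviewer, since simple word overlap misses paraphrasing and flags common phrases.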

Integration with existing competition management systems, like Submittable, is essential for a seamless workflow. A platform that can import submissions directly from your existing system and export scores back into it will save significant time and effort. Reporting and analytics features are also important. You should be able to track judging progress, identify potential bottlenecks, and analyze scoring patterns. However, explainability is perhaps the most overlooked, yet most important, feature. You need to understand why the AI assigned a particular score to a submission.

The ability to review the AI’s reasoning – the specific criteria it used and the evidence it considered – is crucial for building trust and ensuring fairness. A "black box" AI system is unlikely to be accepted by judges or participants. Finally, consider the platform’s scalability and security. It needs to be able to handle a large volume of submissions without performance issues and protect sensitive data from unauthorized access.
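One way to picture an explainable score is as a record that keeps each criterion together with the evidence behind it. The sketch below is a hypothetical Python data structure, not any platform's actual format; the class name, fields, and output layout are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CriterionExplanation:
    """Hypothetical record of one rubric criterion and why it got its score."""
    criterion: str
    points: float
    max_points: float
    evidence: List[str] = field(default_factory=list)


def format_explanation(items):
    """Render the explanations as plain text a judge can scan quickly."""
    lines = []
    for item in items:
        lines.append(f"{item.criterion}: {item.points:g}/{item.max_points:g}")
        if not item.evidence:
            # A score without supporting evidence is exactly the "black box" case.
            lines.append("  evidence: none recorded (needs human review)")
        for quote in item.evidence:
            lines.append(f'  evidence: "{quote}"')
    return "\n".join(lines)


if __name__ == "__main__":
    report = [
        CriterionExplanation("clarity", 8, 10, ["Step 2 defines every variable."]),
        CriterionExplanation("originality", 12, 20),
    ]
    print(format_explanation(report))
```

Marking criteria that have no recorded evidence gives judges an obvious place to start their review.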

- Automated rubric scoring

- Plagiarism detection

- Bias detection

- Support for multiple media types

- Integration with competition management systems

- Reporting and analytics

- Explainability of AI scoring

Platform Deep Dive: Evalato vs. Submittable and Emerging Players

Currently, Evalato and Submittable are two of the more prominent platforms addressing competition management, and increasingly, AI-assisted judging. Evalato positions itself specifically as online judging software for awards, offering tools for managing submissions, judging, and reporting. Their platform emphasizes workflow automation and collaboration features. However, details on specific AI-powered judging features beyond basic automation are somewhat limited in publicly available information as of late 2026.

Submittable, while initially focused on grants and application management, has expanded its capabilities to include competition judging. They offer a robust platform for collecting and reviewing submissions, but their AI features appear to be less focused on automated scoring and more on streamlining the workflow for human judges. Submittable’s strength lies in its ability to handle complex application processes and provide a centralized hub for all competition-related activities. They also emphasize features for promoting diversity and inclusion in the judging process.

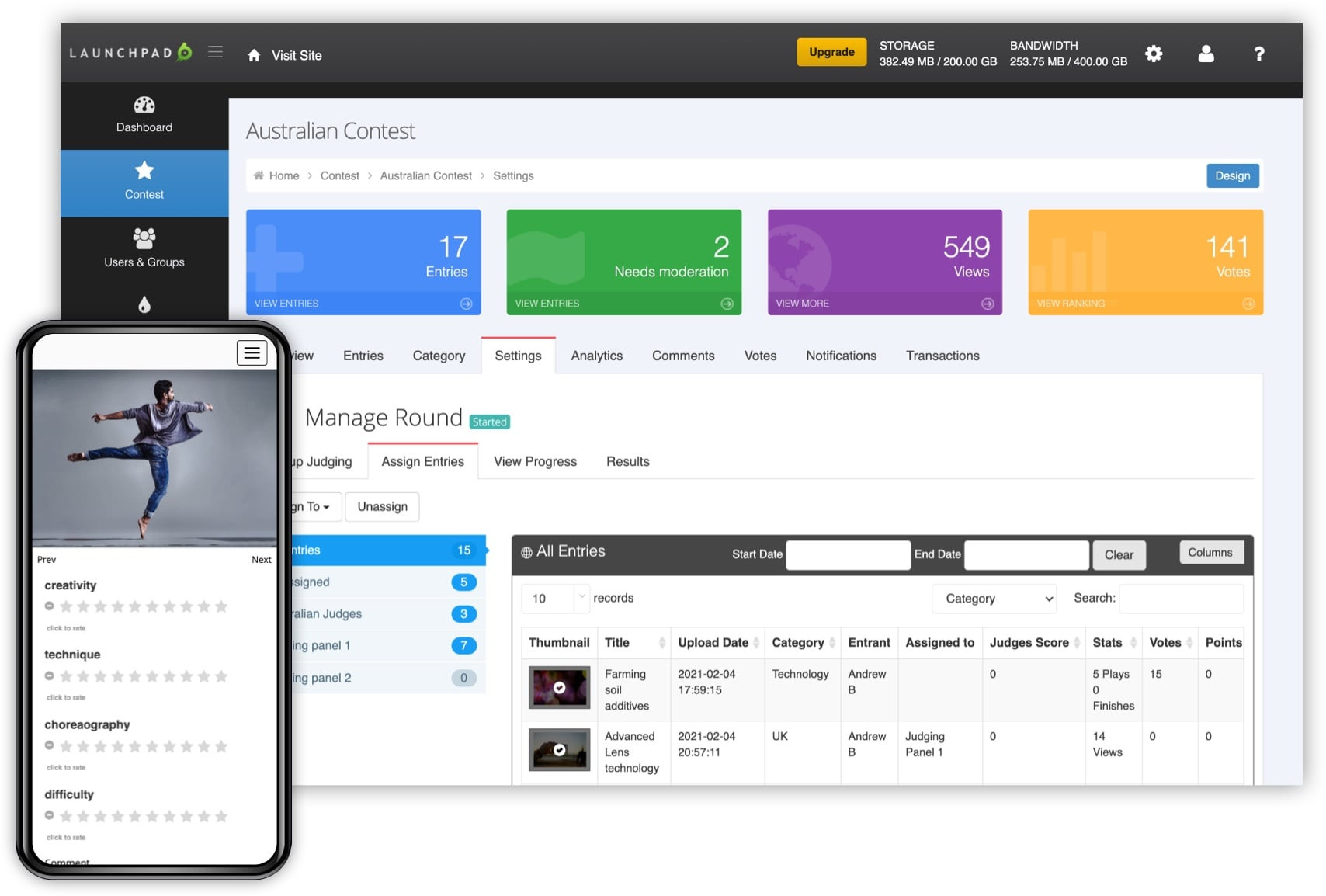

RocketJudge takes a different approach, focusing on mobile judging for events in the real world. This is particularly relevant for competitions that involve live performances or demonstrations. Their platform allows judges to score submissions directly from their smartphones or tablets, providing immediate feedback and reducing the need for paper-based scorecards. They emphasize flexibility and customization to fit the specific needs of each event.

Several emerging players are also entering the market, often focusing on niche areas. I've observed some platforms specializing in AI-powered code review for programming competitions, and others focusing on automated analysis of visual submissions for art and design contests. These specialized platforms often offer more sophisticated AI features tailored to their specific domain. However, it’s important to carefully evaluate their scalability and long-term viability before committing to a particular platform. The market is rapidly evolving, and new players are constantly emerging.

Scoring Methodologies: How AI Approaches Evaluation

AI judging isn’t a monolithic approach; it encompasses a range of techniques. Rubric-based scoring is the most common, where the AI is trained to evaluate submissions based on a predefined set of criteria and associated point values. This relies on the AI’s ability to accurately interpret the rubric and map submission content to the relevant criteria. Natural Language Processing (NLP) is crucial for text-based competitions, allowing the AI to analyze the content for sentiment, style, clarity, and other relevant factors.
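As a minimal sketch of rubric-based scoring, the Python below combines per-criterion ratings into a point total. It assumes the AI component has already produced a rating between 0.0 and 1.0 for each criterion; how that rating is produced is outside the sketch. The criterion names and point values are made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One rubric line: a name and the maximum points it can award."""
    name: str
    max_points: float


def rubric_score(rubric, ratings):
    """Turn per-criterion ratings (0.0 to 1.0) into a total and a breakdown."""
    total = 0.0
    breakdown = {}
    for criterion in rubric:
        if criterion.name not in ratings:
            raise KeyError(f"missing rating for {criterion.name!r}")
        rating = ratings[criterion.name]
        if not 0.0 <= rating <= 1.0:
            raise ValueError(f"rating for {criterion.name!r} must be in [0, 1]")
        points = rating * criterion.max_points
        breakdown[criterion.name] = points
        total += points
    return total, breakdown


# Hypothetical rubric worth 50 points in total.
RUBRIC = [
    Criterion("clarity", 10),
    Criterion("originality", 20),
    Criterion("technical merit", 20),
]

if __name__ == "__main__":
    ratings = {"clarity": 0.5, "originality": 1.0, "technical merit": 0.25}
    total, breakdown = rubric_score(RUBRIC, ratings)
    print(total, breakdown)
```

Returning the breakdown alongside the total keeps each criterion's contribution visible to the human judge who reviews the score.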

Computer vision is used extensively for image and video analysis. The AI can identify objects, patterns, and aesthetic qualities within visual submissions, assigning scores based on predefined criteria. For example, in a photography contest, the AI might assess composition, lighting, and subject matter. Machine learning models, trained on past judging data, can also be used to predict scores based on historical patterns. However, this approach requires a large and representative dataset of labeled submissions.

Each method has its pros and cons. Rubric-based scoring is relatively straightforward to implement but can be limited by the specificity of the rubric. NLP is powerful for analyzing text but can struggle with nuance and context. Computer vision is effective for visual assessments but can be susceptible to biases in the training data. Machine learning models can be highly accurate but require significant data and expertise to develop and maintain.

A key challenge is ensuring fairness and preventing gaming of the system. Participants may attempt to manipulate their submissions to exploit weaknesses in the AI’s scoring algorithms. Therefore, it’s crucial to use a combination of techniques and to continuously monitor and refine the AI’s performance. Regular audits and human oversight are essential for maintaining the integrity of the judging process.
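A simple form of audit is to compare AI scores with human scores on the same submissions and route large disagreements to a second look. The Python sketch below assumes both sets of scores use the same scale and are keyed by a submission ID; the function name and the default tolerance of 10 points are arbitrary choices for illustration.

```python
def flag_for_review(ai_scores, human_scores, tolerance=10.0):
    """Return IDs where the AI and human scores differ by more than `tolerance`.

    Submissions that have no human score yet are skipped, not flagged.
    """
    flagged = []
    for submission_id, ai_score in ai_scores.items():
        human_score = human_scores.get(submission_id)
        if human_score is None:
            continue
        if abs(ai_score - human_score) > tolerance:
            flagged.append(submission_id)
    return sorted(flagged)


if __name__ == "__main__":
    ai = {"entry-1": 80, "entry-2": 55, "entry-3": 90}
    human = {"entry-1": 78, "entry-2": 75}
    print(flag_for_review(ai, human))
```

A sudden cluster of flags around one criterion or one style of submission can also be an early sign that participants have found a way to game the scoring.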

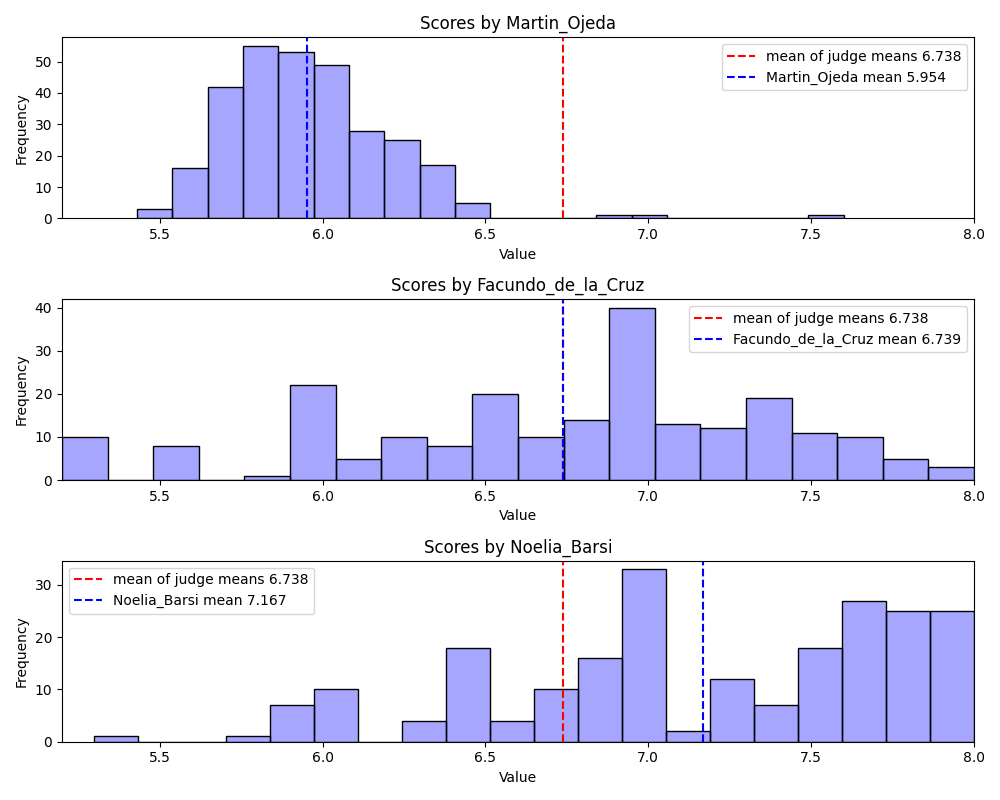

Bias Mitigation and Ensuring Fairness in AI Judging

AI systems are only as unbiased as the data they’re trained on. If the training data reflects existing societal biases, the AI will likely perpetuate those biases in its scoring. This is a significant concern in competition judging, where fairness and impartiality are paramount. Potential sources of bias include historical data that favors certain demographics, algorithm design that inadvertently disadvantages certain groups, and data representation that underrepresents certain perspectives.

Platforms are employing several techniques to mitigate these biases. Data augmentation involves creating synthetic data to balance the training dataset. Adversarial training pits two AI models against each other: one that predicts scores, and an adversary that tries to infer a protected attribute (such as gender) from those predictions, with the scorer penalized whenever the adversary succeeds. Fairness-aware algorithms are designed to explicitly optimize for fairness metrics, such as equal opportunity and demographic parity. However, these techniques are not foolproof, and bias can still creep into the system.
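The two fairness metrics named above can be checked with very little code. In this Python sketch, demographic parity compares how often each group advances to the next round, and equal opportunity makes the same comparison only among entries that a reference decision (for example, a human panel) also advanced. The record layout, group labels, and function names are assumptions for illustration.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, advanced) pairs. Returns rate per group."""
    counts = defaultdict(lambda: [0, 0])  # group -> [advanced, total]
    for group, advanced in records:
        counts[group][0] += int(advanced)
        counts[group][1] += 1
    return {group: adv / total for group, (adv, total) in counts.items()}


def demographic_parity_gap(records):
    """Largest difference in advancement rate between any two groups."""
    rates = selection_rates(records)
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())


def equal_opportunity_gap(records):
    """records: (group, advanced_by_ai, advanced_by_reference) triples.

    Only entries the reference decision advanced are compared, so this is
    the gap in true positive rate between groups.
    """
    qualified = [(group, by_ai) for group, by_ai, by_ref in records if by_ref]
    return demographic_parity_gap(qualified)


if __name__ == "__main__":
    outcomes = [("A", True), ("A", True), ("A", False), ("A", False),
                ("B", True), ("B", False), ("B", False), ("B", False)]
    print(demographic_parity_gap(outcomes))
```

A gap of zero on one metric does not imply a gap of zero on the other, which is why the choice of metric has to be made deliberately.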

Human oversight is critical. Judges should review the AI’s scores and provide feedback, particularly in cases where the AI’s reasoning is unclear or questionable. A clear appeals process is also essential, allowing participants to challenge scores they believe are unfair. Transparency is key – participants should understand how the AI is being used and what steps are being taken to ensure fairness.

It’s also important to recognize that fairness is a complex and multifaceted concept. Different fairness metrics may conflict with each other, and there is no one-size-fits-all solution. Organizations need to carefully consider their values and priorities when designing and implementing AI judging systems. Ongoing monitoring and evaluation are essential for identifying and addressing potential biases over time.

Integration and Workflow: Making AI Judging Work for You

Implementing an AI judging platform requires careful consideration of integration and workflow. The ideal scenario is a seamless integration with your existing competition management system, such as Submittable. This allows for automated data transfer, eliminating the need for manual data entry and reducing the risk of errors. APIs (Application Programming Interfaces) and webhooks are common integration methods, allowing different systems to communicate with each other.
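For readers unfamiliar with webhooks, the Python sketch below shows the general shape of receiving one: check that the request really came from the sending system, then pull out the fields needed for judging. Everything specific here is hypothetical, including the event name `submission.created`, the JSON field names, and the use of an HMAC-SHA256 signature; a real platform's documentation defines its own payloads and verification scheme.

```python
import hashlib
import hmac
import json


def verify_signature(secret, body, signature_hex):
    """Check an HMAC-SHA256 signature over the raw request body."""
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, signature_hex)


def parse_submission_event(secret, body, signature_hex):
    """Return the fields needed for judging, or None for unrelated events."""
    if not verify_signature(secret, body, signature_hex):
        raise PermissionError("webhook signature mismatch")
    event = json.loads(body)
    if event.get("type") != "submission.created":  # hypothetical event name
        return None
    return {
        "submission_id": event["submission_id"],   # hypothetical field
        "title": event.get("title", ""),           # hypothetical field
    }


if __name__ == "__main__":
    secret = b"demo-secret"
    body = json.dumps({"type": "submission.created",
                       "submission_id": "s-1", "title": "Entry"}).encode()
    signature = hmac.new(secret, body, hashlib.sha256).hexdigest()
    print(parse_submission_event(secret, body, signature))
```

The parsed record can then be queued for AI scoring, with scores sent back through whatever export or API route the competition management system provides.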

However, even with a well-integrated system, some manual configuration is typically required. You’ll need to define the judging criteria, configure the AI’s scoring algorithms, and train the system on relevant data. This may require some technical expertise, or you may need to work with the platform vendor to get assistance. The impact on judges’ roles will also need to be considered. AI assistance will likely free up judges to focus on more complex evaluations, but they may also need to learn how to interpret the AI’s output and provide feedback.

Training for judges is essential. They need to understand how the AI works, what its limitations are, and how to effectively use its insights. It’s also important to emphasize that the AI is a tool, not a replacement for human judgment. Judges should always exercise their own critical thinking and contextual understanding. The implementation effort can vary significantly depending on the complexity of the competition and the chosen platform.

Smaller competitions may be able to implement a basic AI judging system with minimal effort, while larger and more complex competitions may require a more extensive and customized solution. A phased approach, starting with a pilot program, can be a good way to test the waters and identify potential challenges before rolling out the system to a wider audience.

Future Trends: What’s Next for AI in Competitions?

The field of AI is evolving rapidly, and we can expect to see even more sophisticated applications in competition judging in the coming years. The use of generative AI for providing personalized feedback to participants is a particularly promising trend. Imagine an AI system that can analyze a submission and provide detailed suggestions for improvement, tailored to the participant’s skill level and goals.

More sophisticated bias detection techniques are also on the horizon. Researchers are developing algorithms that can identify and mitigate subtle forms of bias that are difficult for humans to detect. The potential for AI to personalize the judging experience is another exciting area of development. AI could adapt the judging criteria and scoring algorithms based on the individual participant’s background and experience.

However, ethical considerations will become increasingly important. As AI becomes more powerful, we need to ensure that it is used responsibly and ethically. This includes addressing concerns about fairness, transparency, and accountability. Ongoing research and development are essential for pushing the boundaries of what’s possible and for addressing the challenges that arise.

I anticipate a shift towards more hybrid models, where AI and human judges work together seamlessly. The AI will handle the routine tasks, freeing up human judges to focus on the creative and nuanced aspects of evaluation. Ultimately, the goal is to create a judging process that is fair, efficient, and insightful, benefiting both participants and organizers.
