How AI is changing competition judging

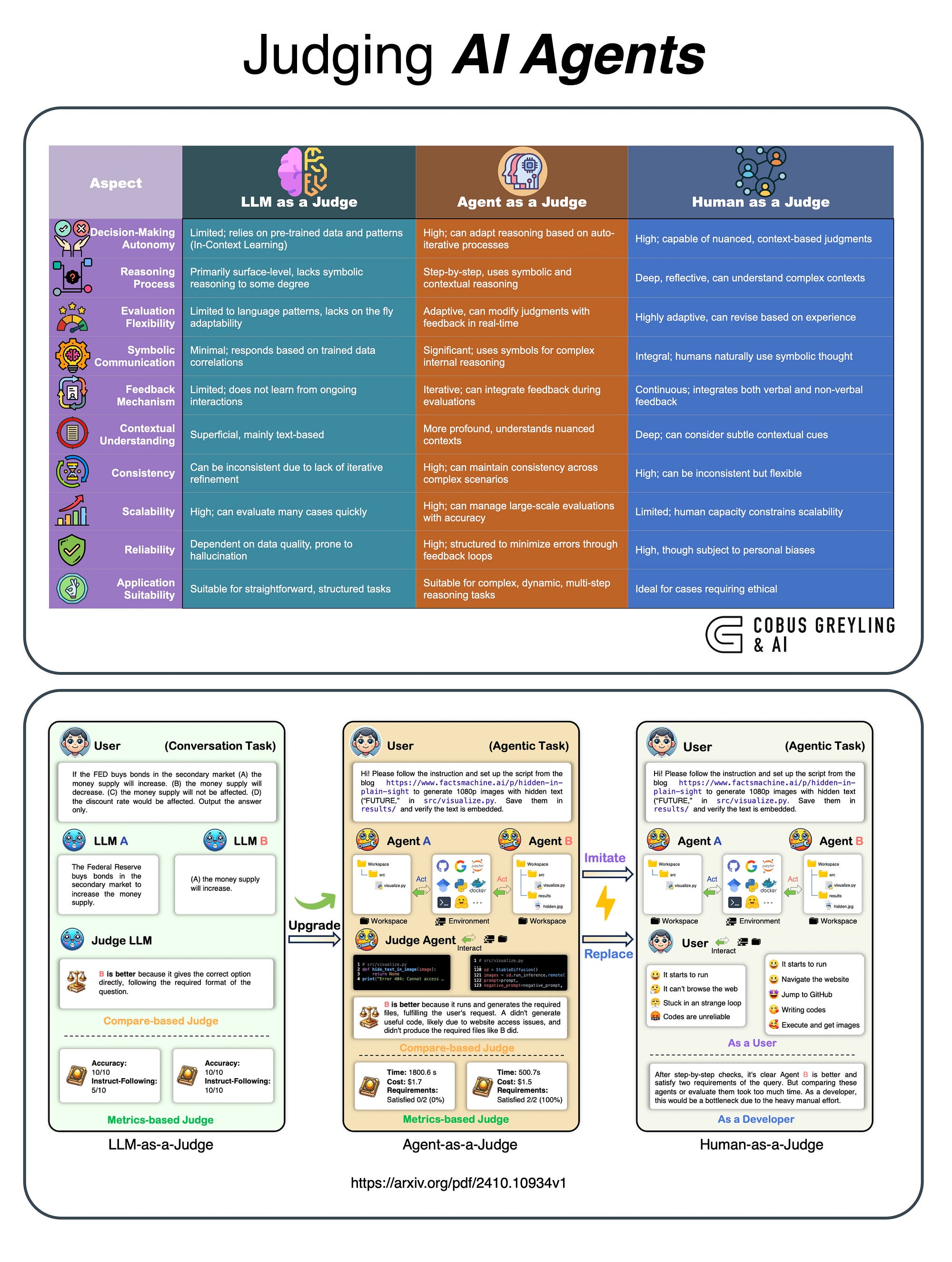

Competition judging has relied on human evaluation for centuries. That approach is valuable, but it has drawbacks: it is susceptible to subjective bias, difficult to scale for large events, and often expensive. The increasing complexity of submissions, particularly in fields like coding and data science, further strains the capacity of human judges.

AI doesn't replace judges; it handles the grunt work. AI judging platforms can process thousands of entries in minutes, flagging patterns or errors that a tired human eye might skip after eight hours of reviewing, and they keep the scoring consistent from the first entry to the last.

We’re already seeing AI affect a diverse range of competitions. Art contests use AI for stylistic analysis and originality checks. Writing competitions use natural language processing to assess grammar, clarity, and creativity. Coding challenges benefit from automated testing and code quality assessment. Even scientific competitions are using AI to analyze data and identify innovative research. The applications are expanding rapidly.

The core promise of AI in judging is objectivity, scalability, and cost-effectiveness. However, realizing this promise requires careful consideration of the technologies involved and a commitment to mitigating potential biases. It’s a developing field, and organizers need to be informed about the available tools and best practices. The goal isn’t to eliminate human judgment, but to elevate it.

Seven platforms to watch in 2026

The AI judging platform market is evolving quickly. Several platforms are vying to become the standard for competition organizers. Here's a breakdown of seven leading contenders as of 2026, evaluating their features, strengths, and weaknesses. Note that the competitive landscape is dynamic, and specific features may change.

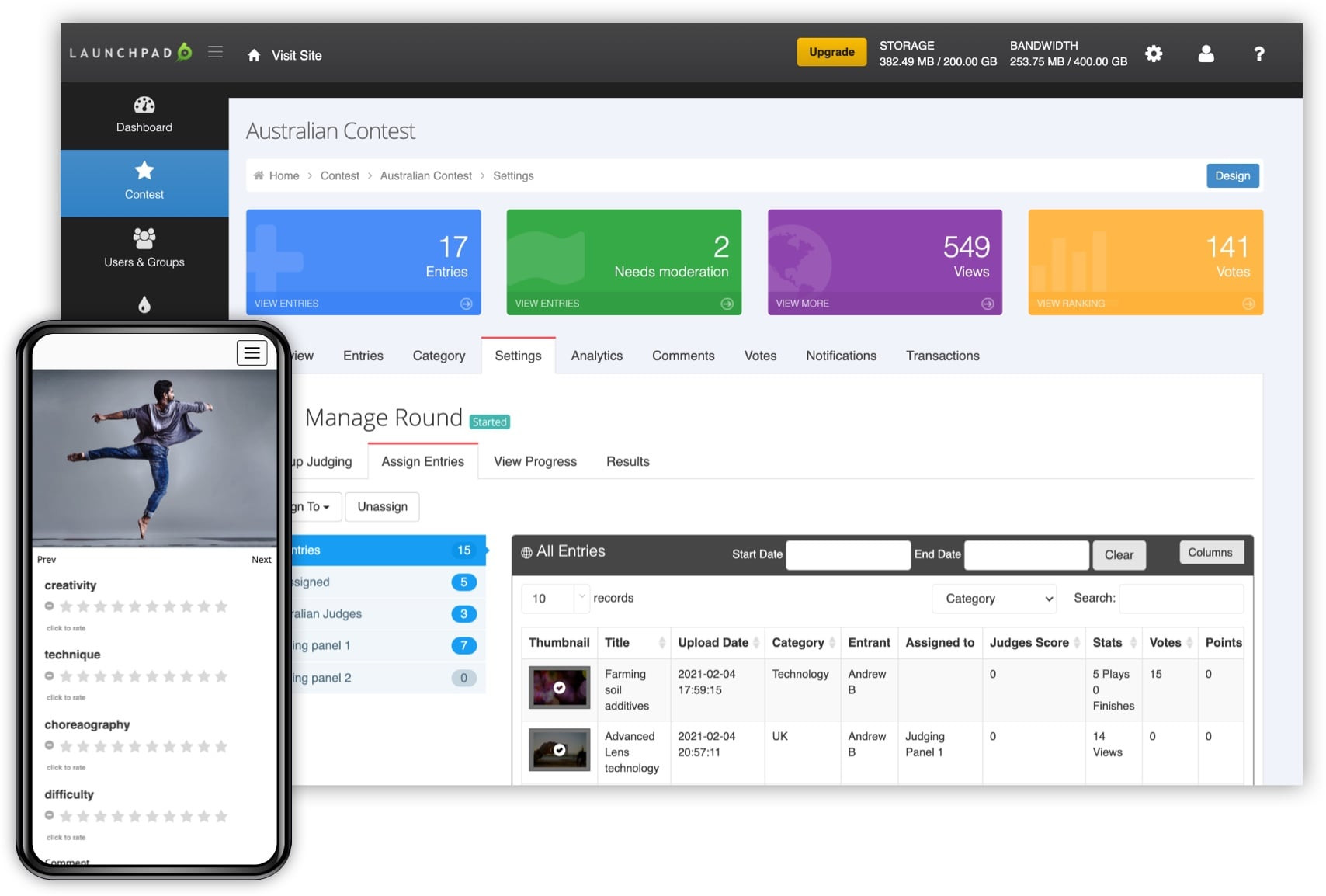

Judgify positions itself as an all-in-one contest management system. Beyond judging, it handles submission management, branding, promotion, and reporting. It supports a wide range of competition types and offers robust security features. A strength is its comprehensive nature, but it can be complex to set up and may be overkill for smaller events. Pricing is tiered, based on the number of submissions and features used.

Evalato focuses specifically on awards programs. It offers advanced scoring and reporting, plagiarism detection, and features designed to streamline the judging workflow. The platform is known for its user-friendly interface and strong support for complex judging criteria. However, it lacks the broader event management capabilities of Judgify and may require integration with other tools.

Awardly is a newer entrant that’s gaining traction, particularly among smaller organizations. It emphasizes ease of use and affordability. Its core features include online submission collection, scoring rubrics, and basic reporting. Awardly's simplicity is its strength, but it lacks the advanced features of more established platforms. It's a good option for straightforward competitions with limited budgets.

ScoreVision differentiates itself with a strong focus on visual and performing arts competitions. It offers specialized tools for evaluating artwork, music performances, and dance routines. Its computer vision capabilities allow for automated analysis of visual submissions. However, it’s less versatile for competitions outside the arts and entertainment sector.

Cvent is a well-established event management platform that has expanded into AI-powered judging. Its strength lies in its integration with other Cvent event tools, making it a good choice for organizations already using their platform. However, its judging features may not be as specialized or advanced as those offered by dedicated judging platforms. Pricing can be substantial, making it best suited for large-scale events.

RocketJudge concentrates on mobile judging for real-world events. This platform allows judges to score submissions directly from their smartphones or tablets, streamlining the process for live competitions. It’s particularly well-suited for events like hackathons, pitch competitions, and trade show judging. Its reliance on mobile devices can be a limitation in areas with poor connectivity.

The seventh entry is a category rather than a single product: smaller, niche platforms that specialize in specific competition types (e.g., coding challenges, scientific research). These platforms often offer innovative features but may lack the maturity and support of the larger players, so research them thoroughly before committing. Ultimately, the best platform depends heavily on the specific needs of the competition.

How these platforms actually score entries

The effectiveness of an AI judging platform hinges on its scoring methods, which vary significantly with the type of submission being evaluated. At a high level, they fall into three main approaches: natural language processing (NLP) for text, computer vision for images and video, and machine learning models trained on past entries.

Natural Language Processing (NLP) is used for text-based submissions – essays, reports, creative writing, etc. AI algorithms analyze the text for grammar, spelling, clarity, style, and even sentiment. Platforms like Judgify and Evalato utilize NLP to provide objective scores based on predefined criteria. The quality of NLP scoring depends heavily on the quality of the training data and the sophistication of the algorithms.

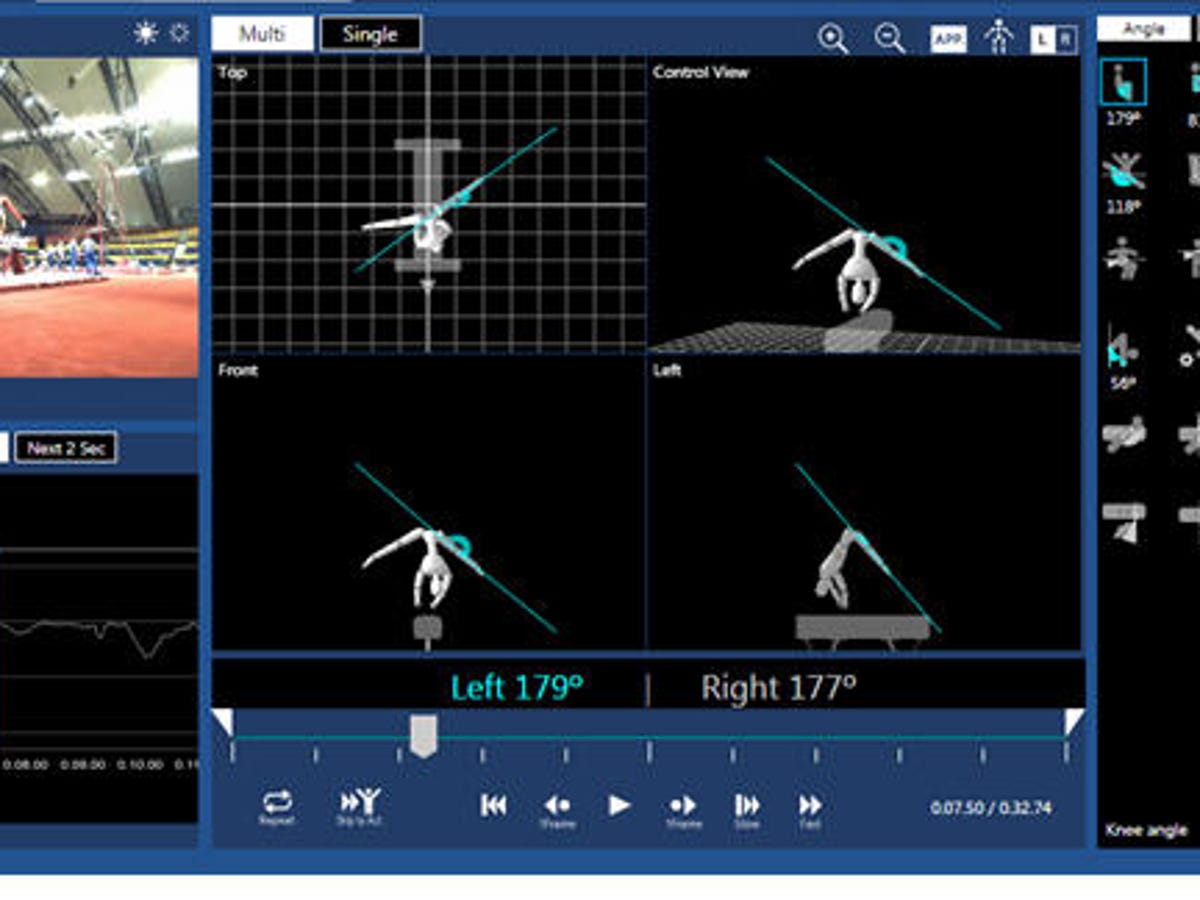

Computer Vision is employed for image and video submissions. AI algorithms analyze visual content for composition, color, lighting, and other aesthetic qualities. This is commonly used in art competitions and photography contests. ScoreVision excels in this area, utilizing advanced computer vision techniques to assess visual submissions. It can also detect potential plagiarism by identifying similar images or videos.

Machine Learning (ML) offers a more flexible approach. Platforms can train ML models on past winning entries to identify patterns and characteristics associated with success. These models can then be used to score new submissions. This requires a significant amount of high-quality training data, but it can lead to more accurate and nuanced evaluations. The challenge is ensuring the training data isn't biased.
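
To make the idea of learning from past winners concrete, here is a deliberately simple sketch, not any platform's actual model. It assumes each entry has already been reduced to a numeric feature vector (the three features and all values below are hypothetical), averages the past winners into a single profile, and scores new entries by cosine similarity to that profile.

```python
import math


def centroid(vectors: list[list[float]]) -> list[float]:
    """Component-wise mean of equal-length feature vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical features per entry: [clarity, originality, technical depth].
past_winners = [
    [0.9, 0.8, 0.7],
    [0.8, 0.9, 0.6],
]

# "Training" here is just averaging the past winners into one profile.
winner_profile = centroid(past_winners)

# New entries are scored by how closely they resemble that profile.
similar_entry = [0.85, 0.85, 0.65]
different_entry = [0.1, 0.2, 0.9]

similar_score = cosine(similar_entry, winner_profile)
different_score = cosine(different_entry, winner_profile)
```

The sketch also shows where the bias risk comes from: an entry scores well only to the extent that it resembles what won before, so any skew in the past winners carries straight into the new scores.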

Regardless of the method, rubric design is paramount. AI platforms often allow organizers to customize scoring rubrics, defining the specific criteria and weighting them accordingly. The AI then enforces these rubrics consistently across all submissions. This helps to minimize subjectivity and ensure fairness. A well-designed rubric is more important than the sophistication of the AI algorithm.
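
As a rough illustration of criteria and weighting, the sketch below computes a weighted rubric score on a 0 to 100 scale. The `Criterion` class, the criterion names, and the weights are assumptions made up for this example; real platforms expose rubrics through their own interfaces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float      # relative importance; weights need not sum to 1
    max_points: float  # top of the scale for this criterion


def weighted_score(rubric: list[Criterion], points: dict[str, float]) -> float:
    """Return a 0-100 score: the weighted average of the fraction earned per criterion."""
    total_weight = sum(c.weight for c in rubric)
    if total_weight <= 0:
        raise ValueError("rubric weights must sum to a positive number")
    earned = 0.0
    for c in rubric:
        raw = points[c.name]  # raises KeyError if a criterion was not scored
        if not 0 <= raw <= c.max_points:
            raise ValueError(f"{c.name}: {raw} is outside 0..{c.max_points}")
        earned += c.weight * (raw / c.max_points)
    return round(100 * earned / total_weight, 2)


# Hypothetical rubric for a writing contest.
RUBRIC = [
    Criterion("clarity", weight=3, max_points=10),
    Criterion("originality", weight=5, max_points=10),
    Criterion("grammar", weight=2, max_points=5),
]

entry_points = {"clarity": 8, "originality": 6, "grammar": 5}
entry_score = weighted_score(RUBRIC, entry_points)  # 74.0
```

Because the same function and the same weights are applied to every entry, the consistency comes from the rubric itself, whichever model or judge produces the per-criterion points.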

Bias detection and mitigation

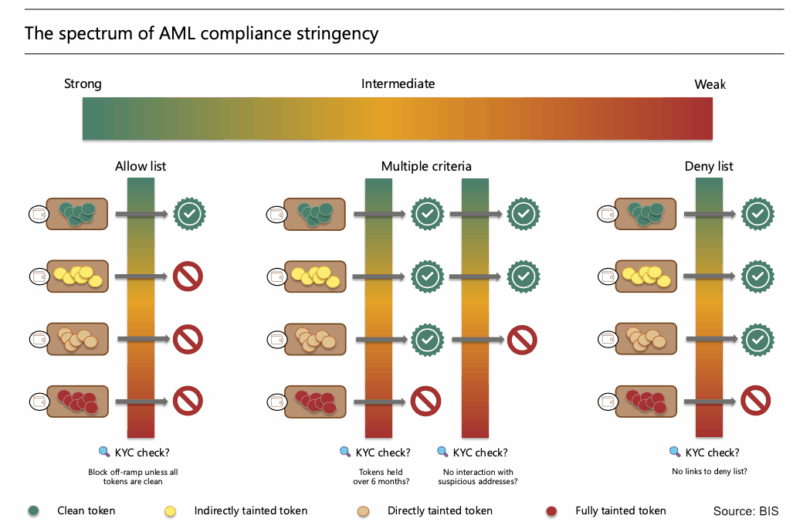

While AI aims to reduce bias in judging, it’s crucial to recognize that AI itself can introduce bias. This can occur if the training data used to develop the AI algorithms is biased, reflecting existing societal prejudices or historical inequalities. For example, an NLP model trained primarily on texts written by men might unfairly penalize submissions written by women.

Leading AI judging platforms are implementing techniques to mitigate bias. Data diversity is a key strategy – ensuring that training data includes a representative sample of submissions from all demographics and backgrounds. Algorithmic fairness checks are also used to identify and correct biases in the AI algorithms themselves. These checks involve analyzing scoring patterns to ensure that different groups are being evaluated fairly.
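
One simple form such a check could take is comparing average scores across groups and flagging large gaps for human review. The sketch below is an assumption about how that might look, not a description of any platform's method; the group labels, scores, and the 5-point threshold are all hypothetical.

```python
from collections import defaultdict
from statistics import mean


def group_means(scored: list[tuple[str, float]]) -> dict[str, float]:
    """Average score per group from (group_label, score) pairs."""
    by_group: dict[str, list[float]] = defaultdict(list)
    for group, score in scored:
        by_group[group].append(score)
    return {group: mean(scores) for group, scores in by_group.items()}


def max_gap(means: dict[str, float]) -> float:
    """Difference between the highest and lowest group average."""
    return max(means.values()) - min(means.values())


def needs_review(scored: list[tuple[str, float]], threshold: float = 5.0) -> bool:
    """True when group averages differ by more than `threshold` points."""
    return max_gap(group_means(scored)) > threshold


# Hypothetical AI scores labeled with an anonymized group.
sample = [("A", 80.0), ("A", 84.0), ("B", 70.0), ("B", 74.0)]

means = group_means(sample)     # {"A": 82.0, "B": 72.0}
flagged = needs_review(sample)  # True: the 10-point gap exceeds the threshold
```

A gap on its own does not prove bias, since the groups may differ for legitimate reasons. It is a trigger for a human to look more closely.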

Explainable AI (XAI) is another important development. XAI aims to make the AI’s decision-making process more transparent. Instead of simply providing a score, the AI can explain why it assigned that score, highlighting the specific criteria that were considered. This allows judges to review the AI’s assessment and identify potential biases. However, XAI is still a developing field, and not all platforms offer this feature.

Ultimately, human oversight remains essential. AI should be viewed as a tool to augment human judgment, not replace it entirely. Judges should review the AI’s scores and explanations, and have the ability to override them if necessary. A hybrid approach – combining the efficiency of AI with the critical thinking of human judges – is the most effective way to ensure fairness and accuracy.

- Use diverse training data to avoid narrow results.

- Implement algorithmic fairness checks.

- Prioritize explainable AI features.

- Maintain human oversight.
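
The hybrid approach can be sketched as a small data model in which a judge's score, when present, overrides the AI's, and large disagreements are surfaced for a second look. The `Review` class, field names, and the 10-point tolerance are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Review:
    entry_id: str
    ai_score: float
    human_score: Optional[float] = None  # None until a judge has scored the entry

    @property
    def final_score(self) -> float:
        # A judge's score, when present, always overrides the AI's score.
        return self.ai_score if self.human_score is None else self.human_score


def flag_disagreements(reviews: list[Review], tolerance: float = 10.0) -> list[str]:
    """IDs of entries where the judge and the AI differ by more than `tolerance`."""
    return [
        r.entry_id
        for r in reviews
        if r.human_score is not None
        and abs(r.human_score - r.ai_score) > tolerance
    ]


# Hypothetical reviews: e1 is not yet judged, e2 roughly agrees, e3 diverges.
reviews = [
    Review("e1", ai_score=72.0),
    Review("e2", ai_score=65.0, human_score=70.0),
    Review("e3", ai_score=90.0, human_score=60.0),
]

final_scores = {r.entry_id: r.final_score for r in reviews}
to_recheck = flag_disagreements(reviews)
```

Entries that land in `to_recheck` are good candidates for reading the AI's explanation closely, since a large gap can point to a flaw in either assessment.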

Integration and workflow considerations

The seamless integration of an AI judging platform into an existing competition workflow is critical for success. A platform that requires significant manual data entry or disrupts existing processes will likely be met with resistance. Integration capabilities vary widely among platforms.

Many platforms offer APIs (Application Programming Interfaces) that allow them to connect with other systems, such as submission management platforms (e.g., Submittable), event registration platforms (e.g., Eventbrite), and communication tools (e.g., Mailchimp). This allows for automated data transfer and streamlined workflows. Check compatibility with your existing tools before committing to a platform.

Data import/export capabilities are also important. You should be able to easily import submissions from your existing system and export scores and reports for analysis. Ensure the platform supports common data formats, such as CSV and Excel. Data security is paramount, especially when dealing with sensitive information. Choose a platform that offers robust security features and complies with relevant data privacy regulations.
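
A CSV round trip of this kind can be tried out with nothing beyond Python's standard `csv` module. In the sketch below, the column names (`entry_id`, `title`, `category`, `score`) and the sample rows are assumptions; a real platform's export will define its own schema. In-memory strings stand in for files to keep the example self-contained.

```python
import csv
import io


def load_submissions(csv_text: str) -> list[dict[str, str]]:
    """Parse CSV text with a header row into one dict per submission."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def export_scores(rows: list[dict], fieldnames: list[str]) -> str:
    """Serialize score rows to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical export from a submission system. The quoted title contains a comma.
SAMPLE = 'entry_id,title,category\ne1,"Harbor, at Dusk",photo\ne2,Night Market,photo\n'

submissions = load_submissions(SAMPLE)

# Hypothetical scores keyed by entry ID, as a judging step might produce them.
scores = {"e1": 74.0, "e2": 81.5}
score_rows = [
    {"entry_id": s["entry_id"], "score": scores[s["entry_id"]]} for s in submissions
]
scores_csv = export_scores(score_rows, ["entry_id", "score"])
```

Using a CSV parser rather than splitting on commas matters in practice: titles and free-text fields routinely contain commas and quotes, as the first sample row does.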

User training is another consideration. The platform should be easy to use for both judges and organizers. Look for platforms that offer comprehensive documentation and support resources. Technical support should be readily available in case of issues. A responsive and knowledgeable support team can save you significant time and frustration.
