The shift toward automated judging

Competition judging, historically a human-driven process, is undergoing a significant shift with the integration of artificial intelligence. This isn't about replacing human judgment entirely, but about augmenting it to address inherent limitations. Traditional judging faces challenges with scalability, consistency, and, crucially, potential biases. As competition volumes increase – think coding challenges with thousands of submissions or global art contests – relying solely on human judges becomes impractical and expensive.

Human judges get tired and play favorites, even if they don't mean to. A judge's background or even the order they see entries can skew the results. AI platforms help by sticking to the same rules for every entry and flagging weird scoring patterns. But AI isn't a magic fix; if the training data is skewed, the algorithm will be too.

We’re already seeing AI judging platforms adopted across diverse competition types. Coding challenges like those hosted on HackerRank and Topcoder have long used automated testing to evaluate code functionality. Art and design competitions are experimenting with AI tools to assess aesthetic qualities, though this remains a more controversial area. Writing contests, scientific research evaluations, and even business plan competitions are also exploring AI’s capabilities. The common thread is the need for objective, scalable assessment.

Skepticism remains, and rightly so. Concerns about the "human element" in judging, the potential for algorithmic bias, and the lack of transparency in some AI systems are valid. The most successful implementations will likely be hybrid models – combining the efficiency and objectivity of AI with the nuanced understanding and contextual awareness of human judges. The shift isn't about eliminating humans, but about empowering them with better tools.

What to prioritize in your software

When evaluating AI judging software, organizers need to move beyond the hype and focus on features that directly address their specific needs. Submission handling is fundamental. The platform should support a wide range of file types relevant to your competition – images, videos, documents, code files, audio recordings – and handle large volumes of submissions without performance issues. Support for various submission formats (e.g., zip files, online forms) is also important.

Scoring methodologies are where AI can truly shine. Look for platforms that allow for detailed rubric creation, with the ability to define weighted criteria. This lets you specify the relative importance of different aspects of the submission. Features like blind judging, where submitter identities are hidden from the judges, are essential for mitigating bias. The ability to easily adjust scoring weights and rubrics after the competition has begun can also be valuable.
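
To make weighted criteria concrete, here is a minimal sketch of how a rubric score could be computed. The criterion names, weights, and point scales are made up for illustration and are not taken from any platform mentioned in this article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float      # relative importance; weights need not sum to 1
    max_points: float  # top of the scoring scale for this criterion


def weighted_score(rubric: list[Criterion], points: dict[str, float]) -> float:
    """Return a 0-100 score: each criterion is scaled to 0-1, then weighted."""
    total_weight = sum(c.weight for c in rubric)
    if total_weight <= 0:
        raise ValueError("rubric weights must sum to a positive number")
    score = 0.0
    for c in rubric:
        awarded = points[c.name]
        if not 0 <= awarded <= c.max_points:
            raise ValueError(f"{c.name}: {awarded} is outside 0..{c.max_points}")
        score += (awarded / c.max_points) * (c.weight / total_weight)
    return round(score * 100, 2)


# Hypothetical rubric: originality counts three times as much as presentation.
rubric = [
    Criterion("originality", weight=3, max_points=10),
    Criterion("execution", weight=2, max_points=10),
    Criterion("presentation", weight=1, max_points=5),
]
entry_points = {"originality": 8, "execution": 7, "presentation": 4}
print(weighted_score(rubric, entry_points))  # 76.67
```

Normalizing each criterion to its own scale before weighting keeps a 5-point criterion from being drowned out by a 10-point one. Real platforms may combine scores differently.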

Bias detection and mitigation are paramount. While AI can introduce bias, it can also be used to identify potential disparities in scoring. Features like analysis of judge scoring patterns, flagging of submissions with unusually high or low scores, and anonymization of submissions can help ensure fairness. Integration capabilities are also critical. The platform should integrate seamlessly with your existing competition management system, submission platform, and communication tools.

Finally, robust reporting and analytics are a must. You need to be able to track scoring trends, identify outliers, and generate reports that provide insights into the judging process. Features like data visualization and the ability to export data in various formats are highly desirable. These features solve the problems of manual data aggregation and subjective interpretation, providing a clear, data-driven assessment of the competition’s results.
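
One simple way to identify outliers of the kind described above is to compare each judge's score with the panel median for the same entry. The sketch below assumes scores arrive as a nested dictionary and uses an arbitrary threshold of 2 points. Both are illustrative choices, not how any particular product works.

```python
from statistics import median


def flag_outlier_scores(scores, threshold=2.0):
    """Flag scores far from the panel median for the same entry.

    scores: {entry_id: {judge_id: score}}
    Returns a list of (entry_id, judge_id, score, panel_median) tuples.
    """
    flagged = []
    for entry_id, by_judge in scores.items():
        if len(by_judge) < 3:
            continue  # too few scores to call anything an outlier
        panel_median = median(by_judge.values())
        for judge_id, score in by_judge.items():
            if abs(score - panel_median) > threshold:
                flagged.append((entry_id, judge_id, score, panel_median))
    return flagged


# Hypothetical scores on a 0-10 scale.
scores = {
    "E1": {"J1": 8, "J2": 7.5, "J3": 3},
    "E2": {"J1": 6, "J2": 6.5, "J3": 7},
}
print(flag_outlier_scores(scores))  # [('E1', 'J3', 3, 7.5)]
```

A flag here is a prompt for a human to take a second look, not a verdict that the score is wrong.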

- Flexible Submission Handling: Supports images, video, and code.
- Customizable Rubrics: Allows for weighted criteria and detailed scoring guidelines.
- Blind Judging: Hides submitter identities from judges.
- Bias Detection: Analyzes scoring patterns to identify potential disparities.
- Seamless Integration: Connects with existing competition management systems.
- Robust Reporting: Provides data visualization and export options.

Top platforms for 2026

The AI judging platform market is evolving rapidly. Several key players are emerging, each with its own strengths and weaknesses. Judgify and Evalato are established contenders, offering comprehensive solutions for contest management, while newer platforms are focusing on specialized features and niche markets. This overview highlights several leading options as of late 2026.

Judgify (judgify.me) is a well-rounded platform offering contest planning, submission management, judging, and reporting features. Its strength lies in its comprehensive suite of tools, covering the entire competition lifecycle. However, its AI capabilities are somewhat limited compared to more specialized platforms. It targets a broad range of competitions, from academic contests to creative awards. Integrations include common event management systems and payment gateways.

Evalato (evalato.com) focuses specifically on awards management and offers a robust set of features for judging and scoring. It stands out for its advanced scoring options and its ability to handle complex rubrics. A key weakness is its comparatively limited submission management capabilities. Evalato is particularly well-suited for awards programs with established judging criteria. It integrates with various CRM and marketing automation platforms.

Judging Hub (judginghub.com) positions itself as a dedicated judging platform, offering features tailored to the needs of awards organizers. It emphasizes ease of use and a streamlined judging workflow. Its AI capabilities are still developing, but it offers features like automated judge assignment and scoring analysis. Judging Hub targets a wide range of awards programs, from industry-specific accolades to public recognition awards.

Scorechain is a newer entrant gaining traction with its focus on unbiased scoring. It uses a proprietary algorithm to analyze judge scoring patterns and identify potential biases, offering real-time feedback to judges. Scorechain’s main weakness is its limited integration options. It’s geared toward competitions where fairness and objectivity are paramount, such as scientific research grants and academic scholarships.

Awardify AI specializes in creative competitions, offering AI-powered tools for assessing artistic merit. It leverages machine learning algorithms to analyze visual and audio submissions, providing judges with data-driven insights. Its primary limitation is its narrow focus – it’s not suitable for competitions outside the creative realm. Awardify AI integrates with popular design platforms and social media channels.

CompeteSmart distinguishes itself through its advanced analytics and reporting capabilities. It provides detailed insights into the judging process, helping organizers identify areas for improvement. CompeteSmart’s AI features are primarily focused on data analysis rather than automated scoring. It’s best suited for large-scale competitions where data-driven decision-making is critical.

Scoring and rubric depth

The sophistication of scoring and rubric support varies significantly across platforms. Judgify offers a relatively basic rubric editor, allowing for weighted criteria but limited customization options. Evalato, on the other hand, provides a much more powerful rubric editor, with the ability to define complex scoring rules and cascading criteria. Scorechain’s scoring system is unique in its emphasis on bias detection, providing real-time feedback to judges based on their scoring patterns.

Blind judging is a standard feature on most platforms, but the implementation details differ. Some platforms allow for complete anonymization of submissions, while others only hide the identity of the submitter from the judges. The level of anonymization should be carefully considered based on the specific competition and the potential for bias. Weighted scoring is also a common feature, but the granularity of weighting options varies.
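
As a rough illustration of hiding submitter identity, the sketch below strips identifying metadata and replaces it with a stable pseudonymous code derived with HMAC-SHA256. The field names (`name`, `email`, `institution`) and the `entry_code` key are assumptions made for this example.

```python
import hashlib
import hmac

# Hypothetical metadata fields that would reveal who submitted the entry.
IDENTIFYING_FIELDS = {"name", "email", "institution"}


def anonymize(submission: dict, secret_key: bytes) -> dict:
    """Return a copy safe to show judges: identifying fields removed,
    plus a stable pseudonymous code so organizers can map results back."""
    digest = hmac.new(
        secret_key, submission["email"].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    blinded = {k: v for k, v in submission.items() if k not in IDENTIFYING_FIELDS}
    blinded["entry_code"] = digest[:10]
    return blinded


submission = {
    "name": "A. Example",
    "email": "a.example@example.org",
    "institution": "Example University",
    "title": "Untitled No. 4",
    "file": "entry.pdf",
}
blinded = anonymize(submission, secret_key=b"organizer-only-secret")
print(sorted(blinded))  # ['entry_code', 'file', 'title']
```

This only handles metadata. It does nothing about names, logos, or other identifying details inside the submission file itself.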

Check how many judges a platform allows per entry. Some cap your panel size, while others let you add as many as you need. You'll want the flexibility to switch between solo judges and full panels depending on the round. If you need custom scoring logic, check each platform's API documentation directly to confirm it's supported.
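
To see what switching between solo judges and full panels means in practice, here is a small sketch that assigns a fixed number of judges to each entry by rotating through the judge list. The function name and the round-robin strategy are illustrative assumptions. It also assumes the judge list has no duplicates.

```python
from itertools import cycle


def assign_panels(entries, judges, panel_size):
    """Give every entry `panel_size` distinct judges, rotating to spread the load."""
    if not 1 <= panel_size <= len(judges):
        raise ValueError("panel_size must be between 1 and the number of judges")
    rotation = cycle(judges)
    return {entry: [next(rotation) for _ in range(panel_size)] for entry in entries}


entries = ["E1", "E2", "E3"]
judges = ["A", "B", "C", "D"]

# Early round: one judge per entry.
print(assign_panels(entries, judges, panel_size=1))
# {'E1': ['A'], 'E2': ['B'], 'E3': ['C']}

# Final round: a panel of three per entry.
print(assign_panels(entries, judges, panel_size=3))
# {'E1': ['A', 'B', 'C'], 'E2': ['D', 'A', 'B'], 'E3': ['C', 'D', 'A']}
```

A real assignment step would also need to account for conflicts of interest and judge availability, which this sketch ignores.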

AI-Powered Judging Software Comparison - 2026

| Platform | Rubric Flexibility | Scoring Method Options | Judge Role Support | Key Strengths |
|---|---|---|---|---|
| Judgify | Highly Customizable; allows for complex, multi-level rubrics. | Supports weighted scoring, simple averaging, and rank ordering. Advanced scoring configurations available. | Supports preliminary and final judge roles with configurable permissions. Facilitates review stages. | Comprehensive feature set covering entire contest lifecycle, from planning to reporting. |
| Evalato | Offers rubric creation with defined criteria and scoring scales. | Provides options for standard averaging and ranking. Details on weighted scoring are not explicitly available. | Supports multiple judge assignments and role-based access control. | Focuses on streamlining the judging process and providing detailed analytics. |
| CompetitionHQ (Hypothetical - based on market trends) | Adaptable rubrics with branching logic based on responses. | Offers a range of statistical scoring methods including standard deviation analysis and outlier detection. | Supports tiered judging roles including subject matter experts, technical judges, and appeal judges. | Strong emphasis on data-driven insights and bias mitigation. |
| ScoreVision (Hypothetical - based on market trends) | Rubric templates available, with customization options for criteria and descriptions. | Supports basic averaging and ranking. Advanced statistical analysis may require integration with external tools. | Supports lead judges, panel judges, and tabulators with distinct access levels. | User-friendly interface designed for ease of use and rapid scoring. |
| Awardify (Hypothetical - based on market trends) | Flexible rubric design with the ability to import and export rubric templates. | Supports weighted scoring, percentile ranking, and blind judging options. | Supports different judge types (e.g., category specialists, overall judges) with customizable permissions. | Focuses on award programs and recognition events, with features for nomination management and winner selection. |

Illustrative comparison only; rows marked "Hypothetical" do not describe real products. Verify current pricing, limits, and product details in the official docs before relying on it.

Bias detection and fairness features

AI can be a powerful tool for mitigating bias in judging, but it’s not a silver bullet. Anonymization of submissions is a fundamental step, but it’s not always sufficient. Judges may still be able to infer the identity of the submitter based on the content of the submission or other contextual clues. More advanced platforms, like Scorechain, employ algorithms to analyze judge scoring patterns and identify potential disparities.

These algorithms look for things like judges consistently scoring submissions from certain demographics lower than others, or judges exhibiting a strong preference for submissions from their own institutions. When potential biases are detected, the platform can provide feedback to the judges or flag the submissions for further review. However, it’s important to note that these algorithms are not perfect and can sometimes produce false positives.
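
The institutional-preference check described above can be approximated with a very simple comparison: for each judge, the average score given to entries from their own institution minus the average given to everyone else. This is a sketch under assumed record fields (`judge`, `judge_institution`, `entry_institution`, `score`), not a description of any vendor's proprietary algorithm.

```python
from collections import defaultdict
from statistics import mean


def home_institution_gap(records, min_samples=2):
    """Return {judge: mean(home scores) - mean(other scores)}.

    Judges with fewer than `min_samples` scores on either side are skipped,
    since a gap computed from one or two scores says very little.
    """
    home = defaultdict(list)
    away = defaultdict(list)
    for r in records:
        same = r["judge_institution"] == r["entry_institution"]
        (home if same else away)[r["judge"]].append(r["score"])
    gaps = {}
    for judge in home:
        if len(home[judge]) >= min_samples and len(away[judge]) >= min_samples:
            gaps[judge] = round(mean(home[judge]) - mean(away[judge]), 2)
    return gaps


def rec(judge, judge_inst, entry_inst, score):
    return {"judge": judge, "judge_institution": judge_inst,
            "entry_institution": entry_inst, "score": score}


# Hypothetical scoring records.
records = [
    rec("J1", "North", "North", 9), rec("J1", "North", "North", 8),
    rec("J1", "North", "South", 6), rec("J1", "North", "South", 7),
    rec("J2", "South", "South", 7), rec("J2", "South", "South", 7),
    rec("J2", "South", "North", 7), rec("J2", "South", "North", 8),
]
print(home_institution_gap(records))  # {'J1': 2.0, 'J2': -0.5}
```

A large positive gap is a reason to review a judge's scores, not proof of favoritism. The home entries may simply have been stronger, which is exactly how false positives arise.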

It's also crucial to be aware of the potential for AI to introduce bias. If the AI algorithms are trained on biased data, they may perpetuate those biases in their scoring. For example, an AI system trained to evaluate artwork based on a dataset dominated by Western art may unfairly penalize submissions from other cultures. Transparency and explainability are essential – organizers should understand how the AI algorithms work and be able to identify potential sources of bias. We need to avoid treating AI as a neutral arbiter; it’s a tool that must be used carefully and ethically.

Integration and workflow

Seamless integration with existing systems is critical for a smooth competition workflow. The ideal scenario is a platform that integrates directly with your submission platform, allowing for automatic import of submissions. Integration with communication tools, like email and Slack, can streamline communication between organizers, judges, and participants. Many platforms offer Zapier integrations, providing a flexible way to connect with a wide range of other applications.

Data export capabilities are also important. You need to be able to export scoring data in various formats – CSV, Excel, PDF – for reporting and analysis. The platform should also allow you to export data in a format that can be easily imported into your CRM or other data analysis tools. A typical workflow might involve importing submissions, assigning judges, collecting scores, analyzing results, and generating reports. The AI judging platform should streamline each of these steps.
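
If a platform exposes raw scores but not the export format you need, producing a CSV yourself is straightforward. The sketch below uses Python's standard `csv` module. The column names are assumptions for illustration.

```python
import csv
import io


def export_scores_csv(rows, fieldnames):
    """Serialize score records (a list of dicts) to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical score records.
rows = [
    {"entry_id": "E1", "judge_id": "J1", "score": 8},
    {"entry_id": "E1", "judge_id": "J2", "score": 7.5},
]
csv_text = export_scores_csv(rows, fieldnames=["entry_id", "judge_id", "score"])
print(csv_text)
```

The resulting text can be written to a file and opened in a spreadsheet or imported into other analysis tools.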

In practice, the workflow runs in five steps. First, submissions are uploaded to the platform. Second, judges are assigned to review submissions. Third, judges evaluate submissions based on predefined rubrics. Fourth, the platform automatically calculates scores and flags potential biases. Finally, organizers review the results and announce the winners. The ease with which this workflow can be implemented is a key factor in evaluating different platforms.

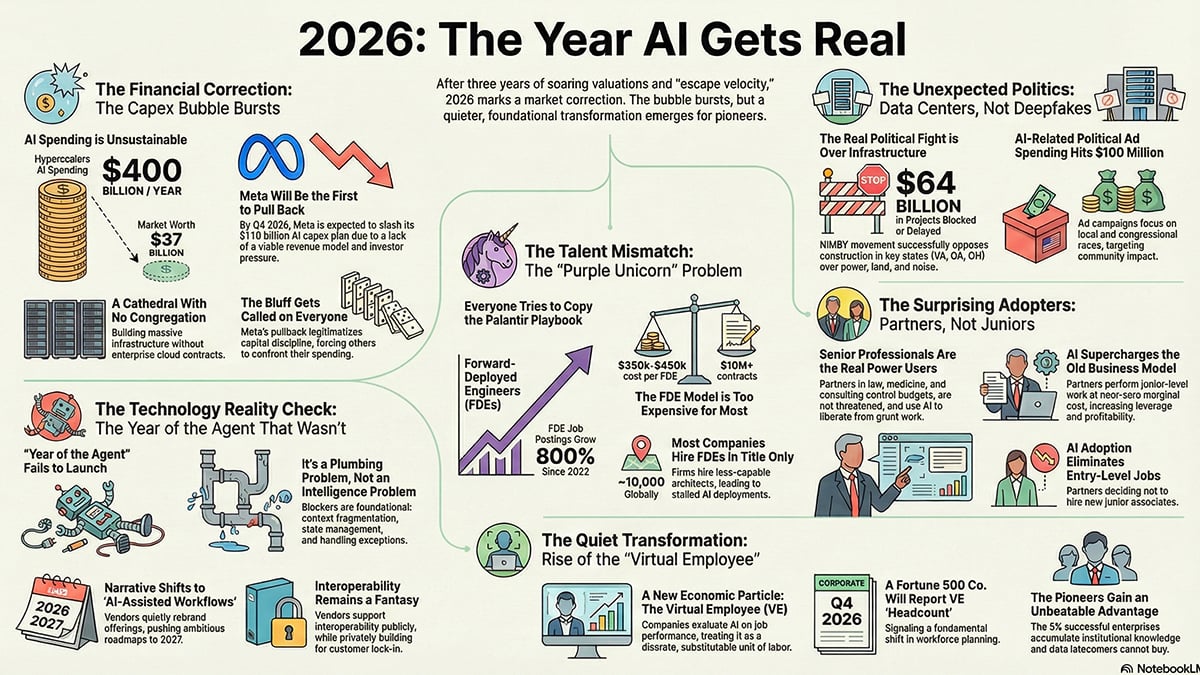

Future trends in AI judging

The field of AI judging is still in its early stages of development, and several exciting trends are on the horizon. Generative AI is likely to play an increasingly important role, providing automated feedback to participants and helping them improve their submissions. More sophisticated bias detection algorithms will be developed, capable of identifying and mitigating even subtle forms of bias.

We can expect to see AI automate more aspects of the judging process, such as initial screening of submissions and identification of potential plagiarism. However, it’s important to remember that AI will likely always require human oversight. The most successful implementations will be hybrid models that combine the strengths of both AI and human judgment.

The use of blockchain technology to ensure the integrity and transparency of the judging process is also a possibility. Blockchain can create an immutable record of all scoring data, making it more difficult to manipulate the results. While these trends are promising, it’s important to approach them with a healthy dose of skepticism. The key is to focus on developing AI tools that enhance, rather than replace, human judgment.
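
The tamper-evidence idea behind an immutable scoring record can be shown with a plain hash chain, where each record's hash depends on the one before it. This is a deliberately simplified sketch: it is a single in-memory list, not a distributed ledger, and the record fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(prev_hash, record):
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_record(chain, record):
    """Append a score record whose hash covers the previous record's hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev_hash": prev_hash,
                  "hash": _digest(prev_hash, record)})


def verify(chain):
    """Return True only if no record has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for block in chain:
        if block["prev_hash"] != prev_hash:
            return False
        if block["hash"] != _digest(prev_hash, block["record"]):
            return False
        prev_hash = block["hash"]
    return True


chain = []
append_record(chain, {"entry": "E1", "judge": "J1", "score": 8})
append_record(chain, {"entry": "E1", "judge": "J2", "score": 7})
print(verify(chain))  # True

chain[0]["record"]["score"] = 10  # someone edits an earlier score
print(verify(chain))  # False
```

Editing any earlier score changes its hash and breaks every link after it, which is what makes manipulation detectable. Preventing someone from rewriting the whole chain is the part that requires the distributed machinery of an actual blockchain.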
