Do you have the right people in-house?

Before you hire outside help, look at who you already have. Internal staff often handle small innovation challenges or employee awards well, especially when the rules are simple. But as an event grows, relying on the same three people from HR to pick winners becomes a liability.

Just because you can train your staff doesn't mean you should. Building a real judging program is expensive and time-consuming. If the training is weak, the judging will be too, which insults the participants and makes the organization look amateur. You have to decide if your team actually has the time to do this right.

Watch for gaps in three skills: recognizing and mitigating personal bias, applying scoring rubrics consistently across all entries, and giving constructive feedback. Without specific training in these areas, judging can become subjective and unfair. Favoritism, conscious or unconscious, is a serious risk. Inexperience with evaluating a diverse range of submissions can also lead to inconsistent scores and inaccurate results.

The hidden price of DIY judging

Many organizations underestimate the true expense of building and maintaining an internal judging program. It extends far beyond the time judges spend evaluating entries during the event itself. Significant hours are required for initial training, ongoing professional development, the creation and maintenance of judging materials (rubrics, guidelines, etc.), and quality control measures to ensure consistency.
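
A quality-control check can start very simply. The sketch below shows one possible approach, not a standard method. For each entry, it takes the median score across the panel. It then measures how far each judge sits above or below that median on average. The data shape, the function name `flag_outlier_judges`, and the one-point tolerance are all hypothetical choices made for illustration.

```python
from statistics import mean, median

# Hypothetical data shape: scores[judge][entry] = score on a shared 1-10 rubric.
Scores = dict[str, dict[str, float]]


def flag_outlier_judges(scores: Scores, tolerance: float = 1.0) -> dict[str, float]:
    """Return judges whose scores run consistently high or low.

    The reference point for each entry is the panel median, so one unusually
    harsh or lenient judge cannot drag the baseline toward themselves.
    The returned value is the judge's average signed offset from that median.
    """
    # Collect every score each entry received, across all judges who scored it.
    by_entry: dict[str, list[float]] = {}
    for judge_scores in scores.values():
        for entry, value in judge_scores.items():
            by_entry.setdefault(entry, []).append(value)
    entry_median = {entry: median(values) for entry, values in by_entry.items()}

    flagged: dict[str, float] = {}
    for judge, judge_scores in scores.items():
        if not judge_scores:  # a judge with no scores has nothing to compare
            continue
        # Average gap between this judge and the panel median on the same entries.
        offset = mean(value - entry_median[entry] for entry, value in judge_scores.items())
        if abs(offset) > tolerance:
            flagged[judge] = round(offset, 2)
    return flagged


# Placeholder scores: judge_c rates every entry 2-3 points above the others.
scores = {
    "judge_a": {"e1": 7, "e2": 8, "e3": 6},
    "judge_b": {"e1": 7, "e2": 7, "e3": 6},
    "judge_c": {"e1": 10, "e2": 10, "e3": 9},
}
print(flag_outlier_judges(scores))  # {'judge_c': 2.67}
```

A flag should prompt a conversation or extra calibration. It is not proof of bias. With small panels, or with entries that only one judge scored, the median carries little information.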

Let's break down the costs. Direct costs include training (workshops, online courses), software licenses for a judging platform if you use one, and administrative time. Indirect costs are often larger: lost productivity from staff pulled away from their primary responsibilities, errors caused by inexperience, and the overhead of managing the whole process. Account for both before you decide.
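
A full accounting can be as simple as putting a price on staff time alongside the out-of-pocket items. The sketch below assumes you can estimate hours and a loaded hourly cost for the staff involved. The class name, its fields, and the example numbers are placeholders, not benchmarks. Costs that are harder to price, such as errors from inexperience, are left out.

```python
from dataclasses import dataclass


@dataclass
class JudgingCosts:
    """Hypothetical cost model for an in-house judging program (all inputs are estimates)."""

    training: float           # workshops, online courses
    software_licenses: float  # judging platform, if you use one
    admin_hours: float        # staff hours spent organizing the program
    judge_hours: float        # staff hours spent training for and doing the judging
    hourly_cost: float        # loaded cost of one staff hour

    def direct(self) -> float:
        # Out-of-pocket spend plus administrative time.
        return self.training + self.software_licenses + self.admin_hours * self.hourly_cost

    def indirect(self) -> float:
        # Productivity lost while staff judge instead of doing their primary work.
        return self.judge_hours * self.hourly_cost

    def total(self) -> float:
        return self.direct() + self.indirect()


# Placeholder figures for illustration only.
costs = JudgingCosts(training=2000, software_licenses=1500,
                     admin_hours=40, judge_hours=120, hourly_cost=50)
print(costs.direct(), costs.indirect(), costs.total())  # 5500 6000 11500
```

Plug in your own estimates to see whether staff time or out-of-pocket spend dominates.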

Staff morale is another factor. Is judging viewed as a valuable opportunity for professional development, or as an unwelcome burden? If it’s perceived negatively, it can lead to disengagement and lower-quality judging. It’s important to incentivize participation and recognize the contributions of your internal judges. A poorly managed program can quickly become a source of resentment.

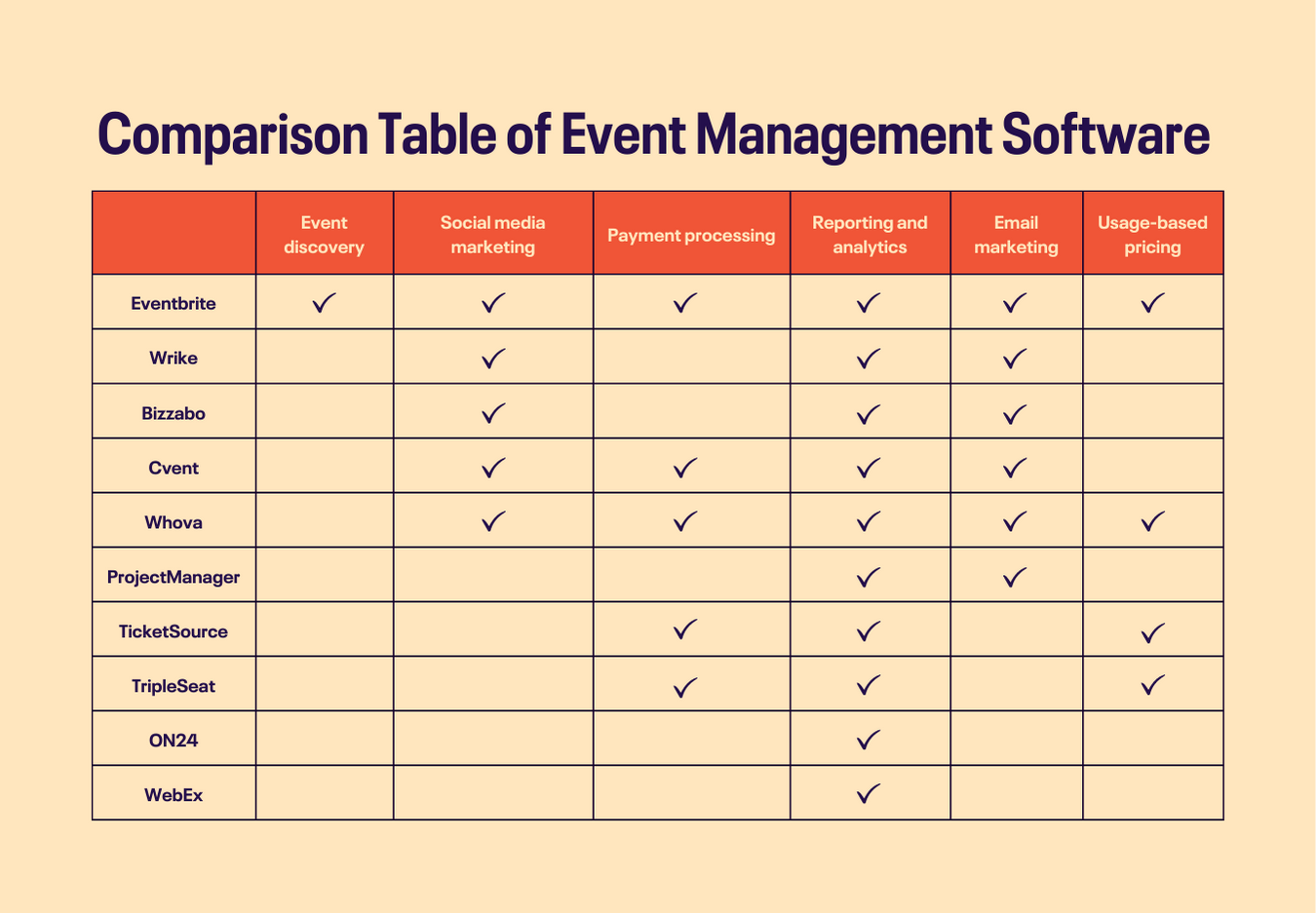

Internal vs. External Judging: A Comparative Assessment

| Factor | Internal Judging | External Judging |
|---|---|---|
| Cost | Lower initial cost | Potentially higher cost |
| Control | High degree of control over judge selection & training | Reduced control over judge selection |
| Expertise | Dependent on existing staff skillsets; may require significant training | Access to specialized expertise relevant to the competition |
| Implementation Speed | Faster implementation, leveraging existing personnel | Slower implementation; requires sourcing and onboarding |
| Bias Mitigation | Potential for inherent bias due to internal relationships | Reduced potential for bias due to judge independence |
| Scalability | May be limited by staff availability | Easily scalable to accommodate event size |
| Consistency | Requires robust training to ensure consistent scoring | Potentially higher consistency due to standardized judging practices |
| Administrative Overhead | Higher administrative burden for training and management | Lower administrative burden; vendor manages judging process |

This is a qualitative comparison. Costs and capabilities vary by provider, so confirm current details with any vendor before making a decision.

When it makes sense to hire pros

In several situations, hiring professional judges is clearly the better option. Events with complex scoring rubrics, particularly rubrics that require specialized knowledge, are prime candidates. Consider technical robotics competitions like those governed by the Robotics Education & Competition Foundation (RECF). There, judges need a solid grasp of engineering principles and of the competition rules. Events like these benefit from judges who already have that experience.

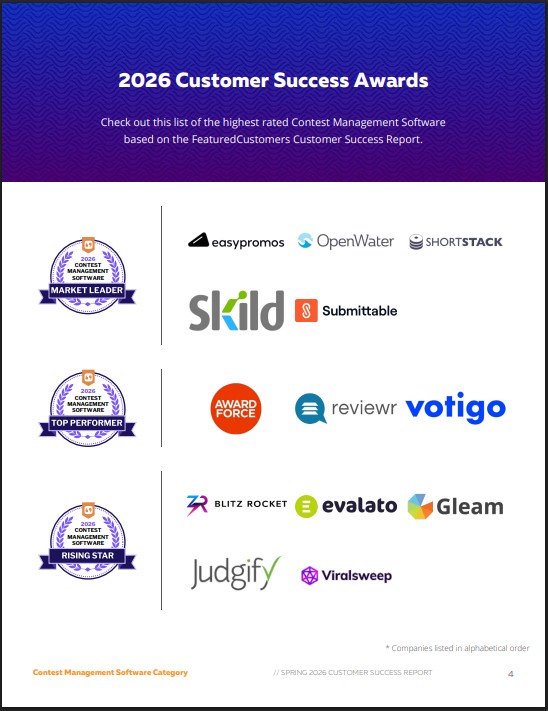

High-stakes events demand a higher level of impartiality and expertise. These include events offering significant scholarships, substantial cash prizes, or career-altering opportunities. In these situations, the perception of fairness is paramount. Hiring professional judges signals a commitment to objectivity and can enhance the event's credibility. Software platforms such as RocketJudge and Judgify.me can support the process by managing submissions and automating scoring.

Pros bring a level of distance that internal staff can't match. They are used to keeping results confidential and sticking to the rubric even when things get subjective. In creative categories, where 'good' is a matter of opinion, an outsider's eye makes the result harder to argue with. Outside judges also often spot flaws in your scoring system that you're too close to see.

How to vet a judging service

Deciding to outsource judging is only the first step; choosing the right provider takes careful vetting. Start by asking about their experience with your type of event. A service that specializes in science fairs may not be the best fit for a marketing awards competition. Look for providers with a demonstrable track record at similar events.

Ask about their judge training. What qualifications do their judges hold? What training do they receive on bias awareness, scoring consistency, and event-specific criteria? A reputable service will have a rigorous training program. Data security is another priority. Ask how they will protect the sensitive information participants submit, and what measures they have in place to prevent breaches.

Finally, carefully review contract terms and pricing models. What’s included in the base price? Are there additional fees for travel, lodging, or customization? What level of support is provided? Be wary of providers who make overly broad promises or lack transparency about their fees and procedures. A clear, detailed contract is essential to avoid misunderstandings and ensure a smooth event.

- Experience: Have they worked on your type of event before?

- Judge training: What qualifications and bias training do their judges have?

- Security: How do they protect sensitive data?

- Contract: Is the contract clear, detailed, and transparent about fees?

Mixing internal and external teams

A hybrid approach that combines internal and external judges can often deliver the best of both worlds. For example, internal judges can handle the preliminary rounds and narrow a large pool of submissions to a shortlist. External judges then focus on the finalists and provide a more in-depth, objective assessment. This reduces the external judges' workload while making use of your internal team's knowledge.
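
The two-stage flow described above can be sketched in a few lines. This sketch rests on several assumptions. Scores are plain averages, and the shortlist size is fixed in advance. The internal scores deliberately do not carry into the final ranking, so external scores alone decide the order of the finalists. The function names and data are hypothetical.

```python
from statistics import mean


def shortlist(internal_scores: dict[str, list[float]], size: int) -> list[str]:
    """Stage 1 (internal judges): keep the `size` entries with the highest average."""
    ranked = sorted(internal_scores, key=lambda e: mean(internal_scores[e]), reverse=True)
    return ranked[:size]


def final_ranking(finalists: list[str], external_scores: dict[str, list[float]]) -> list[str]:
    """Stage 2 (external judges): rank finalists on external scores only."""
    return sorted(finalists, key=lambda e: mean(external_scores[e]), reverse=True)


# Placeholder scores for four entries, A to D.
internal = {"A": [6, 7], "B": [9, 8], "C": [5, 5], "D": [8, 8]}
finalists = shortlist(internal, size=2)       # ['B', 'D']
external = {"B": [7, 8], "D": [9, 9]}         # only finalists reach external judges
winners = final_ranking(finalists, external)  # ['D', 'B']
print(finalists, winners)
```

Resetting the scores at the final stage is a design choice. It keeps the outcome independent of internal relationships, at the cost of discarding information from the preliminary round.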

Another effective strategy is to use external consultants to train your internal judges. This provides your staff with the skills and knowledge they need to evaluate entries effectively, while still benefiting from the expertise of seasoned professionals. This is particularly useful for events that are held regularly, allowing you to build internal capacity over time. It can also improve the quality of judging at all stages of the competition.

Clear roles and responsibilities are critical for a successful hybrid model. Define precisely which stages of judging will be handled internally and which will be outsourced. Establish clear communication channels, and make sure all judges, internal and external, are aligned on the scoring criteria and event objectives. Avoiding overlap and confusion is paramount.

Using software to stay organized

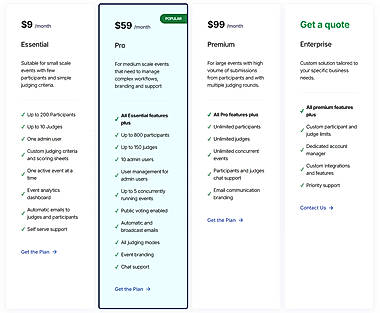

Technology is now integral to modern event judging, regardless of whether you employ internal or external judges. Digital scoring platforms like Submittable and Judgify offer significant advantages over traditional paper-based systems. These platforms streamline the process, reduce errors, and provide valuable data insights. Features like blind judging (hiding participant identities) and weighted scoring (assigning different weights to different criteria) can enhance objectivity and fairness.
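
Weighted scoring is a weighted average. Each criterion's score is multiplied by its weight, and the sum is divided by the total weight. A minimal sketch follows. The criteria, the weights, and the function name are hypothetical, and real platforms may normalize or round differently.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Combine per-criterion scores into one number using relative weights.

    Dividing by the total weight means the weights need not sum to 1, and
    with non-negative weights the result stays on the same scale as the scores.
    """
    if set(scores) != set(weights):
        raise ValueError("every criterion needs both a score and a weight")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[c] * weights[c] for c in scores) / total_weight


# Hypothetical rubric: innovation counts twice as much as presentation.
weights = {"innovation": 2, "feasibility": 1.5, "presentation": 1}
entry = {"innovation": 8, "feasibility": 6, "presentation": 9}
print(round(weighted_score(entry, weights), 2))  # 7.56
```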

Automated reporting capabilities save time and effort, providing you with detailed analytics on judging trends and participant performance. These insights can be used to improve the event in future years. However, the key is to choose a platform that is user-friendly and fits your specific needs. Avoid overly complex systems that require extensive training or are difficult to navigate.

Software is a tool, not a replacement for a brain. It helps organize the mess, but you still need people to provide feedback and understand the context of an entry. I don't think AI is ready to judge anything that requires a human touch or subjective taste yet.

- Blind Judging: Hides participant names and faces to keep things fair.

- Weighted Scoring: Allows different criteria to have varying importance.

- Automated Reporting: Provides data insights and analytics.


Bias Mitigation: A Critical Skill

Bias is an unavoidable aspect of human judgment, but it can be managed. Common types of judging bias include confirmation bias (favoring information that confirms existing beliefs), affinity bias (favoring individuals who are similar to oneself), and the halo effect (allowing a positive impression in one area to influence overall evaluation). Recognizing these biases is the first step towards mitigating their impact.

Judge training is essential. It should cover the common types of bias, strategies for recognizing and avoiding them, and the importance of applying scoring rubrics consistently. Blind judging, in which judges do not know who submitted an entry, also helps reduce bias. Clear, objective scoring rubrics provide a framework for evaluation and minimize subjective interpretation.
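
On the organizer's side, blind judging mostly comes down to two steps. First, replace names with random codes. Second, keep the code-to-name key away from judges until scoring is done. The sketch below assumes submissions arrive as a simple name-to-text mapping, which is a hypothetical data shape. It strips identity only from the metadata. If an entry's content names its author, that content still needs manual redaction.

```python
import secrets


def anonymize(entries: dict[str, str]) -> tuple[dict[str, str], dict[str, str]]:
    """Split submissions into what judges see and a private key for the organizer.

    entries maps participant name -> submission (hypothetical data shape).
    Returns (judge_view, key): judge_view maps random code -> submission,
    and key maps the same code -> participant name.
    """
    items = list(entries.items())
    # Shuffle so the order judges see doesn't mirror the original (e.g. alphabetical) order.
    secrets.SystemRandom().shuffle(items)

    judge_view: dict[str, str] = {}
    key: dict[str, str] = {}
    for participant, submission in items:
        code = secrets.token_hex(4)  # 8 random hex characters
        while code in key:           # regenerate on the rare collision
            code = secrets.token_hex(4)
        judge_view[code] = submission
        key[code] = participant
    return judge_view, key


judge_view, key = anonymize({"Alice": "Solar-powered cart", "Bob": "Recycling app"})
for code, submission in judge_view.items():
    print(code, submission)  # judges see only the code and the entry
# After scoring, the organizer looks up key[code] to reveal each winner.
```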

Establishing a process for addressing complaints or concerns about bias is also crucial. Participants should have a clear avenue for reporting potential issues, and these concerns should be investigated thoroughly. Ignoring complaints can erode trust and damage the event’s reputation. Even with the most experienced judges, proactive bias mitigation is a necessity.
