Judging in 2026: virtual, hybrid, or back to in-person?

Organizations moved to virtual events out of necessity after 2020, but the current shift is more deliberate. People want the energy of a physical room, yet digital tools are too cheap and far-reaching to scrap. I believe the best approach for 2026 isn't a binary choice; it's about how well you mix the two.

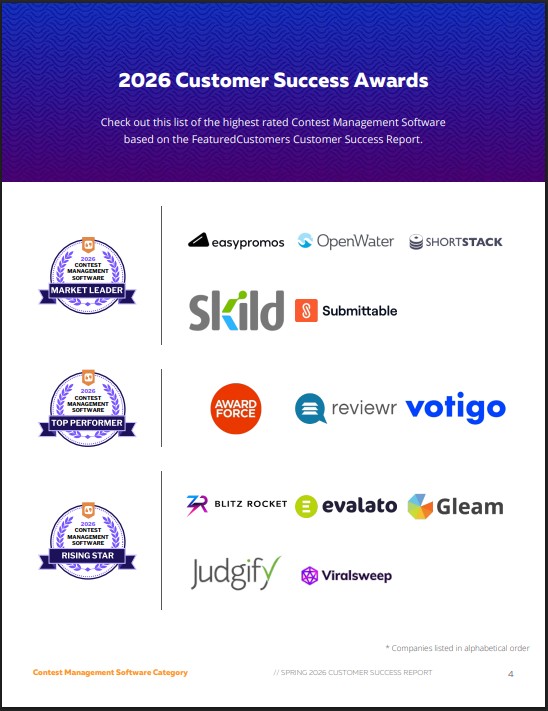

The contest management landscape is still evolving. Organizers are experimenting with different models, and there is no consensus yet on what counts as "best practice". This is especially true for judging, the core of any successful competition. Many organizers are still working out how to replicate the nuance of in-person evaluation in a digital environment, or how to fold remote judges into a hybrid setup.

Looking ahead to 2026, I expect virtual and hybrid formats to remain common; their convenience and scalability are too significant to ignore. The focus will likely shift from simply hosting events online to optimizing the experience for both participants and judges, and that optimization depends heavily on the underlying technology, specifically the contest management platform you choose.

What your platform actually needs

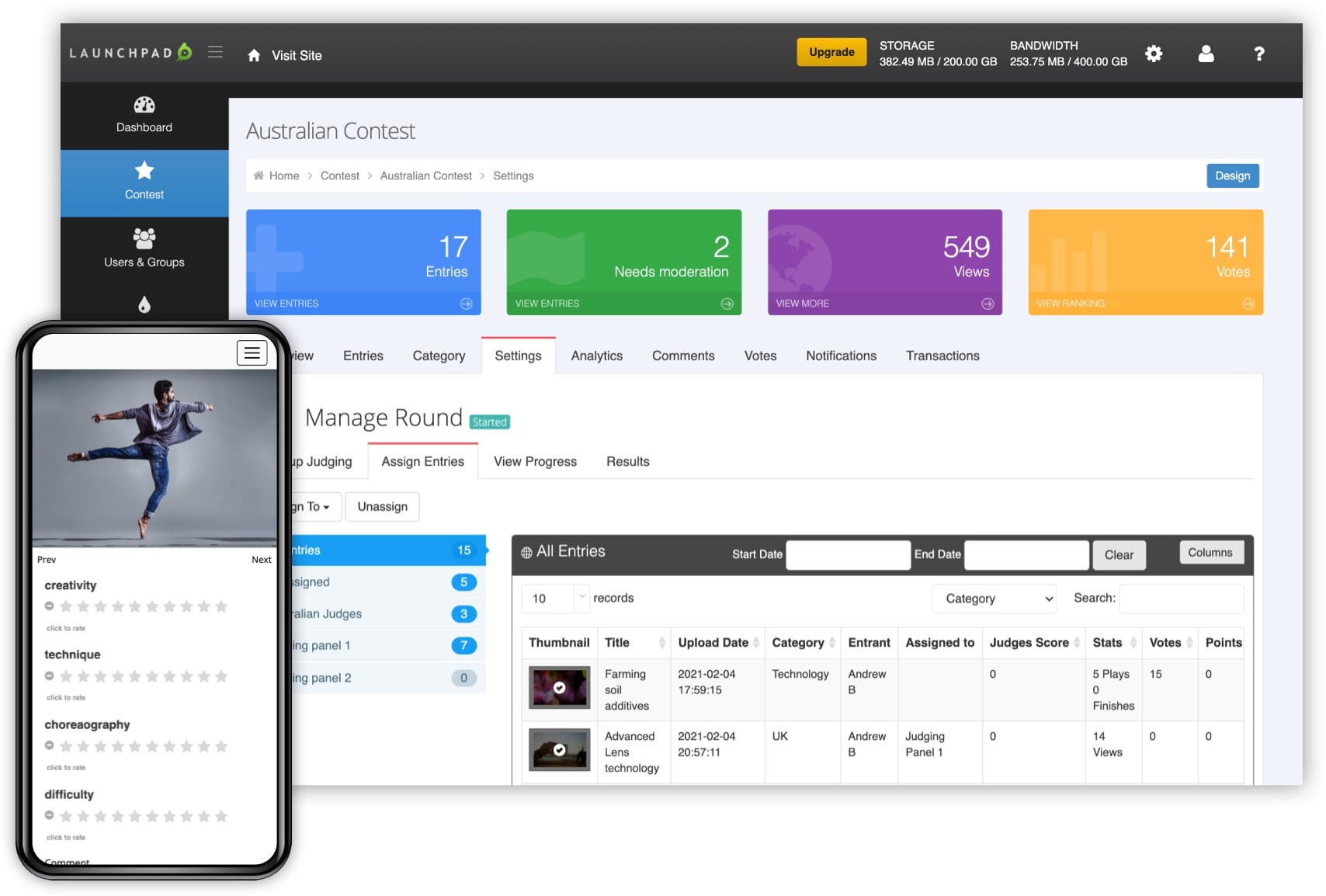

Regardless of whether a competition is fully virtual, hybrid, or primarily in-person, certain core features are non-negotiable. These include robust submission management – the ability to collect and organize entries efficiently – and secure file handling to protect sensitive materials. A well-designed judging interface is also critical, providing judges with the tools they need to evaluate submissions fairly and consistently.

Scoring and ranking functionality is, of course, essential. But it’s not just about tallying scores; the platform must support different scoring methods and weighting schemes. Finally, comprehensive reporting capabilities are needed to analyze results, identify trends, and demonstrate the value of the competition. Both Judgify and Evalato address these needs, though their approaches differ in certain respects.
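To make "different scoring methods" concrete, here is a minimal Python sketch comparing two ways of turning judges' scores into a ranking: averaging raw scores, and a Borda-style count that only looks at each judge's ordering. It is not how Judgify or Evalato compute rankings; the judge names, entries, and 1-10 scale are hypothetical, and the sketch assumes every judge scored every entry.

```python
from statistics import mean

# Hypothetical scores on a 1-10 scale: judge -> {entry: score}
scores = {
    "judge_a": {"entry_1": 10, "entry_2": 3, "entry_3": 2},
    "judge_b": {"entry_1": 6, "entry_2": 7, "entry_3": 5},
    "judge_c": {"entry_1": 6, "entry_2": 7, "entry_3": 4},
}


def rank_by_mean(scores):
    """Rank entries by their average raw score across all judges."""
    entries = sorted({entry for judged in scores.values() for entry in judged})
    averages = {e: mean(judged[e] for judged in scores.values()) for e in entries}
    # Highest average first; ties keep alphabetical order (sorted is stable).
    return sorted(averages, key=averages.get, reverse=True)


def rank_by_borda(scores):
    """Rank entries by Borda points: each judge gives n-1 points to their
    top entry, n-2 to the next, and so on, so only their ordering counts."""
    points = {}
    for judged in scores.values():
        ordered = sorted(judged, key=judged.get, reverse=True)
        n = len(ordered)
        for position, entry in enumerate(ordered):
            points[entry] = points.get(entry, 0) + (n - 1 - position)
    # Ties between point totals are not resolved in any principled way here.
    return sorted(points, key=points.get, reverse=True)


print(rank_by_mean(scores))   # ['entry_1', 'entry_2', 'entry_3']
print(rank_by_borda(scores))  # ['entry_2', 'entry_1', 'entry_3']
```

With this sample data the two methods disagree: one judge's very high score lifts entry_1 to the top of the average, while the Borda count favors entry_2, which two of the three judges preferred. That is why it helps when a platform lets you pick the method before judging starts.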

Judgify is built for speed and simplicity. It has branded portals and basic forms that just work. Evalato is the heavy lifter, designed for massive award shows with complex rules. Both keep your files secure and let you control who sees what, but they feel very different in practice.

- Submission Management

- Secure File Handling

- Judging Interface

- Scoring/Ranking

- Reporting

Judging Workflow: Virtual vs. Hybrid

The judging process itself changes significantly depending on the competition format. In a fully virtual environment, asynchronous judging is common: judges review submissions on their own schedule, before a set deadline. This allows for a wider pool of judges, potentially bringing more diverse perspectives to the evaluation. However, it also relies heavily on clear, well-documented submission materials, because judges cannot ask clarifying questions in real time.
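One practical wrinkle with asynchronous judging is that a single deadline falls at different local times for each judge. A minimal sketch, assuming the deadline is stored once in UTC and each judge's IANA time zone is known (the judges, zones, and date are hypothetical), uses Python's standard `zoneinfo` module to show every judge their own cutoff:

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo

# Hypothetical judging deadline, stored once in UTC as the single source of truth
DEADLINE_UTC = datetime(2026, 3, 15, 23, 59, tzinfo=timezone.utc)

# Hypothetical remote judges mapped to IANA time zone names
JUDGE_ZONES = {
    "judge_a": "America/New_York",
    "judge_b": "Europe/Berlin",
    "judge_c": "Asia/Tokyo",
}


def local_deadlines(deadline_utc, judge_zones):
    """Return the same instant expressed in each judge's local time."""
    return {
        judge: deadline_utc.astimezone(ZoneInfo(zone))
        for judge, zone in judge_zones.items()
    }


for judge, local in local_deadlines(DEADLINE_UTC, JUDGE_ZONES).items():
    print(f"{judge}: {local:%Y-%m-%d %H:%M %Z}")
```

For this date, the Berlin and Tokyo judges' cutoff already falls on March 16 locally, which is exactly the kind of detail that causes missed deadlines if a platform only displays UTC. Because `zoneinfo` reads the IANA time zone database, daylight-saving shifts (New York is already on EDT by mid-March 2026) are handled without extra code.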

Hybrid competitions introduce additional complexity. They often involve a blend of in-person deliberation among a core judging panel and digital scoring from remote judges. Coordinating these two elements effectively is crucial. Platforms need to facilitate seamless communication between in-person and remote judges, and ensure that all scores are integrated accurately. Logistical challenges, such as time zone differences and internet connectivity issues, must also be addressed.

A poorly designed platform can exacerbate these challenges. For example, if remote judges struggle to access submission materials or the scoring interface is clunky, it can lead to inconsistent evaluations and a frustrating experience. The ideal platform will offer a unified workflow that minimizes friction and maximizes efficiency, regardless of where the judges are located.
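Integrating scores accurately in a hybrid setup mostly comes down to bookkeeping: every assigned judge/entry pair should end up with exactly one score, whichever channel it arrived through. The sketch below assumes two hypothetical feeds, one transcribed from the in-person panel and one exported from a remote scoring form, each as (judge, entry, score) tuples. It merges them and reports missing, conflicting, and unassigned scores instead of silently accepting them. None of these names come from Judgify or Evalato.

```python
# Hypothetical assignments: every (judge, entry) pair that must be scored
assignments = {
    ("judge_a", "entry_1"), ("judge_a", "entry_2"),
    ("judge_b", "entry_1"), ("judge_b", "entry_2"),
}

# Hypothetical feeds of (judge, entry, score) tuples
in_person = [("judge_a", "entry_1", 8), ("judge_a", "entry_2", 7)]
remote = [
    ("judge_b", "entry_1", 9),
    ("judge_b", "entry_1", 6),  # resubmitted with a different value
]


def merge_scores(assignments, *feeds):
    """Merge score feeds; return (merged, missing, conflicts, unexpected)."""
    merged, conflicts = {}, set()
    for feed in feeds:
        for judge, entry, score in feed:
            key = (judge, entry)
            if key in merged and merged[key] != score:
                conflicts.add(key)  # same judge, same entry, different scores
            merged[key] = score  # last value wins; conflicts need human review
    missing = assignments - set(merged)
    unexpected = set(merged) - assignments  # scores for pairs nobody assigned
    return merged, missing, conflicts, unexpected


merged, missing, conflicts, unexpected = merge_scores(assignments, in_person, remote)
print("missing:", missing)
print("conflicts:", conflicts)
print("unexpected:", unexpected)
```

Flagging rather than auto-resolving is a deliberate choice here: a remote judge who changed their mind and a data-entry mistake look identical to the code, so a person should decide which score stands.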

Security & Compliance: A Growing Concern

Data security is paramount, especially when dealing with sensitive submission materials – intellectual property, creative works, or personal information. Contest management platforms must employ robust security measures to protect against data breaches and unauthorized access. This includes encryption, access controls, and regular security audits. The reputational damage from a security incident could be significant.

Compliance with data privacy regulations, such as GDPR and CCPA, is also critical. Platforms need to provide organizers with the tools to obtain necessary consent from participants and to manage data in a compliant manner. This includes features like data anonymization and the ability to fulfill data subject access requests. I anticipate increased scrutiny in this area as regulations become more stringent.

Both Judgify and Evalato highlight their commitment to security and compliance on their websites. They both mention using secure servers and implementing access controls. However, the level of detail provided varies, and it’s important for organizers to conduct their own due diligence to ensure that the platform meets their specific security requirements.
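Blind judging and data minimization both come down to keeping identifying details out of what judges see. A minimal sketch, assuming submissions arrive as Python dicts with the hypothetical fields shown, strips those fields and replaces the submission ID with a keyed HMAC code that organizers holding the key can re-link but judges cannot. This is pseudonymization, not anonymization: under GDPR, pseudonymized data still counts as personal data, and free-text fields such as an abstract can still reveal who wrote them.

```python
import hashlib
import hmac

# Hypothetical secret kept by organizers only; load it from a secrets store in practice
SECRET_KEY = b"replace-with-a-long-random-secret"

# Fields this sketch assumes could identify the entrant
IDENTIFYING_FIELDS = {"id", "name", "email", "organization"}


def judge_view(submission, key=SECRET_KEY):
    """Return a copy of the submission that is safe to show blind judges."""
    # HMAC-SHA256 of the submission ID gives a stable code per entry that
    # cannot be recomputed without the key.
    code = hmac.new(key, str(submission["id"]).encode("utf-8"), hashlib.sha256).hexdigest()
    view = {k: v for k, v in submission.items() if k not in IDENTIFYING_FIELDS}
    view["entry_code"] = code[:12]  # shortened for readability on score sheets
    return view


submission = {
    "id": 101,
    "name": "Ada Example",
    "email": "ada@example.com",
    "organization": "Example Labs",
    "title": "Solar-powered kiosk",
    "abstract": "A low-cost kiosk design for rural clinics.",
}
print(judge_view(submission))
```

Keying the code on the submission ID rather than the entrant's email means two entries from the same person get different codes, so judges cannot tell they share an author.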

Evalato & Judgify: A Closer Look

Evalato positions itself as comprehensive awards management software, geared towards larger organizations running complex programs. It offers features like custom branding, advanced reporting, and integration with other marketing tools. While powerful, this breadth can make it feel overwhelming for smaller competitions. Its strength appears to lie in handling high volumes of submissions and managing intricate judging workflows.

Judgify, in contrast, prioritizes simplicity and ease of use. Its interface is clean and intuitive, making it a good choice for organizations that need a straightforward solution. It offers essential features like submission management, online judging, and automated scoring. It's particularly well-suited for abstract submissions, case studies, and other types of content-based competitions. I find the focus on user experience to be a real differentiator.

A key difference lies in their approach to customization. Evalato offers more extensive customization options, allowing organizers to tailor the platform to their exact needs. Judgify, while still customizable, is more opinionated in its design, guiding users through a more structured workflow. This can be a benefit for less technically inclined organizers, but may limit flexibility for those with more specialized requirements.

Functionally, both platforms allow for blind judging, weighted scoring, and rubric-based evaluation, but the implementation details differ. Evalato’s reporting features are more granular, providing detailed insights into judging patterns and submission performance. Judgify's reporting is more focused on overall results and key metrics.

Moving beyond simple rankings

Simple ranking isn’t always enough. Many competitions benefit from more sophisticated scoring methods, such as weighted criteria, where different aspects of a submission are assigned different levels of importance. Blind judging – concealing the identity of the submitter from the judges – can help to reduce bias. Rubric-based evaluation, using a predefined set of criteria and scoring guidelines, promotes consistency and fairness.
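Weighted, rubric-based scoring is easy to reason about once it is written down. The sketch below is a minimal Python illustration, not either platform's formula: the three criteria, their weights, and the 0-10 scale are hypothetical. It checks that every criterion is scored and within range, then rescales the weighted total to 0-100.

```python
import math

# Hypothetical rubric: criterion -> weight (weights must sum to 1.0)
RUBRIC = {"originality": 0.40, "execution": 0.35, "impact": 0.25}
MAX_POINTS = 10  # each criterion scored from 0 to 10 in this sketch

assert math.isclose(sum(RUBRIC.values()), 1.0), "rubric weights must sum to 1"


def weighted_score(criterion_scores, rubric=RUBRIC, max_points=MAX_POINTS):
    """Combine one judge's per-criterion scores into a weighted 0-100 total."""
    if set(criterion_scores) != set(rubric):
        raise ValueError("every rubric criterion must be scored exactly once")
    for name, points in criterion_scores.items():
        if not 0 <= points <= max_points:
            raise ValueError(f"{name}: {points} is outside 0-{max_points}")
    total = sum(rubric[name] * points for name, points in criterion_scores.items())
    return round(100 * total / max_points, 2)


print(weighted_score({"originality": 8, "execution": 6, "impact": 9}))  # 75.5
```

With these weights, a point on originality moves the total more than a point on impact, which is the whole purpose of weighting. Keeping the validation in one place also stops a skipped criterion from quietly counting as zero.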

Evalato excels in supporting these advanced techniques. It allows organizers to create highly customized scoring rubrics and to assign different weights to different criteria. It also offers features like inter-rater reliability analysis, which helps to identify inconsistencies in judging. Judgify supports weighted scoring and rubric-based evaluation, but its customization options are less extensive.
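Inter-rater reliability has several standard measures. As one example (not necessarily what Evalato computes), Cohen's kappa compares how often two judges agree with how often they would agree by chance given how often each uses each label: κ = (p_o - p_e) / (1 - p_e). A minimal sketch for two judges giving hypothetical advance/reject decisions on the same entries:

```python
from collections import Counter


def cohens_kappa(ratings_a, ratings_b):
    """Cohen's kappa for two judges rating the same entries in the same order.

    1.0 means perfect agreement; 0.0 means no better than chance."""
    if len(ratings_a) != len(ratings_b) or not ratings_a:
        raise ValueError("both judges must rate the same non-empty list of entries")
    n = len(ratings_a)
    # p_o: share of entries where the two judges gave the same label
    observed = sum(a == b for a, b in zip(ratings_a, ratings_b)) / n
    # p_e: chance agreement implied by each judge's own label frequencies
    counts_a, counts_b = Counter(ratings_a), Counter(ratings_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / n**2
    if expected == 1:
        return 1.0  # both judges used one identical label for everything
    return (observed - expected) / (1 - expected)


judge_a = ["advance", "advance", "reject", "reject", "advance", "reject"]
judge_b = ["advance", "reject", "reject", "reject", "advance", "advance"]
print(round(cohens_kappa(judge_a, judge_b), 3))  # 0.333
```

Here the judges agree on four of six entries, but because each says "advance" half the time, chance alone would produce 50% agreement, so kappa is only about 0.33. A low value like that is a prompt to compare the two judges' notes, not proof that either one is wrong.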

Reporting matters just as much. Organizers need to analyze results, identify trends, and demonstrate the competition's value to stakeholders. Here the gap mirrors the rest of the comparison: Evalato reports in detail on judging patterns, submission performance, and overall competition statistics, while Judgify's reporting is more basic and centers on overall results and key metrics.

- Weighted Criteria

- Blind Judging

- Rubric-Based Evaluation

- Inter-rater Reliability Analysis

Feature Comparison: Evalato vs. Judgify (2026)

| Feature | Evalato | Judgify |
|---|---|---|
| Weighted Criteria | Strong | Moderate |
| Blind Judging | Strong | Strong |
| Custom Rubrics | Strong | Moderate |
| Exportable Reports | Strong | Strong |
| Data Visualization | Moderate | Moderate |
| Public Voting | Limited | Strong |
| Judging Management | Strong | Strong |
| Submission Management | Strong | Strong |

Illustrative comparison based on the article research brief. Verify current pricing, limits, and product details in the official docs before relying on it.

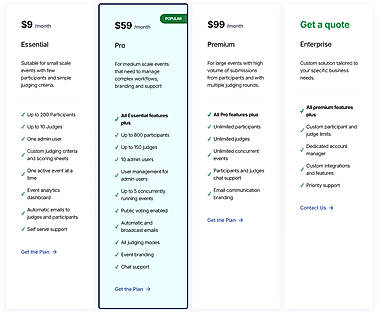

The reality of costs

Pricing information for these platforms can be difficult to obtain without requesting a custom quote. However, generally speaking, Evalato tends to be positioned as a higher-end solution, with a corresponding price tag. It often involves annual subscription fees based on the number of submissions or judges. Judgify offers more flexible pricing plans, including pay-per-event options, making it a more accessible choice for smaller organizations.

The total cost of ownership extends beyond the subscription fee. Setup costs, such as data migration and platform configuration, should also be considered. Additionally, some platforms charge extra for add-ons, such as custom branding or dedicated support. It's important to carefully evaluate all costs before making a decision.
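A quick way to follow that advice is to put every cost on the same multi-year basis. The sketch below uses made-up figures for two hypothetical plans, one annual subscription with setup and add-on fees and one pay-per-event; they are not Evalato or Judgify prices, which usually require a quote.

```python
def total_cost_of_ownership(years, annual_fee=0.0, per_event_fee=0.0,
                            events_per_year=0, setup_cost=0.0, annual_addons=0.0):
    """One-off setup cost plus all recurring costs over the given number of years."""
    recurring = annual_fee + per_event_fee * events_per_year + annual_addons
    return setup_cost + years * recurring


# Hypothetical figures for illustration only
subscription_plan = total_cost_of_ownership(
    years=3, annual_fee=6000, setup_cost=1500, annual_addons=1200)
per_event_plan = total_cost_of_ownership(
    years=3, per_event_fee=900, events_per_year=4, annual_addons=300)

print(subscription_plan)  # 23100.0
print(per_event_plan)     # 11700.0
```

Change events_per_year and the comparison can flip: at 8 events a year, the hypothetical per-event plan costs 3 x (7200 + 300) = 22,500, almost the same as the subscription.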

Ultimately, the best platform from a cost perspective will depend on the specific needs of the competition. A smaller organization running a simple event may find Judgify to be the more economical choice, while a larger organization with complex requirements may be willing to pay a premium for Evalato's advanced features.

